Neuromorphic Edge Processors

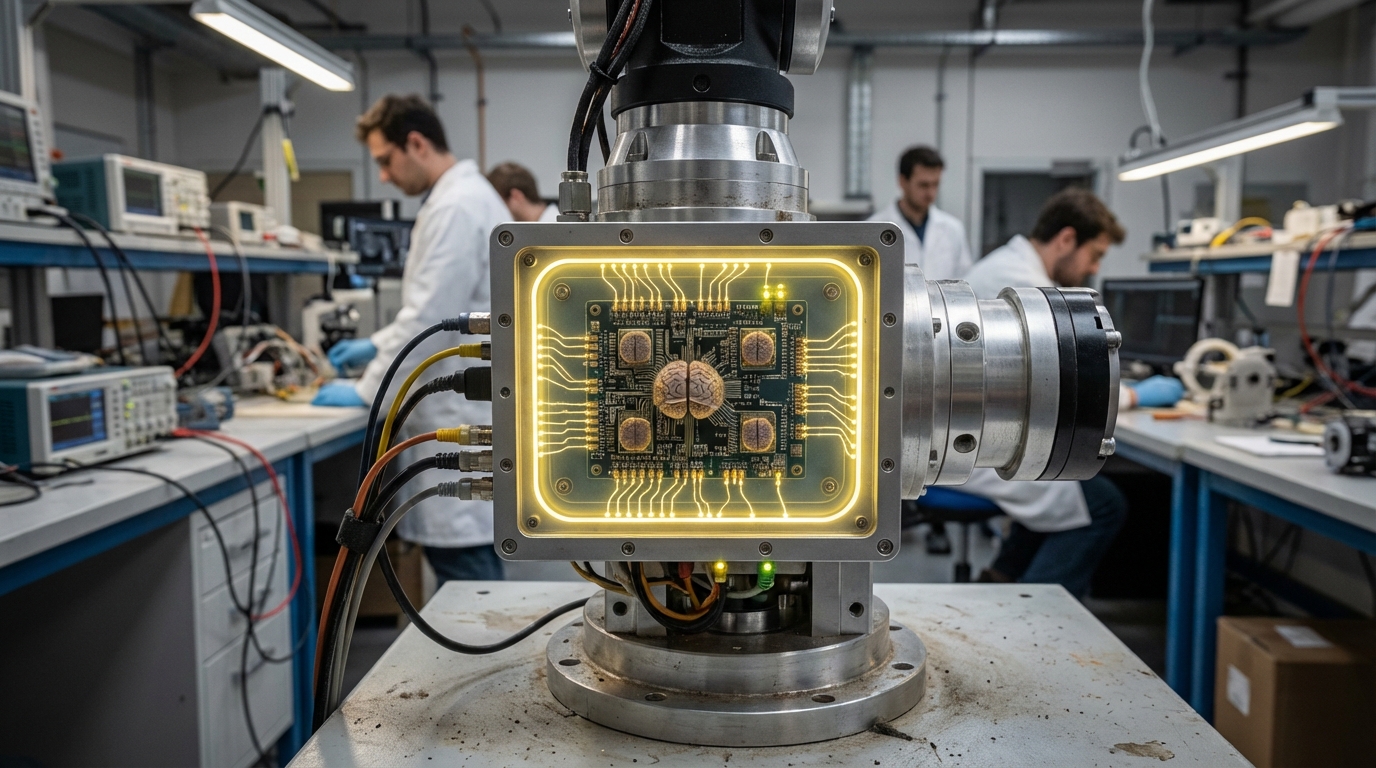

The telecommunications industry faces a fundamental challenge in deploying artificial intelligence across vast networks of edge devices: traditional AI processors consume too much power and often depend on constant cloud connectivity, which makes them impractical for remote cell towers, IoT gateways, and other energy-constrained network infrastructure. Neuromorphic edge processors address this challenge by rethinking how computation occurs at the network edge. Unlike conventional processors that execute instructions sequentially, these brain-inspired chips process information through spiking neural networks, architectures that mimic the event-driven communication patterns of biological neurons. When a neuron-like processing element accumulates enough input to cross its firing threshold, it emits a spike that propagates through the network and triggers further computation only when and where it is needed. Because processing is asynchronous and event-driven, the chip draws most of its power only while it is actively handling events, and it can achieve energy-efficiency improvements of roughly 100x to 1000x over traditional GPU-based AI inference for many sparse, event-driven workloads. The processors also integrate memory and computation within the same physical architecture, which avoids much of the energy-intensive data movement that dominates conventional computing.
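The firing behavior described above can be illustrated with a leaky integrate-and-fire neuron, one of the simplest spiking models. The sketch below is a software illustration only, not the programming model of any particular chip; the function name `run_event_driven_lif` and the `threshold` and `leak` values are assumptions chosen for the example. The neuron is updated only when an input event arrives, and the leak for the silent steps in between is applied lazily.

```python
def run_event_driven_lif(events, threshold=1.0, leak=0.9):
    """Simulate one leaky integrate-and-fire neuron, updated only on input events.

    events: list of (time_step, weight) tuples sorted by time_step.
    Returns the list of time steps at which the neuron fired.
    All parameter values are illustrative assumptions.
    """
    potential = 0.0
    last_time = 0
    spike_times = []
    for time_step, weight in events:
        # Apply the leak for every silent step since the previous event in one go,
        # so no work is done while the input is quiet.
        potential *= leak ** (time_step - last_time)
        potential += weight
        last_time = time_step
        if potential >= threshold:
            # Threshold crossed: emit a spike and reset the membrane potential.
            spike_times.append(time_step)
            potential = 0.0
    return spike_times


# Two closely spaced inputs push the neuron over threshold; the isolated one does not.
events = [(2, 0.6), (3, 0.6), (6, 0.3)]
print(run_event_driven_lif(events))  # [3]
```

The loop runs once per input event, not once per time step, so the amount of work scales with activity and not with elapsed time. That is the property the energy argument relies on.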

For telecommunications providers managing millions of distributed network nodes, neuromorphic processors enable a shift from centralized cloud intelligence to autonomous edge decision-making. Cell towers equipped with these chips can perform real-time spectrum analysis, identifying interference patterns and optimizing signal routing without transmitting massive datasets to distant data centers. In industrial IoT deployments, neuromorphic gateways continuously monitor sensor streams for anomalies—detecting equipment vibration signatures that indicate impending failure or identifying unusual network traffic patterns that suggest security breaches—all while operating on minimal power budgets suitable for solar or battery operation. This local processing capability proves particularly valuable in remote locations where network connectivity is intermittent or bandwidth-constrained, as critical decisions can be made locally without dependence on cloud services. The technology also addresses latency-sensitive applications where milliseconds matter, such as autonomous vehicle coordination or industrial automation, by processing sensory data and making decisions within the immediate physical environment.
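One way to picture how a continuous sensor stream becomes sparse events for this kind of monitoring is send-on-delta encoding, where an event is emitted only when a reading moves far enough from the last reported value. The sketch below is a minimal illustration under assumed values: the function name `delta_encode`, the `delta` threshold, and the sample vibration readings are all hypothetical and do not come from any specific deployment.

```python
def delta_encode(samples, delta=0.5):
    """Convert a sampled signal into sparse (index, direction) events.

    An event is emitted only when the signal has moved by at least `delta`
    from the last reference value; direction is +1 for a rise, -1 for a fall.
    The threshold is an illustrative assumption.
    """
    if not samples:
        return []
    events = []
    reference = samples[0]
    for index, value in enumerate(samples[1:], start=1):
        change = value - reference
        if abs(change) >= delta:
            events.append((index, 1 if change > 0 else -1))
            # Track the new level so small jitter around it stays silent.
            reference = value
    return events


# Hypothetical vibration amplitudes: steady, then a brief excursion, then steady again.
vibration = [1.0, 1.1, 1.0, 0.9, 1.0, 2.4, 2.5, 1.0, 1.1]
print(delta_encode(vibration))  # [(5, 1), (7, -1)]
```

Nine samples produce only two events, both around the excursion. A steady signal generates no downstream work at all, which is why continuous monitoring can fit within a small power budget.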

Early deployments of neuromorphic edge processors are emerging across telecommunications infrastructure, and research initiatives are exploring their integration into 5G and future 6G network architectures. Pilot programs have demonstrated their effectiveness in predictive maintenance, where the processors analyze vibration, temperature, and acoustic data from network equipment to forecast failures days or weeks in advance, enabling proactive repairs that minimize service disruptions. The technology aligns with broader industry trends toward distributed intelligence and edge computing, as network operators seek to reduce backhaul bandwidth costs while improving service responsiveness. Telecommunications networks continue to densify as small cells, IoT devices, and edge computing nodes proliferate. In that setting, the energy efficiency and autonomous operation of neuromorphic processors position them as essential components of sustainable, intelligent network infrastructure that can scale without proportional increases in power consumption or operational complexity.