Neuro-Adaptive Learning Environments

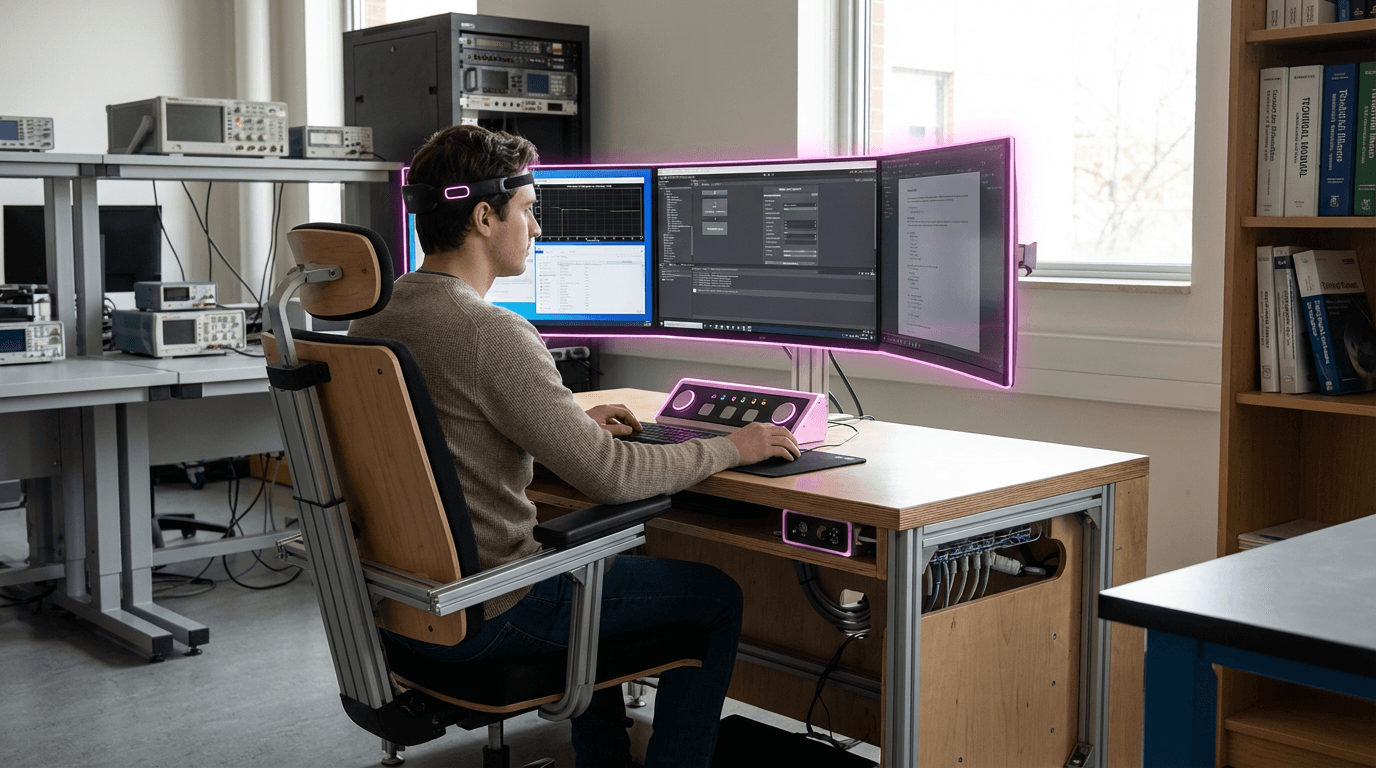

Neuro-adaptive learning environments combine neuroscience, educational technology, and real-time biometric monitoring to create educational experiences that respond dynamically to a learner's cognitive state. These systems integrate brain-computer interfaces (BCI), eye-tracking devices, and other physiological sensors to continuously monitor indicators of cognitive load, attention, stress, and engagement. By analyzing neural activity, pupil dilation, blink rates, and gaze patterns, the technology can detect when a learner is struggling with material that is too challenging, becoming disengaged because content is too simple, or experiencing stress that impedes learning. The underlying algorithms process these biometric signals in real time, creating a feedback loop that allows the system to adjust the learning experience immediately. This approach moves beyond traditional adaptive learning systems that rely solely on performance metrics such as test scores, drawing instead on physiological indicators of the learner's mental state.
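The signal-processing side of that feedback loop can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the `BiometricSample` fields, the weights, and the smoothing factor are hypothetical, and each input is assumed to be already normalized to the range 0 to 1 so that a higher value means more load. A real system would need validated, per-learner calibration rather than fixed weights.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class BiometricSample:
    """One sensor reading. Each field is assumed to be pre-normalized
    to [0, 1], with higher values meaning more cognitive load."""
    pupil_dilation: float
    blink_signal: float
    eeg_workload: float


class LoadEstimator:
    """Combines the signals with a weighted sum, then smooths the result
    with an exponential moving average so one noisy reading does not
    trigger an adjustment. Weights and alpha are placeholder values."""

    def __init__(
        self,
        alpha: float = 0.3,
        weights: Tuple[float, float, float] = (0.4, 0.2, 0.4),
    ) -> None:
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        if abs(sum(weights) - 1.0) > 1e-9:
            raise ValueError("weights must sum to 1")
        self.alpha = alpha
        self.weights = weights
        self.estimate: Optional[float] = None

    def update(self, sample: BiometricSample) -> float:
        """Fold one new sample into the running load estimate."""
        w_pupil, w_blink, w_eeg = self.weights
        raw = (
            w_pupil * sample.pupil_dilation
            + w_blink * sample.blink_signal
            + w_eeg * sample.eeg_workload
        )
        if self.estimate is None:
            # First reading: nothing to smooth against yet.
            self.estimate = raw
        else:
            self.estimate = self.alpha * raw + (1 - self.alpha) * self.estimate
        return self.estimate


if __name__ == "__main__":
    estimator = LoadEstimator()
    for reading in [
        BiometricSample(0.2, 0.3, 0.25),
        BiometricSample(0.8, 0.6, 0.9),
        BiometricSample(0.85, 0.7, 0.9),
    ]:
        print(round(estimator.update(reading), 3))
```

The smoothing step is the design choice worth noting: a lower `alpha` makes the estimate slower to react but less likely to change the lesson in response to a single blink or sensor glitch.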

The educational sector has long grappled with the challenge of delivering personalized instruction at scale, particularly in contexts where individual tutoring is impractical or cost-prohibitive. Traditional classroom settings and even conventional e-learning platforms struggle to keep each student within their zone of proximal development, the sweet spot where material is challenging enough to promote growth but not so difficult that it causes frustration and disengagement. Neuro-adaptive systems address this limitation by providing continuous, individualized calibration of learning experiences. When cognitive load indicators suggest a student is overwhelmed, the system might simplify explanations, introduce additional scaffolding, slow the pace of new information, or shift to a different presentation modality. Conversely, when biometric data indicates boredom or underutilization, the platform can accelerate content delivery, introduce more complex challenges, or present material in more engaging formats. This capability is particularly valuable for learners with diverse needs, including those with learning differences, attention disorders, or varying levels of prior knowledge in a subject area.
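The calibration logic described above can be sketched as a simple threshold policy. This is an illustrative assumption, not a description of any particular product: the `choose_action` and `adjust_difficulty` functions, the 0-to-1 load scale, the threshold values, and the 1-to-10 difficulty range are all hypothetical.

```python
from enum import Enum


class Action(Enum):
    SIMPLIFY = "simplify"      # e.g. add scaffolding, slow the pace
    HOLD = "hold"              # learner appears to be in a productive range
    ACCELERATE = "accelerate"  # e.g. faster delivery, harder challenges


def choose_action(load: float, overload: float = 0.75, underload: float = 0.30) -> Action:
    """Map an estimated cognitive load in [0, 1] to an instructional action.

    The thresholds are placeholder values; a real system would need to
    calibrate them per learner and validate them against outcomes.
    """
    if not 0.0 <= load <= 1.0:
        raise ValueError("load must be in [0, 1]")
    if load >= overload:
        return Action.SIMPLIFY
    if load <= underload:
        return Action.ACCELERATE
    return Action.HOLD


def adjust_difficulty(level: int, action: Action, min_level: int = 1, max_level: int = 10) -> int:
    """Move the difficulty level one step in the chosen direction,
    clamped to the allowed range."""
    if action is Action.SIMPLIFY:
        level -= 1
    elif action is Action.ACCELERATE:
        level += 1
    return max(min_level, min(max_level, level))


if __name__ == "__main__":
    level = 5
    for load in [0.82, 0.55, 0.20]:
        action = choose_action(load)
        level = adjust_difficulty(level, action)
        print(load, action.value, level)
```

The gap between the two thresholds acts as a dead band in which nothing changes, which keeps the system from adjusting the lesson on every small fluctuation in the load estimate.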

Early implementations of neuro-adaptive learning environments have emerged primarily in research settings and specialized educational contexts, with pilot programs exploring applications in language learning, STEM education, and professional training. Some aviation and medical training programs have begun experimenting with these systems to optimize skill acquisition in high-stakes fields where maintaining optimal cognitive engagement is critical. The technology aligns with broader trends toward personalized learning, competency-based education, and the integration of neuroscience insights into pedagogical practice. As BCI and eye-tracking hardware becomes more affordable and less intrusive (some systems now require only webcam-based eye tracking rather than specialized equipment), the potential for wider adoption increases. However, significant challenges remain, including concerns about data privacy, the need for robust validation of the relationship between biometric signals and learning outcomes, and questions about whether constant optimization might inadvertently prevent learners from developing resilience and self-regulation skills. Looking forward, these environments may become integral components of educational ecosystems, particularly as advances in artificial intelligence enable more sophisticated interpretation of physiological data and more nuanced instructional adjustments. That could change how education is approached at every level, from elementary schooling to professional development.

Related Organizations

Develops BMI technology including the FocusCalm headband and prosthetic hands.

Pioneering research group led by Rosalind Picard that develops systems to recognize, interpret, and simulate human affects, including adaptive interfaces.

The Soft Machines Lab at CMU develops soft multifunctional materials and robots.

The global leader in eye-tracking technology, providing the sensor stack required for dynamic foveated rendering.

Develops VR-compatible brain sensor modules to analyze user emotion and stress levels during immersive experiences.

Developer of the SenzeBand and Memorie app, a cognitive training solution for children and seniors.

Develops semi-dry and dry EEG wearable devices for human behavior research and neurotechnology applications.

Develops the Muse EEG headband and software platform that adapts audio soundscapes in real time based on the user's brain state (meditation/focus).

Creates open-source brain-computer interface tools and the Galea headset (integrating with VR) for researching physiological responses.