Spatial Computing

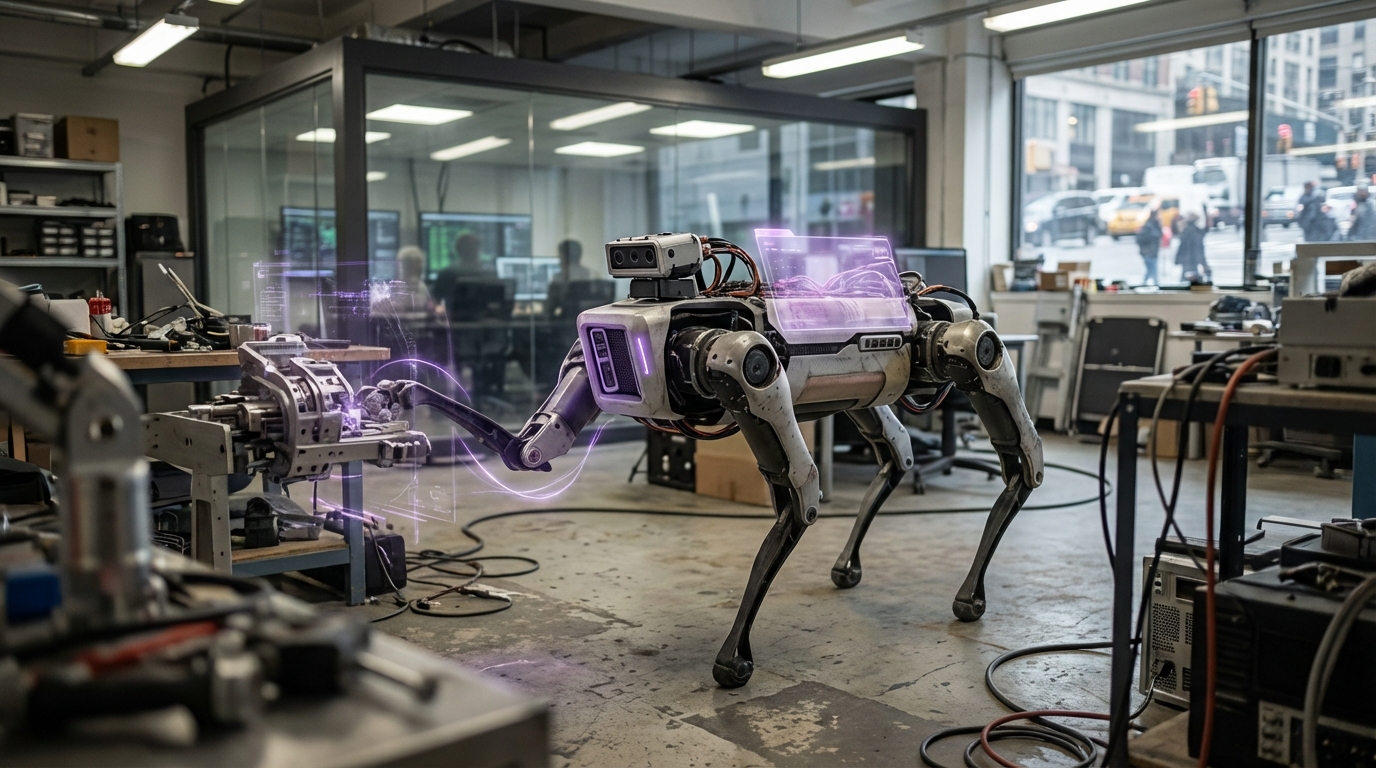

Spatial computing builds digital representations of physical spaces by combining computer vision, depth sensing, LiDAR, and other sensor technologies to map, understand, and interact with three-dimensional environments in real time. Devices and systems use these representations to determine spatial relationships, object positions, surfaces, and physical constraints, so that digital content can be precisely overlaid on, or interact with, the physical world. The result is a tighter integration between digital and physical realms, supporting augmented reality experiences, spatially aware robots, and intelligent systems that understand their physical context.

This opens new forms of human-computer interaction: digital interfaces that exist in three-dimensional space, robots that understand and navigate physical environments, and systems that can reason about spatial relationships. Spatial computing combines simultaneous localization and mapping (SLAM), object recognition, depth perception, and spatial reasoning to build a comprehensive understanding of physical spaces. Applications include augmented and mixed reality devices, autonomous robots and vehicles, smart buildings that track occupancy and space usage, and urban planning tools that model and simulate spatial changes. Companies such as Apple (with Vision Pro), Meta, and various robotics firms are advancing spatial computing capabilities.
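As a rough illustration of the depth perception step, a depth reading at a pixel can be back-projected into a 3D point using a pinhole camera model. The sketch below is a minimal example under stated assumptions, not the method of any product named here: the `Intrinsics` type, the function names, and the sample focal lengths and principal point are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Intrinsics:
    """Hypothetical pinhole camera parameters, in pixels."""
    fx: float  # focal length along x
    fy: float  # focal length along y
    cx: float  # principal point x
    cy: float  # principal point y


def back_project(u, v, depth_m, k):
    """Map pixel (u, v) with depth in meters to a camera-frame 3D point."""
    x = (u - k.cx) * depth_m / k.fx
    y = (v - k.cy) * depth_m / k.fy
    return (x, y, depth_m)


def depth_map_to_points(depth, k):
    """Convert a row-major depth map (list of rows) to a list of 3D points.

    Pixels with depth <= 0 are treated as missing readings and skipped.
    """
    points = []
    for v, row in enumerate(depth):
        for u, d in enumerate(row):
            if d > 0:
                points.append(back_project(u, v, d, k))
    return points


if __name__ == "__main__":
    # Assumed intrinsics for a 640x480 sensor; values are illustrative only.
    k = Intrinsics(fx=500.0, fy=500.0, cx=320.0, cy=240.0)
    print(back_project(420, 240, 2.0, k))
```

A point cloud produced this way is only expressed in the camera's own frame; placing it in a shared map of the environment additionally requires the camera pose, which is what SLAM estimates.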

At TRL 7, spatial computing is commercially deployed in applications including AR/VR devices, autonomous systems, and smart infrastructure. It still faces challenges: accuracy and precision in complex environments, the computational cost of real-time processing, privacy concerns around spatial data collection, and interoperability between different spatial computing systems. As sensor technology improves and processing power increases, these systems are becoming more capable. The technology could transform how we interact with computers, enabling interfaces that exist in physical space, robots that operate smoothly in human environments, and systems that understand and optimize spatial relationships, potentially blurring the boundary between the digital and the physical.

Related Organizations

Developing 'Apple Intelligence', a personal intelligence system integrated into iOS/macOS that uses on-device context to mediate tasks and information.

AR platform company that develops the Lightship ARDK and owns Scaniverse, a 3D scanning app leveraging LiDAR.

United States · Consortium

Maintains the Vulkan API, which includes cross-platform extensions for hardware-accelerated ray tracing.

AR headset manufacturer utilizing dynamic dimming and eye-tracking for optimized rendering.

Offers the AI Stack which includes tools for hardware-aware model efficiency and architecture search.

Provides spatial mapping and visual positioning technology that allows for city-scale AR experiences; acquired by Hexagon.

Manufacturer of consumer AR glasses (Air, Light) that tether to phones or computing pucks.

Specializes in AR glasses and AI, producing the Rokid Max and Station.

United States · Company

Provides a platform for 3D geospatial data, enabling developers to stream real-world terrain into game engines like Unreal and Unity.

Supplier of smart glasses and Augmented Reality (AR) technologies.