Explainable Artificial Intelligence (XAI)

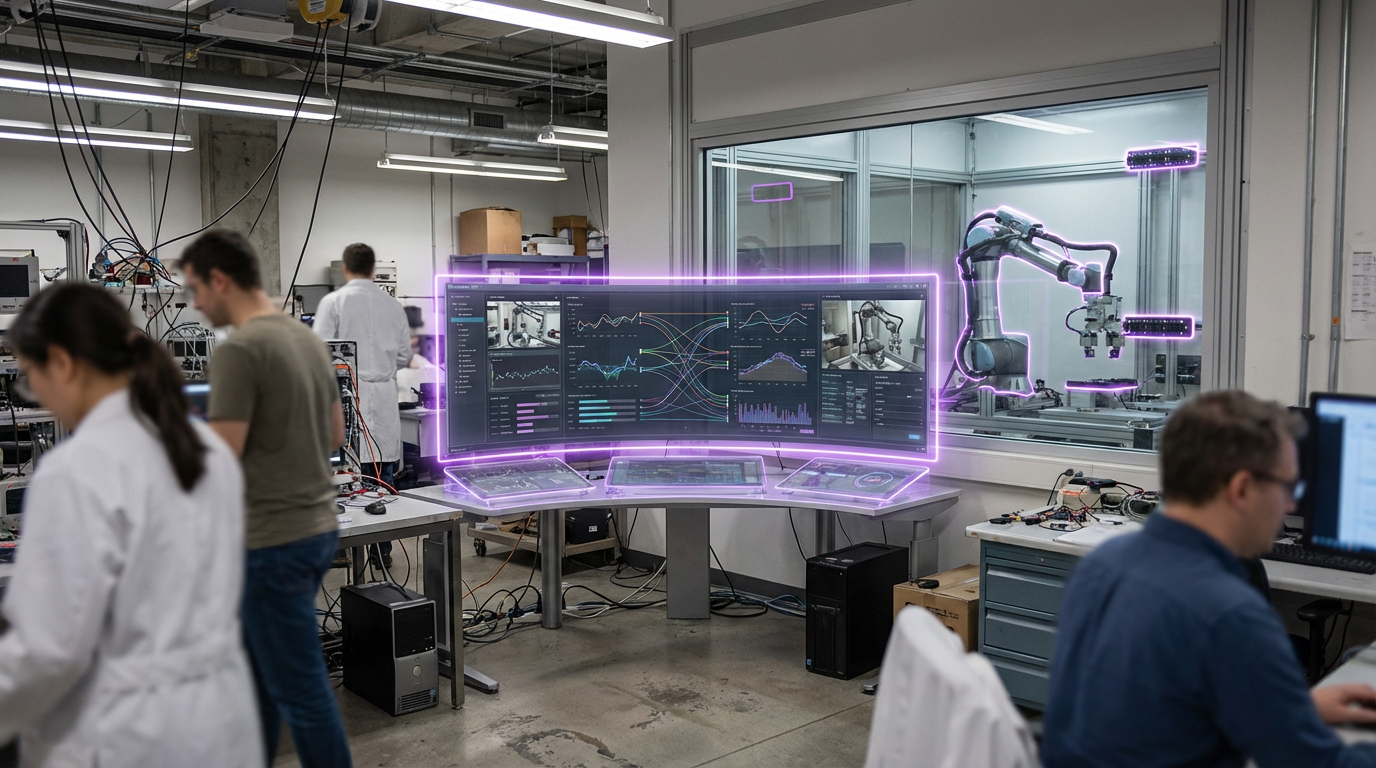

Explainable Artificial Intelligence (XAI) encompasses techniques and methods that make AI system decisions, predictions, and behaviors understandable to humans. As AI systems become more complex and are deployed in critical applications, the ability to understand why an AI made a particular decision becomes essential for trust, debugging, compliance, and ethical oversight. XAI techniques include model interpretability methods that reveal how models work, post-hoc explanation systems that explain decisions after they're made, and inherently interpretable models designed to be understandable from the start.
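To make the idea of a post-hoc explanation concrete, here is a minimal sketch of permutation importance, one common model-agnostic technique: shuffle a single input feature and measure how much the model's error grows. The `black_box` function, the synthetic data, and all parameter values below are assumptions made up for illustration, not part of any particular XAI product.

```python
import random
from typing import Callable, List, Sequence

Model = Callable[[Sequence[float]], float]


def black_box(row: Sequence[float]) -> float:
    """Hypothetical opaque model: leans on feature 0, slightly on 1, ignores 2."""
    return 3.0 * row[0] + 0.5 * row[1]


def mean_squared_error(model: Model, rows: List[List[float]], targets: List[float]) -> float:
    return sum((model(r) - t) ** 2 for r, t in zip(rows, targets)) / len(rows)


def permutation_importance(
    model: Model,
    rows: List[List[float]],
    targets: List[float],
    n_repeats: int = 20,
    seed: int = 0,
) -> List[float]:
    """Average increase in error when each feature column is shuffled."""
    rng = random.Random(seed)
    baseline = mean_squared_error(model, rows, targets)
    importances = []
    for j in range(len(rows[0])):
        increase = 0.0
        for _ in range(n_repeats):
            column = [r[j] for r in rows]
            rng.shuffle(column)  # break the link between feature j and the target
            shuffled = [r[:j] + [v] + r[j + 1:] for r, v in zip(rows, column)]
            increase += mean_squared_error(model, shuffled, targets) - baseline
        importances.append(increase / n_repeats)
    return importances


# Synthetic data (assumption): three features drawn uniformly from [-1, 1].
data_rng = random.Random(42)
rows = [[data_rng.uniform(-1, 1) for _ in range(3)] for _ in range(200)]
targets = [black_box(r) for r in rows]

scores = permutation_importance(black_box, rows, targets)
for index, score in enumerate(scores):
    print(f"feature {index}: importance {score:.3f}")
```

The feature the model relies on most receives the largest score, and the ignored feature scores zero. The method needs only the ability to call the model, which is why it counts as post-hoc rather than inherently interpretable.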

XAI addresses the "black box" problem, in which complex AI systems make decisions that humans cannot understand or verify. This is particularly critical in regulated industries, high-stakes applications, and situations where decisions affect people's lives or rights. XAI enables stakeholders to understand AI reasoning, verify that decisions are fair and appropriate, debug problems, and build trust in AI systems. Applications include financial services, where decisions must be explainable for regulatory compliance; healthcare, where doctors need to understand AI recommendations; and legal systems, where decisions must be justifiable. Both companies and research institutions are developing XAI techniques and tools.
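As an illustration of a decision that is explainable by construction, the sketch below scores a loan applicant with a small additive model and reports how much each input pushed the score up or down. The feature names, weights, bias, and threshold are all hypothetical values chosen for the example; they do not come from any real lender or regulation.

```python
from typing import Dict, List, Tuple

# Hypothetical weights for an illustrative linear scoring model.
WEIGHTS: Dict[str, float] = {
    "income_k": 0.5,        # annual income in thousands
    "debt_ratio": -40.0,    # debt-to-income ratio between 0 and 1
    "late_payments": -5.0,  # count of late payments on record
}
BIAS = 10.0
THRESHOLD = 20.0  # assumed approval cutoff


def explain_decision(
    applicant: Dict[str, float],
) -> Tuple[bool, float, List[Tuple[str, float]]]:
    """Return the decision, the score, and per-feature contributions."""
    contributions = [(name, WEIGHTS[name] * applicant[name]) for name in WEIGHTS]
    score = BIAS + sum(value for _, value in contributions)
    # Sort so the factors that hurt the applicant most come first.
    contributions.sort(key=lambda item: item[1])
    return score >= THRESHOLD, score, contributions


approved, score, reasons = explain_decision(
    {"income_k": 60.0, "debt_ratio": 0.5, "late_payments": 2.0}
)
print("approved" if approved else "declined", f"(score {score:.1f})")
for name, contribution in reasons:
    print(f"  {name}: {contribution:+.1f}")
```

Because the score is a plain sum, each contribution is an exact account of the decision rather than an approximation, which is the property that makes such models attractive where a decision has to be justified to the person it affects.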

At Technology Readiness Level (TRL) 5, explainable AI techniques are available and being integrated into AI systems, though several obstacles remain: the trade-off between model complexity and explainability, the difficulty of ensuring explanations are accurate rather than misleading, the need for explanations that are useful to different audiences, and the cost of preserving model performance while adding explainability. As regulations require AI explainability and trust becomes essential for adoption, XAI is becoming increasingly important. It could enable broader, safer adoption of AI by making systems transparent and auditable, allowing AI to be deployed in critical applications where understanding and trust are essential, while also helping to identify and correct biases or errors.

Related Organizations

Runs the Semantic Forensics (SemaFor) program to develop technologies for automatically detecting, attributing, and characterizing falsified media.

Provides Model Performance Management (MPM) to monitor, explain, and analyze AI models in production.

Provides AI software for credit underwriting that includes automated explainability for compliance (Zest Automated Machine Learning).

A model monitoring platform that specializes in explainability, bias detection, and performance tracking.

Long-standing leader in neuro-symbolic AI, combining neural networks with logical reasoning for enterprise applications.

The US federal agency leading the global competition to select and standardize post-quantum cryptographic algorithms.

Provides an AI governance platform that helps enterprises measure and monitor the fairness and performance of their AI systems.

An ML observability platform that helps teams detect issues, troubleshoot, and improve model performance in production.

Developers of the Gemini family of models, which are trained from the start to be multimodal across text, images, video, and audio.

Provides Driverless AI, an AutoML platform that includes architecture search and hyperparameter tuning.

Offers a platform for creating collaborative data ecosystems using federated learning and privacy-preserving technologies.