Multimodal Archive Indices

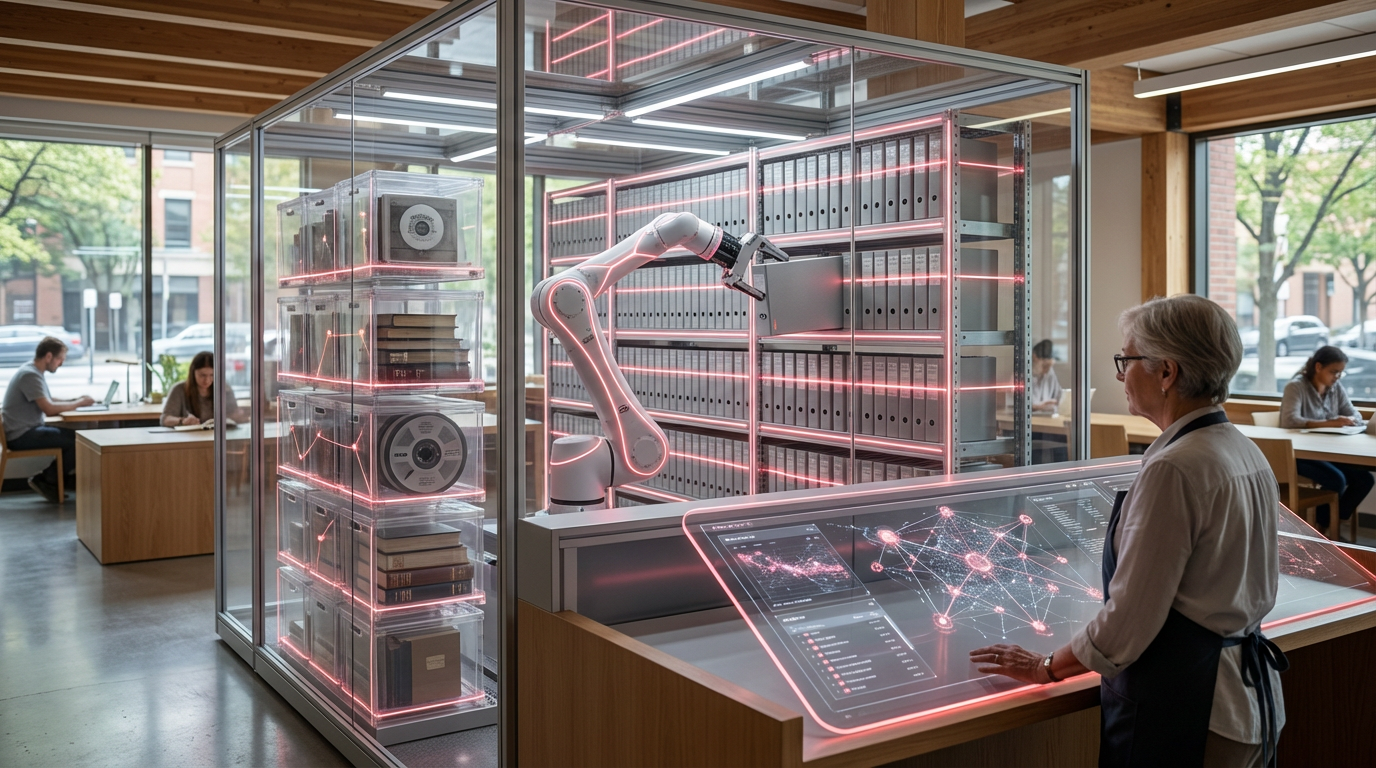

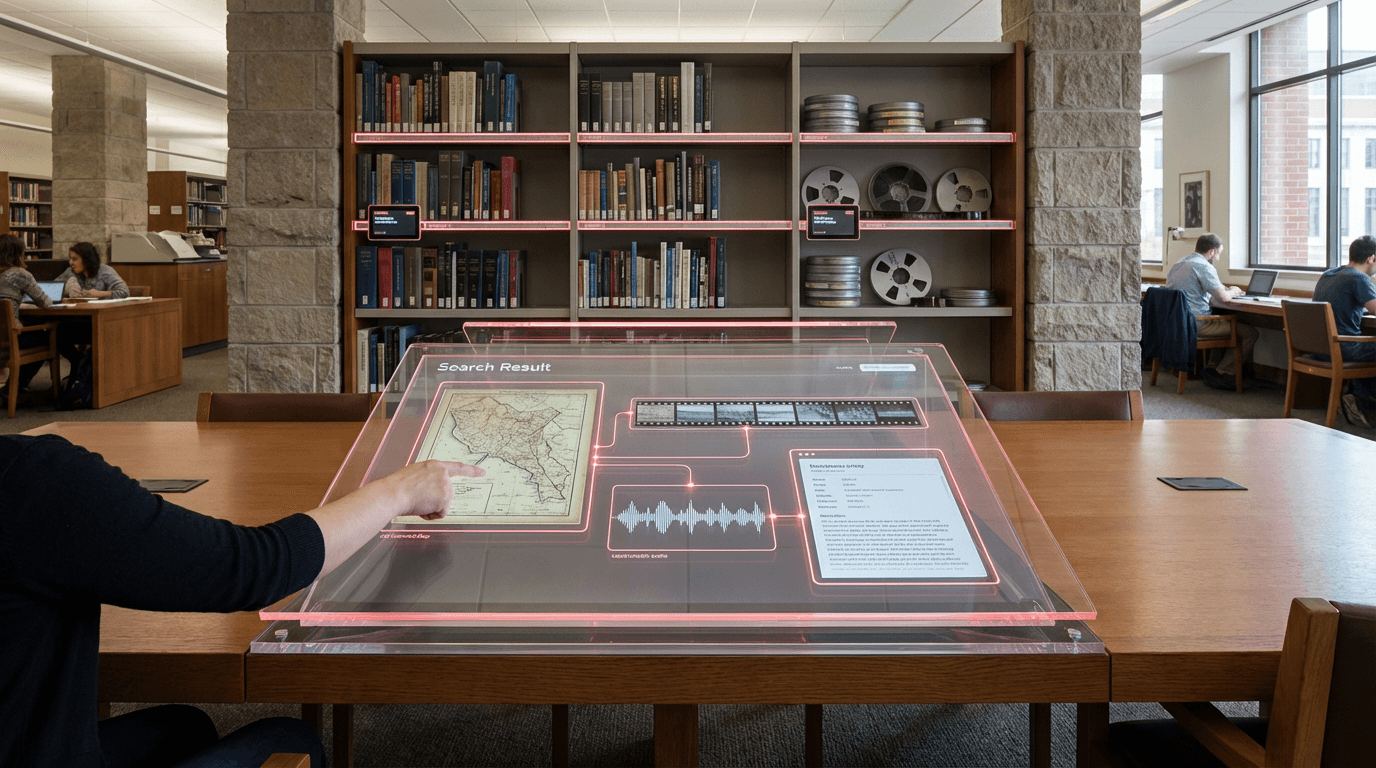

Traditional archival systems have long struggled with the fundamental challenge of siloed media formats, where text documents, photographs, audio recordings, and video footage exist in separate catalogues with incompatible metadata schemas. Researchers seeking comprehensive understanding of historical events or cultural phenomena have been forced to conduct multiple parallel searches across disconnected systems, often missing crucial connections between related materials stored in different formats. Multimodal archive indices address this fragmentation by leveraging foundation models—large-scale neural networks trained on diverse data types—to create unified representations of heterogeneous archival collections. These systems employ embedding techniques that transform books, manuscripts, photographic scans, oral histories, and film footage into points within a shared mathematical space, where conceptual similarity rather than format determines proximity. The underlying architecture typically combines vision transformers for visual materials, language models for textual content, and audio encoders for sound recordings, all aligned through contrastive learning methods that teach the system to recognise when different media types express related concepts or depict the same events.

For cultural heritage institutions, libraries, and research archives, this technology fundamentally transforms the discovery process and unlocks previously inaccessible connections within their holdings. Scholars can now pose queries in natural language—such as "industrial labour conditions in 1920s textile mills"—and retrieve relevant newspaper articles alongside factory photographs, worker testimonies recorded decades later, and documentary footage, all ranked by conceptual relevance rather than keyword matches. This capability is particularly valuable for interdisciplinary research, oral history projects, and investigations of underrepresented communities whose stories may be scattered across diverse media formats and institutional collections. Museums and archives also gain new tools for identifying gaps in their collections, discovering unexpected relationships between holdings, and creating more comprehensive digital exhibitions that weave together multiple forms of evidence. The technology addresses long-standing challenges in archival description, where the labour-intensive process of creating detailed metadata for every item has left vast portions of many collections effectively invisible to traditional search systems.

Early implementations are emerging in national libraries, university special collections, and cultural heritage consortia, where pilot projects demonstrate the potential for cross-collection discovery at unprecedented scale. Research institutions are deploying these systems to support projects ranging from climate history studies that combine scientific reports with photographic evidence of environmental change, to social history investigations that link census records with family photographs and recorded interviews. The technology shows particular promise for audiovisual archives, where the cost of manual transcription and cataloguing has historically limited access to film and sound collections. As foundation models continue to improve and computational costs decline, multimodal archive indices are positioned to become standard infrastructure for knowledge institutions, enabling what archivists call "collection as data" approaches where entire holdings become computationally accessible research corpora. This trajectory aligns with broader movements toward linked open data, digital humanities methodologies, and efforts to democratise access to cultural heritage, suggesting that future researchers will increasingly work across format boundaries that previous generations took for granted as fixed constraints.

Related Organizations

Information management consulting and software development firm.

Building foundation models specifically for video understanding, enabling semantic search and summarization of video content.

Open-source vector search engine with out-of-the-box modules for vectorization and RAG.

AI platform for computer vision, NLP, and audio recognition.

The research library that officially serves the United States Congress.

Provides a managed vector database optimized for high-performance AI similarity search.

Maintainers of Milvus, an open-source vector database for scalable similarity search.