Federated Archival Learning

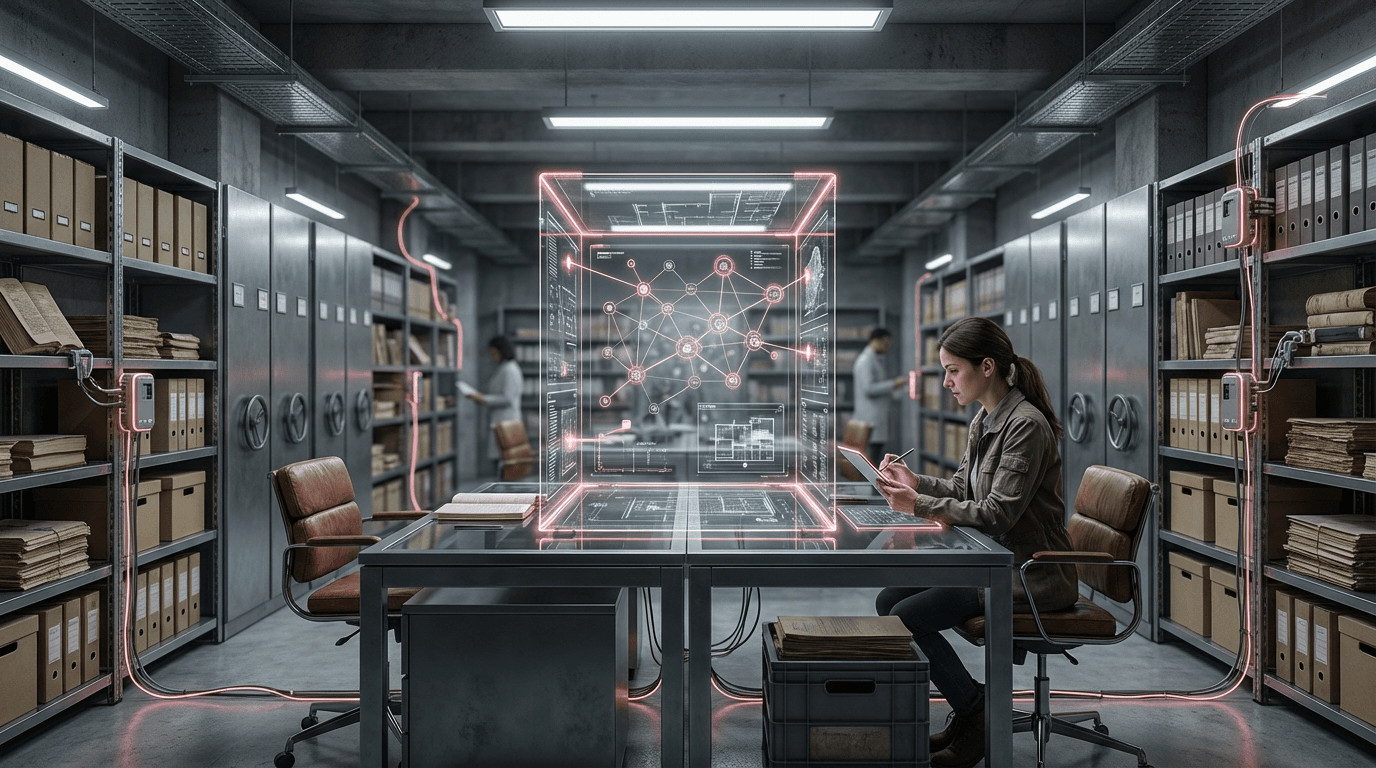

Federated Archival Learning applies federated learning to sensitive historical and cultural materials distributed across multiple institutions. Unlike traditional centralized machine learning, which requires aggregating all data in a single location, this framework lets algorithms train collaboratively across decentralized repositories while the data itself stays at its source. Each institution trains a local model on its own holdings (personal diaries, medical records, institutional archives, or indigenous knowledge collections) and shares only the learned parameters or model updates across the network. These updates are then aggregated into a global model that benefits from the collective knowledge without any institution needing to expose its sensitive materials. Because model updates can themselves leak information about the records they were trained on, deployments typically add privacy-enhancing techniques: differential privacy, a statistical method that adds calibrated noise to bound what any single record can reveal, and secure multi-party computation, a cryptographic method that lets updates be combined without any party seeing another's individual contribution. These measures greatly reduce, though they do not eliminate, the risk that shared updates can be reverse-engineered to reveal information about individual records or contributors.
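The training loop described above can be sketched in a few lines of Python. This is a minimal illustration rather than the method of any particular framework: it assumes a toy linear model, weighted averaging in the style of federated averaging, and an optional clip-and-add-noise step of the kind used in differentially private training. All names (`local_update`, `privatize_update`, `federated_average`, `train`) and parameters are hypothetical, and the noise here is not calibrated to any formal privacy budget.

```python
import math
import random
from typing import List, Optional, Sequence, Tuple

Vector = List[float]
Record = Tuple[Sequence[float], float]  # (features, target)


def local_update(weights: Vector, records: Sequence[Record],
                 lr: float = 0.1, epochs: int = 5) -> Vector:
    """Train a linear model on one institution's records; the records stay local."""
    w = list(weights)
    for _ in range(epochs):
        for x, y in records:
            err = sum(wi * xi for wi, xi in zip(w, x)) - y
            w = [wi - lr * err * xi for wi, xi in zip(w, x)]
    return w


def privatize_update(delta: Vector, clip_norm: float, noise_std: float,
                     rng: random.Random) -> Vector:
    """Clip the update's L2 norm, then add Gaussian noise to each coordinate.

    Illustrative only: noise_std is an assumed parameter, not derived from
    a privacy budget.
    """
    norm = math.sqrt(sum(d * d for d in delta))
    scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    return [d * scale + rng.gauss(0.0, noise_std) for d in delta]


def federated_average(updates: Sequence[Tuple[Vector, int]]) -> Vector:
    """Average site weights, weighting each site by its number of records."""
    total = sum(n for _, n in updates)
    dim = len(updates[0][0])
    return [sum(w[i] * n for w, n in updates) / total for i in range(dim)]


def train(sites: Sequence[Sequence[Record]], dim: int, rounds: int = 20,
          clip_norm: Optional[float] = None, noise_std: float = 0.0,
          seed: int = 0) -> Vector:
    """Run several rounds; only model weights cross institutional boundaries."""
    rng = random.Random(seed)
    global_w = [0.0] * dim
    for _ in range(rounds):
        updates = []
        for records in sites:
            local_w = local_update(global_w, records)
            delta = [lw - gw for lw, gw in zip(local_w, global_w)]
            if clip_norm is not None:
                delta = privatize_update(delta, clip_norm, noise_std, rng)
            shared = [gw + d for gw, d in zip(global_w, delta)]
            updates.append((shared, len(records)))
        global_w = federated_average(updates)
    return global_w
```

Calling `train(sites, dim=2)` with each element of `sites` holding one institution's `(features, target)` pairs returns the global weights. Passing `clip_norm` and `noise_std` trades some accuracy for a weaker signal from any single site. Secure aggregation is not shown here; in this sketch whoever runs `federated_average` still sees each site's individual update.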

The archival and library sectors face a persistent tension between maximizing research value and protecting privacy, cultural sensitivity, and institutional autonomy. Many of the most valuable historical collections, from Holocaust testimonies and indigenous oral histories to psychiatric case files and personal correspondence, contain information too sensitive to share openly yet too important to leave unanalyzed. Traditional digitization and open access initiatives often stall when confronted with privacy concerns, legal restrictions, or cultural protocols that limit data sharing. Federated Archival Learning offers a way past this impasse by enabling collaborative research and AI-driven discovery without data centralization. The approach is especially useful for cross-institutional research projects: museums, libraries, and archives can take part in large-scale pattern recognition, semantic analysis, and knowledge extraction while retaining custody of their collections and honoring donor agreements, cultural sensitivities, and regulatory requirements such as GDPR or HIPAA.

Early implementations of this framework are emerging in two areas. In medical archives, researchers are using federated approaches to analyze historical patient records across multiple hospitals without violating privacy regulations. In cultural heritage contexts, indigenous communities are exploring how AI might help preserve and analyze oral traditions without requiring centralized databases. Research institutions are also piloting federated systems that let scholars query sensitive collections remotely and receive aggregated insights rather than raw data access. The technology aligns with broader movements toward data sovereignty and ethical AI, particularly as archival institutions grapple with decolonization efforts and the need to respect community ownership of cultural materials. As concerns about data privacy intensify and regulatory frameworks become more stringent, federated approaches are likely to become standard practice for AI analysis of sensitive archival materials, reshaping how knowledge institutions balance openness with protection in an increasingly data-driven research landscape.
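The remote-query pattern mentioned above, where scholars receive aggregated insights rather than raw records, might look roughly like the following. This is a hedged sketch and not a description of any piloted system: `ArchiveNode`, `count_matching`, `federated_count`, and the `min_count` suppression threshold are all assumptions made for illustration. Each institution evaluates the query on its own records and reports only a count, withholding counts small enough to point at individuals.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional

Record = Dict[str, object]


@dataclass
class ArchiveNode:
    """One institution; its records are only ever read inside this object."""
    name: str
    records: List[Record]
    min_count: int = 5  # assumed threshold below which counts are withheld

    def count_matching(self, predicate: Callable[[Record], bool]) -> Optional[int]:
        """Return how many local records match, or None if the count is too small."""
        n = sum(1 for r in self.records if predicate(r))
        return n if n >= self.min_count else None


def federated_count(nodes: List[ArchiveNode],
                    predicate: Callable[[Record], bool]) -> Dict[str, object]:
    """Combine per-site counts; the caller never sees individual records."""
    reported = [(node.name, node.count_matching(predicate)) for node in nodes]
    total = sum(c for _, c in reported if c is not None)
    suppressed = [name for name, c in reported if c is None]
    return {"total": total, "suppressed_sites": suppressed}
```

A scholar would call `federated_count(nodes, lambda r: r["decade"] == 1940)` and get back a total plus the names of sites that declined to report. Threshold suppression alone is a weak safeguard, since repeated overlapping queries can still narrow down individuals, so a real deployment would likely combine it with query auditing or added noise.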

Related Organizations

A community-driven organization building privacy-preserving AI technology, including PySyft for encrypted, privacy-preserving deep learning.

Develops the Flower framework, an open-source, unified approach to federated learning that works with any workload, ML framework, and training environment.

Creators of TensorFlow Federated, a framework for machine learning on decentralized data.

Swiss Federal Institute of Technology, a global leader in privacy technologies and decentralized AI research.

Provides a privacy-preserving AI platform that enables federated learning for data privacy and regulatory compliance.

Offers a platform for creating collaborative data ecosystems using federated learning and privacy-preserving technologies.

Long-standing leader in neuro-symbolic AI, combining neural networks with logical reasoning for enterprise applications.

Developer of the Loihi neuromorphic research chip and Foveros 3D packaging technology.

Provides a distributed data science platform that allows algorithms to travel to the data rather than moving the data itself.

Provides data clean rooms powered by confidential computing to enable secure data collaboration and model training.