Perinatal AI Fairness Audits

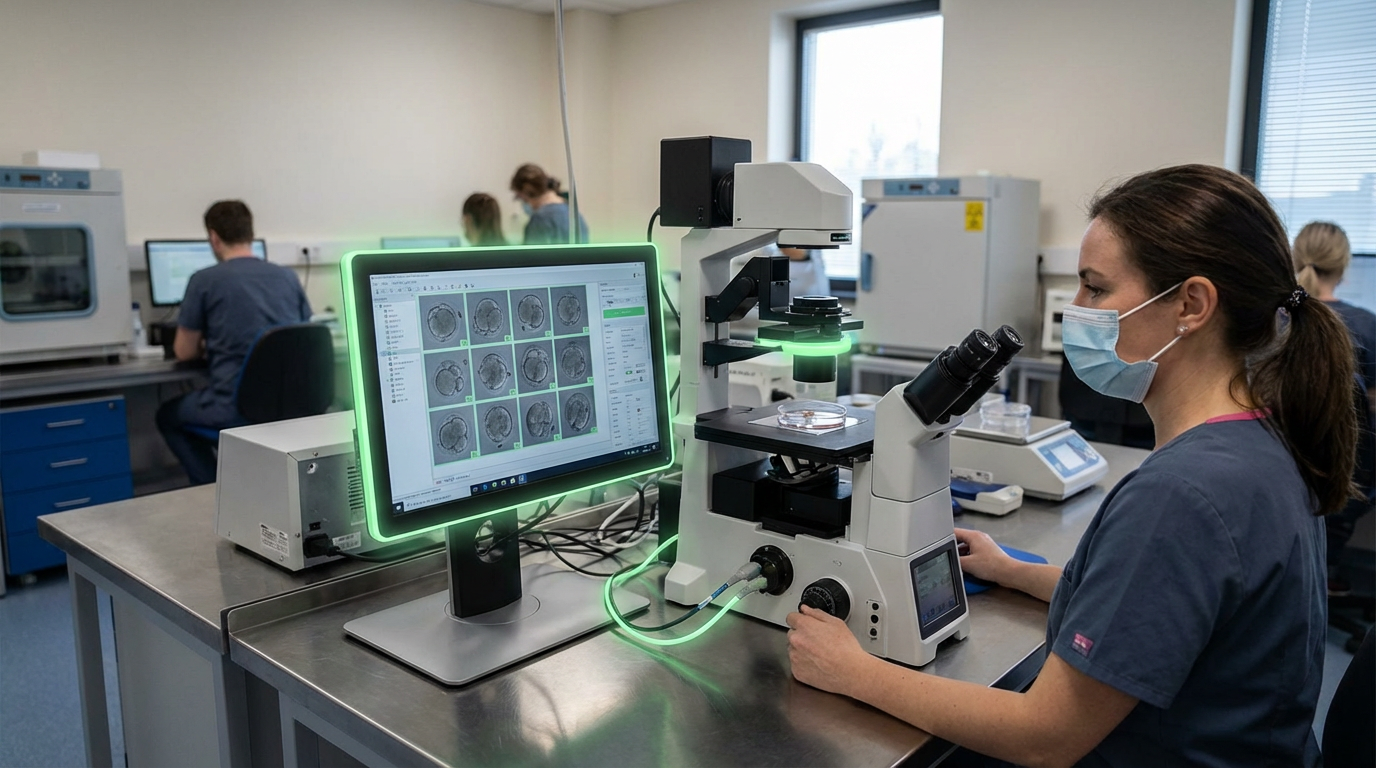

Perinatal AI fairness audits are a quality assurance framework for identifying and correcting algorithmic bias in artificial intelligence systems used in reproductive healthcare. The audits systematically examine machine learning models used for fertility prediction, pregnancy risk assessment, and neonatal care prioritization to check that they perform equitably across demographic groups. The technical approach typically involves statistical analysis of model outputs across protected characteristics such as race, ethnicity, socioeconomic status, and geographic location, comparing prediction accuracy, false positive rates, and treatment recommendations between groups. Auditing methodologies draw on established machine learning fairness metrics, including demographic parity (equal rates of positive predictions across groups), equalized odds (equal true positive and false positive rates across groups), and calibration (predicted risk matching observed outcome rates within each group), and adapt them to the clinical context of perinatal care. The process often combines retrospective analysis of historical predictions with prospective monitoring of deployed systems, creating feedback loops that can surface emerging bias patterns as patient populations and clinical practices change.
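
The group comparison described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function names `group_rates` and `max_gap`, the binary labels, and the toy data are all assumptions made for the example. It computes the per-group selection rate (the quantity compared under demographic parity) and the true and false positive rates (the quantities compared under equalized odds).

```python
import math
from collections import defaultdict


def group_rates(y_true, y_pred, groups):
    """Per-group selection rate, true positive rate, and false positive rate.

    y_true and y_pred are sequences of 0/1 labels; groups holds one
    group identifier per record. All names here are hypothetical.
    """
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for truth, pred, group in zip(y_true, y_pred, groups):
        if pred == 1:
            key = "tp" if truth == 1 else "fp"
        else:
            key = "fn" if truth == 1 else "tn"
        counts[group][key] += 1

    rates = {}
    for group, c in counts.items():
        total = c["tp"] + c["fp"] + c["tn"] + c["fn"]
        positives = c["tp"] + c["fn"]
        negatives = c["fp"] + c["tn"]
        rates[group] = {
            # Compared across groups under demographic parity.
            "selection_rate": (c["tp"] + c["fp"]) / total,
            # Compared across groups under equalized odds.
            "tpr": c["tp"] / positives if positives else float("nan"),
            "fpr": c["fp"] / negatives if negatives else float("nan"),
        }
    return rates


def max_gap(rates, metric):
    """Largest between-group difference for one metric, ignoring undefined values."""
    values = [r[metric] for r in rates.values() if not math.isnan(r[metric])]
    return max(values) - min(values)


# Toy data, invented for illustration only.
y_true = [1, 0, 1, 0, 1, 0, 1, 0]
y_pred = [1, 0, 1, 1, 0, 0, 1, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

rates = group_rates(y_true, y_pred, groups)
parity_gap = max_gap(rates, "selection_rate")
tpr_gap = max_gap(rates, "tpr")
fpr_gap = max_gap(rates, "fpr")
```

A gap of zero on `selection_rate` would correspond to exact demographic parity, and zero gaps on both `tpr` and `fpr` to exact equalized odds. What size of gap is clinically acceptable is a judgment the audit itself has to make; this sketch does not set one.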

Concern about algorithmic bias is growing in reproductive medicine, where AI systems increasingly influence decisions about fertility treatment, high-risk pregnancy management, and allocation of neonatal intensive care resources. Research suggests that training data often underrepresents minority populations and may encode historical healthcare disparities, producing models that systematically underestimate risk for certain groups or apply different intervention thresholds based on non-clinical factors. Fairness audits provide an accountability mechanism as hospitals and fertility clinics adopt AI-powered decision support tools. Standardized testing protocols and reporting frameworks help healthcare providers identify problematic algorithms before they cause harm, and they produce the documentation trail needed for regulatory compliance and medical liability management. The practice reflects growing recognition that clinical AI systems need ongoing surveillance beyond initial validation studies, particularly in domains where algorithmic errors can perpetuate or amplify existing health inequities.
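
One way to check for systematic underestimation of risk is to compare, within each group, the mean predicted risk against the observed outcome rate (sometimes called calibration-in-the-large). The sketch below assumes the model emits a probability per patient; the function name `calibration_by_group` and the toy scores are hypothetical and chosen only to show the idea.

```python
def calibration_by_group(y_true, risk_scores, groups):
    """Compare mean predicted risk with the observed event rate per group.

    A negative gap means the model underestimates risk for that group.
    """
    totals = {}
    for truth, score, group in zip(y_true, risk_scores, groups):
        n, sum_score, sum_events = totals.get(group, (0, 0.0, 0))
        totals[group] = (n + 1, sum_score + score, sum_events + truth)

    report = {}
    for group, (n, sum_score, sum_events) in totals.items():
        mean_predicted = sum_score / n
        observed_rate = sum_events / n
        report[group] = {
            "n": n,
            "mean_predicted": mean_predicted,
            "observed_rate": observed_rate,
            "gap": mean_predicted - observed_rate,
        }
    return report


# Toy data, invented for illustration only.
y_true = [0, 0, 1, 1, 0, 1, 1, 0]
risk_scores = [0.2, 0.4, 0.6, 0.8, 0.1, 0.2, 0.3, 0.2]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

report = calibration_by_group(y_true, risk_scores, groups)
```

In this toy data the mean predicted risk for group A matches its observed rate, while group B's predicted risk falls well below its observed rate, which is the underestimation pattern described above. Real audits would need far larger samples and would typically also check calibration within risk bands, which this sketch omits.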

Early implementations of perinatal AI fairness audits have appeared mainly in academic medical centers and large hospital systems with dedicated AI ethics committees, though regulatory bodies are beginning to develop formal requirements for bias testing in clinical algorithms. Some healthcare organizations now conduct quarterly fairness reviews of their pregnancy risk stratification tools, examining whether prediction errors cluster in specific patient subgroups. The practice connects to broader movements toward algorithmic accountability in healthcare and aligns with emerging regulatory frameworks in the European Union and with proposed legislation in several U.S. states that would require impact assessments for automated decision systems in sensitive domains. As AI adoption accelerates in reproductive medicine, from embryo selection algorithms to preterm birth prediction models, demand for rigorous fairness auditing will likely intensify and may evolve into mandatory certification requirements similar to those governing medical devices. The long-term trajectory points toward building fairness metrics into the standard development lifecycle for perinatal AI systems, so that bias detection becomes a routine part of responsible clinical AI deployment instead of an optional quality check.
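
A periodic review of whether prediction errors cluster in particular subgroups could, under simple assumptions, look like the sketch below. It compares each subgroup's error rate with the pooled error rate of all other subgroups using a two-proportion z-test with a Bonferroni correction. The choice of test, the correction, the function names, and the counts are all assumptions made for illustration, not a description of how any organization actually runs its reviews.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(errors_a, n_a, errors_b, n_b):
    """Two-sided z-test for a difference between two error proportions."""
    p_a = errors_a / n_a
    p_b = errors_b / n_b
    pooled = (errors_a + errors_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0
    z = (p_a - p_b) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


def quarterly_review(error_counts, alpha=0.05):
    """Flag subgroups whose error rate differs from all other subgroups pooled.

    error_counts maps subgroup name -> (number of prediction errors, records).
    The threshold is alpha divided by the number of subgroups (Bonferroni).
    """
    total_errors = sum(errors for errors, _ in error_counts.values())
    total_n = sum(n for _, n in error_counts.values())
    threshold = alpha / len(error_counts)

    flagged = {}
    for subgroup, (errors, n) in error_counts.items():
        z, p_value = two_proportion_z_test(
            errors, n, total_errors - errors, total_n - n
        )
        if p_value < threshold:
            flagged[subgroup] = {
                "error_rate": errors / n,
                "z": z,
                "p_value": p_value,
            }
    return flagged


# Hypothetical counts for one quarter, split by geographic location.
error_counts = {
    "urban": (40, 1000),
    "rural": (45, 500),
    "suburban": (30, 800),
}

flagged = quarterly_review(error_counts)
```

With these invented counts only the rural subgroup is flagged, since its error rate is well above the pooled rate of the other two. A flag of this kind is a prompt for clinical and data review, not proof of bias on its own, and small subgroups may need exact tests in place of the normal approximation used here.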

Related Organizations

Coalition for Health AI (CHAI)

United States · Consortium

A coalition of health systems, tech companies, and academic institutions establishing guidelines for credible, fair, and transparent health AI.

An organization that combines art and research to illuminate the social implications and harms of AI systems.

An NIH program, the Artificial Intelligence/Machine Learning Consortium to Advance Health Equity and Researcher Diversity (AIM-AHEAD).

A global gender data alliance working to improve the quality, availability, and use of gender data.

Interdisciplinary institute at Stanford University dedicated to guiding the future of AI.

Established the Expert Advisory Committee on Developing Global Standards for Governance and Oversight of Human Genome Editing.

Developed Derm Assist, an AI-powered tool that helps identify skin conditions and provides information on common treatments.

Long-standing leader in neuro-symbolic AI, combining neural networks with logical reasoning for enterprise applications.

A $500 million initiative by Merck to create a world where no woman has to die while giving life.

World-leading deep learning research institute, with specific research tracks led by Yoshua Bengio on 'Consciousness Priors' in AI.

A digital health platform for family building, pregnancy, and parenting (acquired by LabCorp).