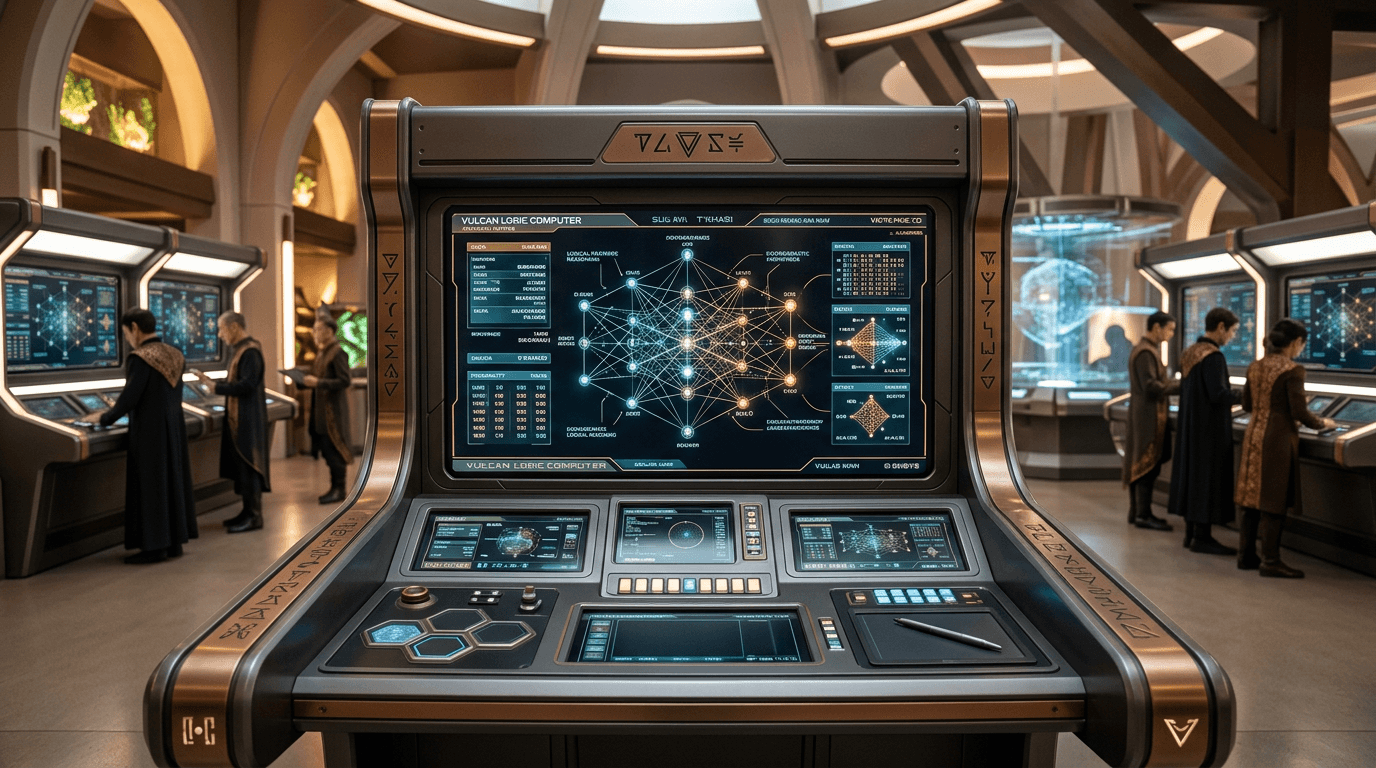

Vulcan Logic Computers

Vulcan logic computers are a speculative computing paradigm that diverges from conventional binary architectures by using probabilistic logic matrices as the computational foundation. Rather than processing information through discrete true/false states, these systems are imagined to operate on graduated probability distributions across multiple logical states simultaneously, modeling uncertainty and nuance natively, where binary systems can only do so through simulation. In theory, this allows more sophisticated handling of complex reasoning tasks, particularly those involving incomplete information, contradictory evidence, or multi-valued logic problems. The probabilistic matrices would continuously evaluate and re-weight logical propositions as data arrives, creating a computational substrate optimized for inference rather than simple calculation. This design philosophy reflects a cultural prioritization of rigorous logical analysis over raw processing speed, suggesting a civilization that values precision in reasoning above computational throughput.
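The re-weighting of propositions described above can be approximated on ordinary hardware with a Bayesian update. The following is a minimal sketch only: the three state names, the `reweight` helper, and the likelihood values are all assumptions made for illustration and are not drawn from any canonical description of these systems.

```python
def reweight(belief, likelihood):
    """Bayes update: posterior is proportional to prior * likelihood."""
    unnormalized = {state: belief[state] * likelihood[state] for state in belief}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("evidence is impossible under every state")
    return {state: weight / total for state, weight in unnormalized.items()}


# Hypothetical proposition with three logical states and a uniform prior.
belief = {"true": 1 / 3, "false": 1 / 3, "indeterminate": 1 / 3}

# Hypothetical evidence: how likely each observation is under each state.
evidence_stream = [
    {"true": 0.8, "false": 0.1, "indeterminate": 0.5},
    {"true": 0.6, "false": 0.3, "indeterminate": 0.5},
]

for likelihood in evidence_stream:
    belief = reweight(belief, likelihood)

for state, probability in belief.items():
    print(f"{state}: {probability:.3f}")
```

Each observation shifts weight toward the states that explain it best while keeping the distribution normalized, so the proposition is never forced into a single true/false value.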

Within science fiction narratives, particularly those exploring advanced civilizations with distinct technological philosophies, Vulcan computing systems serve as a counterpoint to more familiar AI-driven architectures. They are depicted with minimal autonomous decision-making, reflecting a narrative theme in which logical beings maintain strict control over their computational tools and avoid the emergence of independent machine intelligence. This design choice appears in scenarios examining how cultural values might shape technological development: a society that prizes conscious logical thought would naturally create computing systems that augment rather than replace deliberative reasoning. The concept also intersects with real-world research into probabilistic computing, quantum logic gates, and non-binary computational models, though these remain largely experimental. The extreme reliability required of these systems suggests redundancy architectures and formal verification methods that ensure computational outputs can be trusted for critical decision-making, a concern increasingly relevant in contemporary discussions about AI safety and algorithmic accountability.

From a plausibility standpoint, probabilistic logic systems face significant practical challenges that current technology has not overcome. While research into probabilistic computing exists, particularly in specialized applications like Bayesian inference accelerators and certain neural network implementations, creating general-purpose computers based on probabilistic logic matrices would require fundamental breakthroughs in hardware design, error correction, and programming paradigms. The notion that such systems would inherently resist autonomous AI development is questionable, as intelligence emergence depends more on architecture and training than on the underlying logical substrate. However, the core concept of non-binary computing finds some validation in quantum computing research and fuzzy logic systems, both of which explore computational models beyond classical binary states. For Vulcan-style computers to become plausible, advances would be needed in stable multi-state logic gates, probabilistic circuit design, and methods for programming systems that reason with uncertainty as a native operation rather than a simulated one.
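To make the contrast between native and simulated uncertainty concrete, the sketch below simulates fuzzy logic in software using Zadeh's standard operators (minimum for AND, maximum for OR, complement for NOT). This is one common choice among several operator families, and the function names are hypothetical. It illustrates how truth values between 0 and 1 behave, not how a multi-state logic gate would be built.

```python
def fuzzy_not(a: float) -> float:
    """Complement: degree to which the proposition is not true."""
    return 1.0 - a


def fuzzy_and(a: float, b: float) -> float:
    """Zadeh conjunction: a conjunction is only as true as its weakest part."""
    return min(a, b)


def fuzzy_or(a: float, b: float) -> float:
    """Zadeh disjunction: a disjunction is as true as its strongest part."""
    return max(a, b)


# At the extremes the operators reduce to classical Boolean logic.
print(fuzzy_and(1.0, 0.0), fuzzy_or(1.0, 0.0))

# In between, the law of the excluded middle no longer yields certainty:
# "x or not x" is only half true when x is half true.
x = 0.5
print(fuzzy_or(x, fuzzy_not(x)))
```

Here every graded truth value is still stored and compared as a binary floating-point number, which is the simulated approach the paragraph above distinguishes from reasoning with uncertainty as a native operation.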