Varifocal Displays

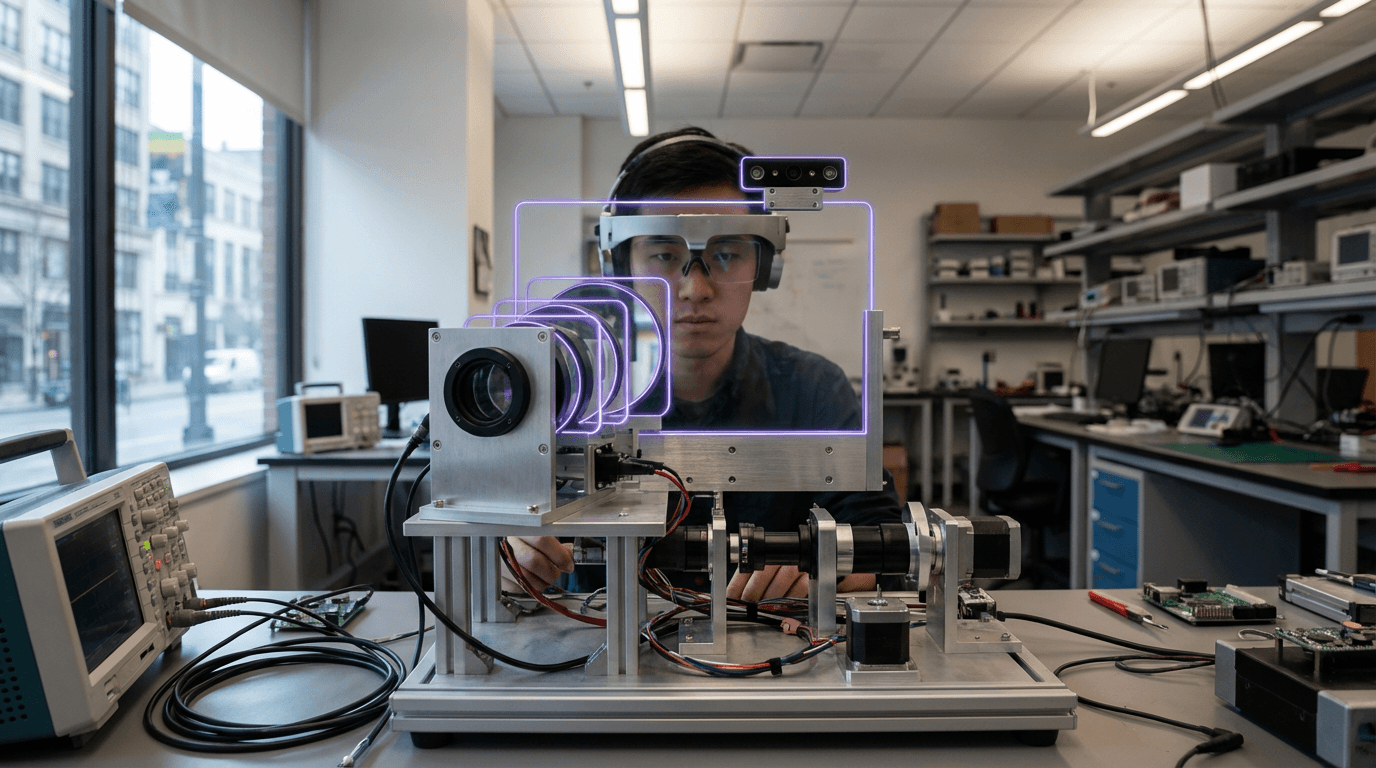

Varifocal displays are near-eye optical systems designed to address one of the most persistent problems in virtual and augmented reality: the vergence-accommodation conflict. In natural vision, when we look at objects at different distances, our eyes rotate to converge on the target (vergence) and change the shape of their lenses to bring that distance into sharp focus (accommodation), and the two responses are neurally coupled. Conventional stereoscopic displays in XR headsets break this coupling by presenting images at a fixed optical distance while simulating depth through binocular disparity alone. The eyes must converge on virtual objects at varying apparent distances while the lenses stay focused on a single focal plane, often around one to two meters away. Varifocal displays reduce this conflict by moving the focal plane: they either physically adjust the distance between the display panel and the lens, or use tunable optical elements such as liquid crystal lenses, deformable membrane mirrors, or Alvarez lenses. The focal plane is shifted in real time, driven by eye tracking that estimates where the user is looking, so that accommodation and vergence cues stay aligned at the fixated depth.
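The control loop described above (estimate fixation depth from eye tracking, then move the focal plane to match) can be sketched in a few lines. This is a minimal illustration under simplifying assumptions, not any vendor's implementation: it assumes symmetric convergence straight ahead, an ideal thin lens, and a mechanically translated panel. All function names are hypothetical, and the 63 mm interpupillary distance and 40 mm focal length in the usage note are illustrative values.

```python
import math


def vergence_distance_m(ipd_m: float, vergence_angle_rad: float) -> float:
    """Fixation distance for symmetric convergence.

    vergence_angle_rad is the angle between the two eyes' gaze rays.
    Parallel (or diverging) rays are treated as fixation at infinity.
    """
    if vergence_angle_rad <= 0.0:
        return math.inf
    return (ipd_m / 2.0) / math.tan(vergence_angle_rad / 2.0)


def focal_demand_diopters(distance_m: float) -> float:
    """Accommodation demand in diopters (1/m); infinity maps to 0 D."""
    return 0.0 if math.isinf(distance_m) else 1.0 / distance_m


def vac_mismatch_diopters(vergence_dist_m: float, focal_dist_m: float) -> float:
    """Vergence-accommodation mismatch: difference between the two demands."""
    return abs(focal_demand_diopters(vergence_dist_m)
               - focal_demand_diopters(focal_dist_m))


def panel_distance_m(focal_length_m: float, demand_diopters: float) -> float:
    """Panel-to-lens distance that places the virtual image at the demand.

    Thin-lens magnifier relation: 1/d_panel - 1/d_image = 1/f, hence
    d_panel = 1 / (1/f + demand). At 0 D the panel sits at the focal length.
    """
    return 1.0 / (1.0 / focal_length_m + demand_diopters)


def varifocal_step(ipd_m: float, vergence_angle_rad: float,
                   focal_length_m: float) -> float:
    """One control step: gaze vergence in, target panel distance out."""
    distance = vergence_distance_m(ipd_m, vergence_angle_rad)
    return panel_distance_m(focal_length_m, focal_demand_diopters(distance))
```

With these assumptions, a fixed-focus headset set to 2 m showing an object at 0.5 m has a mismatch of `vac_mismatch_diopters(0.5, 2.0)`, which is 1.5 D. For a 40 mm lens, moving the virtual image from 2 m to 0.5 m changes `panel_distance_m` by only about 2.2 mm, which suggests why small, fast actuators or tunable lenses are the focus of the engineering effort. A real system would also need to filter noisy gaze estimates and respect actuator limits, which this sketch omits.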

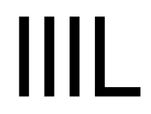

The implications extend beyond user comfort. The vergence-accommodation conflict has been a major barrier to extended XR use, contributing to visual fatigue, headaches, and nausea that can cut sessions well short of the sustained periods professional applications require. Industries that rely on immersive visualization, including medical training, architectural design review, industrial maintenance, and remote collaboration, have been constrained by these physiological limits. Varifocal displays aim to let practitioners work in virtual environments for longer with less of the cumulative eye strain associated with fixed-focus systems. They also support more convincing depth perception, which matters for tasks requiring precise spatial judgment, such as surgical simulation or complex assembly procedures. Because a varifocal system places its single focal plane at the depth the user is fixating, rather than supplying focus cues for every depth at once, blur for content at other depths generally has to be rendered in software. With correct focus at the point of gaze, users spend less effort interpreting artificial depth information and can interact with virtual content more as they would with physical objects.

Research prototypes from major technology companies and academic institutions have demonstrated various varifocal implementations, though commercial availability remains limited to high-end enterprise applications and specialized research equipment. Early deployments indicate particular promise in professional training environments, where visual accuracy and comfort over long sessions justify the additional hardware complexity and cost. The technology faces engineering challenges, including the speed of focal adjustment, the mechanical reliability of moving optical components, and integration into lightweight headset designs suitable for consumer markets. However, as eye tracking becomes standard in XR headsets and manufacturing techniques for adaptive optics mature, varifocal displays are positioned to become a foundational element of next-generation immersive systems. This evolution aligns with broader industry trends toward perceptually accurate rendering and physiologically compatible display technologies, suggesting that future XR devices will increasingly prioritize optical fidelity matched to human visual capabilities rather than simply maximizing resolution or field of view.

Related Organizations

Developing light-field display technology primarily for AR glasses but applicable to direct-view panels.

Develops the Quest Pro and research prototypes (Butterscotch, Starburst) focusing on foveated systems.

Academic lab led by Gordon Wetzstein researching computational light field displays and near-eye optics.

Develops multi-focal AR headsets using rapid switching liquid crystal screens to create physical depth planes.

AR headset manufacturer utilizing dynamic dimming and eye-tracking for optimized rendering.

Developing foundation models for robotics (Project GR00T) and vision-language models like VILA.

Software and IP licensing company specializing in computer-generated holography (CGH) for displays and AR.

R&D company focusing on tracked holographic 3D displays, with significant investment from Volkswagen.

Fabless semiconductor company developing 'Holographic eXtended Reality' (HXR) chips based on proprietary Phase Change Material (PCM) technology with pixel pitches under 300nm.

Led the OSIRIS-REx mission, developing techniques for mapping, prospecting, and sampling asteroid Bennu.