Ultrasonic Haptics

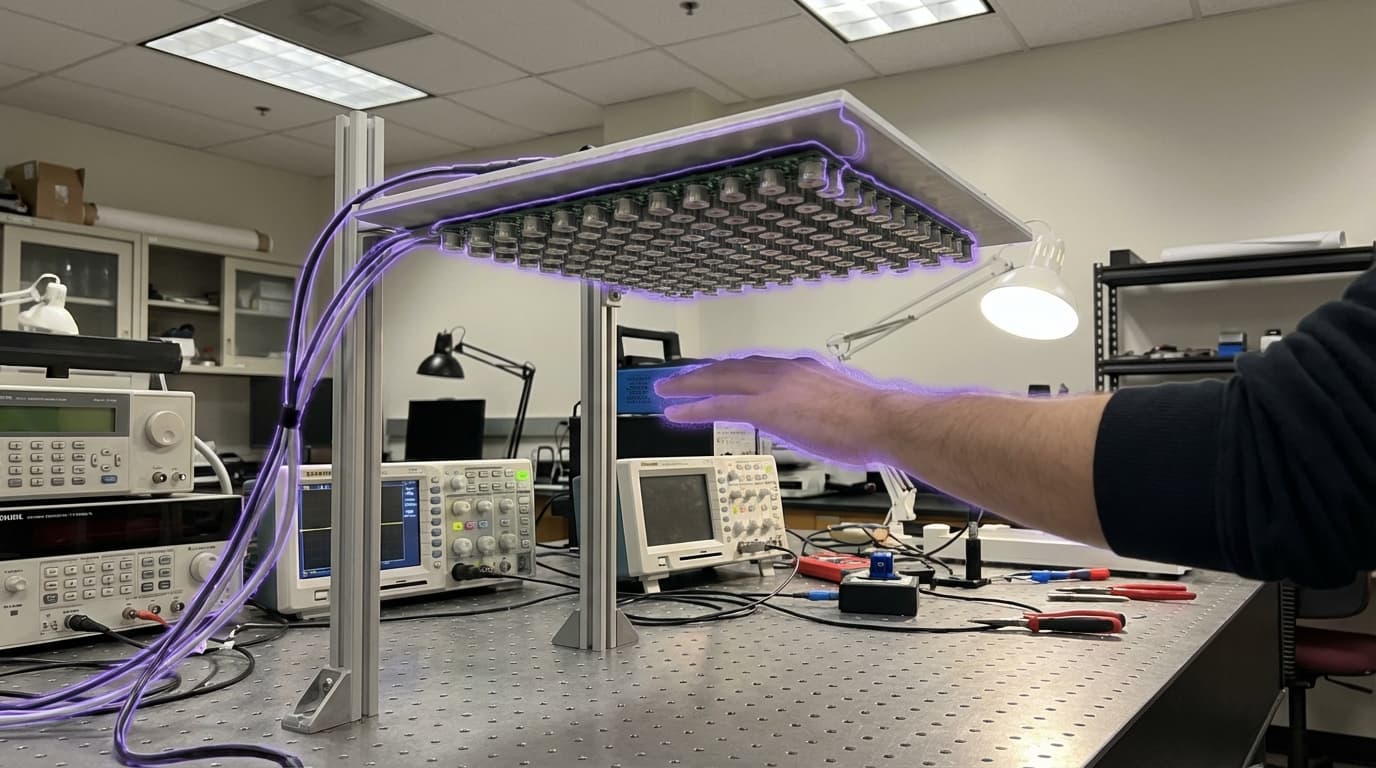

Ultrasonic haptics is a human-computer interaction technology that generates tactile sensations in mid-air by precisely manipulating acoustic radiation pressure. The technology employs phased arrays of ultrasonic transducers, typically operating at frequencies around 40 kHz (well above the range of human hearing), whose emitted sound waves can be focused and steered in three-dimensional space. When these acoustic waves converge at a focal point, they create localised pressure on the skin that stimulates its mechanoreceptors, producing sensations that users perceive as touch, texture, or resistance. Unlike traditional haptic systems that require physical contact with controllers, gloves, or screens, ultrasonic haptics delivers feedback directly to bare skin in free space. The system works by modulating the amplitude and phase of individual transducers in the array, allowing precise control over the location, intensity, and pattern of the haptic sensation. This creates what researchers describe as "touchable holograms": invisible force fields that can simulate the feel of buttons, switches, textures, or even the contours of virtual objects suspended in air.
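The phase control described above can be illustrated with a minimal sketch. It assumes an idealised model in which every transducer is a point source of equal amplitude, and it ignores attenuation and directivity. The 8 x 8 grid, the 10.5 mm pitch, the speed of sound, and all function names are assumptions chosen for illustration, not the parameters or API of any particular product.

```python
import cmath
import math

SPEED_OF_SOUND = 343.0   # m/s in air at roughly 20 degrees C (assumed)
FREQUENCY = 40_000.0     # Hz, the carrier frequency mentioned in the text
WAVELENGTH = SPEED_OF_SOUND / FREQUENCY
WAVENUMBER = 2 * math.pi / WAVELENGTH


def grid_positions(n=8, pitch=0.0105):
    """Centres of an n x n transducer grid in the z = 0 plane (hypothetical layout)."""
    offset = (n - 1) * pitch / 2
    return [(i * pitch - offset, j * pitch - offset, 0.0)
            for i in range(n) for j in range(n)]


def focus_phases(positions, focal_point):
    """Phase offset (radians) per transducer so that all waves arrive in phase
    at focal_point: each offset cancels that transducer's propagation delay."""
    return [(WAVENUMBER * math.dist(p, focal_point)) % (2 * math.pi)
            for p in positions]


def relative_pressure(positions, phases, point):
    """Magnitude of the summed unit-amplitude waves at point.

    Idealised: no attenuation with distance and no transducer directivity."""
    total = sum(cmath.exp(1j * (phi - WAVENUMBER * math.dist(p, point)))
                for p, phi in zip(positions, phases))
    return abs(total)


if __name__ == "__main__":
    array = grid_positions()
    focus = (0.0, 0.0, 0.15)            # 15 cm above the array centre
    phases = focus_phases(array, focus)
    print("at focus: ", relative_pressure(array, phases, focus))
    print("3 cm away:", relative_pressure(array, phases, (0.03, 0.0, 0.15)))
```

In this simplified model the contributions add fully constructively at the focal point, so the relative pressure there equals the number of transducers, while a point a few centimetres away receives waves with mismatched phases that largely cancel. Moving the focus only requires recomputing the phase offsets, which is what allows the sensation to be steered without moving the hardware.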

The primary appeal of this technology lies in its ability to solve critical challenges in touchless interaction design, particularly in contexts where physical contact is undesirable or impractical. In automotive applications, ultrasonic haptics addresses the safety concerns of traditional touchscreens by providing tactile confirmation without requiring drivers to look away from the road: users can feel virtual buttons and controls while keeping their eyes forward. For public installations and shared interfaces, the technology eliminates hygiene concerns associated with frequently touched surfaces, a consideration that has gained prominence in post-pandemic design thinking. In medical settings, ultrasonic haptics enables surgeons and technicians to interact with imaging systems and controls without breaking sterile fields. The technology also opens new possibilities for accessibility, allowing visually impaired users to explore spatial information and navigate interfaces through touch, which screen readers cannot offer. Industry analysts note growing interest from automotive manufacturers and consumer electronics companies seeking to differentiate their products through novel interaction paradigms.

Early commercial deployments have emerged in automotive dashboards, museum exhibits, and specialised industrial control systems, with several technology companies demonstrating prototype implementations at industry conferences. Research institutions continue to refine the technology's resolution and intensity range, exploring applications in virtual reality environments where mid-air haptics could complement visual displays without the encumbrance of gloves or handheld controllers. The technology aligns with broader trends toward ambient computing and natural user interfaces, where digital interactions become more seamless and less dependent on physical intermediaries. As the cost of ultrasonic transducer arrays decreases and software tools for designing haptic experiences mature, ultrasonic haptics is positioned to become an increasingly common feature in next-generation interfaces. The convergence of this technology with spatial computing platforms and gesture recognition systems suggests a future where tactile feedback becomes as ubiquitous in digital interactions as visual and auditory feedback are today, fundamentally reshaping how humans perceive and manipulate information in both physical and virtual spaces.

Related Organizations

The world leader in mid-air haptics and hand tracking, formed from the merger of Ultrahaptics and Leap Motion.

Consumer hardware startup creating the Emerge Wave-1, a device using ultrasound to create tactile sensations for VR/AR without gloves.

A leading research partner for JAXA, developing instruments and analyzing returned samples.

Public research university known for the Bristol Interaction Group.

The Soft Machines Lab at CMU develops soft multifunctional materials and robots.

Investigates soft robotics for safe human-robot interaction and expressive animatronics.

Produces small solid-state batteries for wearable and medical devices.

Industry consortium dedicated to standardizing and promoting haptic technologies.