Retrieval-Augmented Generation (RAG)

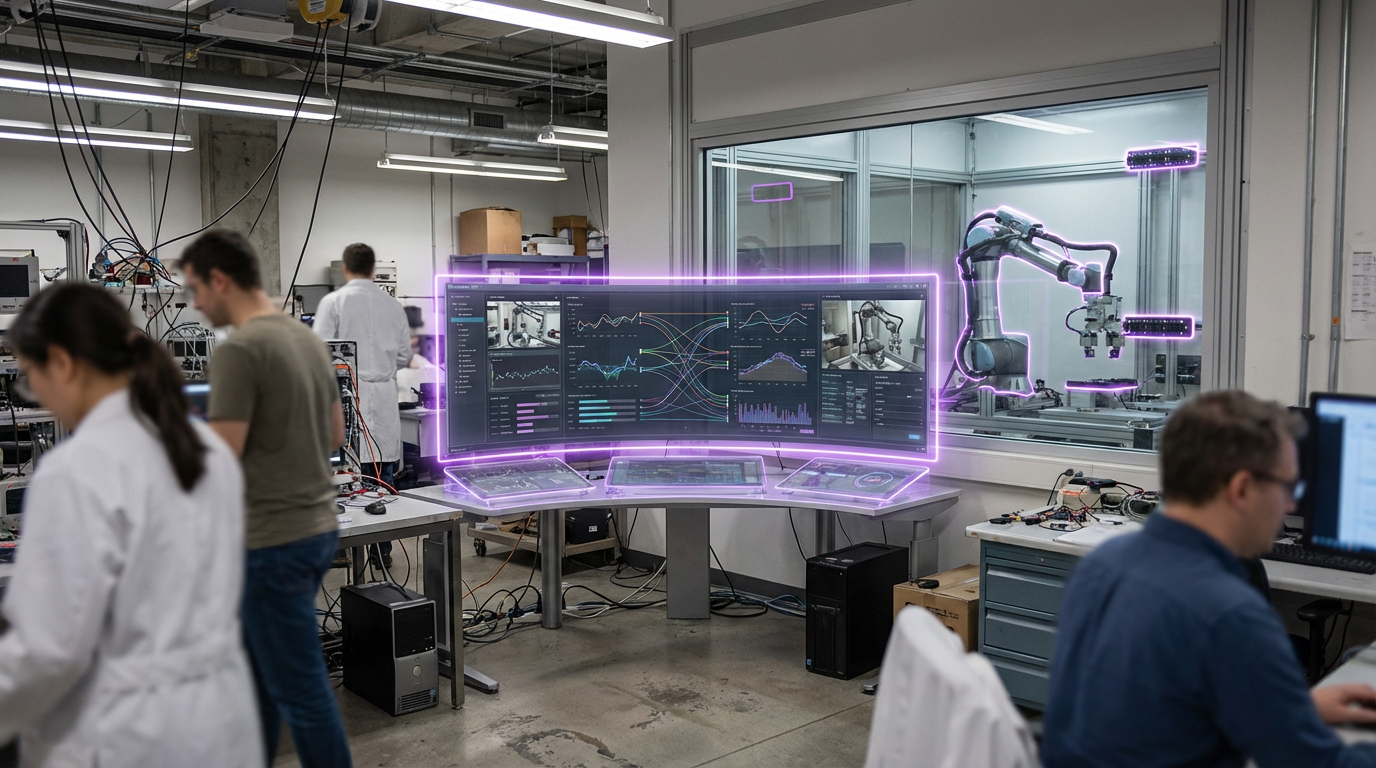

Retrieval-Augmented Generation (RAG) combines large language models with external knowledge retrieval systems to produce more accurate, up-to-date, and contextually relevant outputs. Instead of relying solely on what the model learned during training, a RAG system first retrieves relevant information from external databases, documents, or knowledge bases, then passes that information to the generative model to inform its response. This approach addresses several known limitations of LLMs, including training data cutoff dates, lack of access to proprietary information, and a tendency to hallucinate facts.
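The retrieve-then-generate flow described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a production design: the three documents are made up, retrieval uses a simple bag-of-words cosine similarity instead of learned embeddings, and the final call to a language model is left out, so the sketch stops at the prompt that would be sent to it. The names `retrieve` and `build_prompt` are hypothetical helpers.

```python
import math
import re
from collections import Counter

# Toy knowledge base; these documents are invented for illustration.
DOCUMENTS = [
    "The refund policy allows returns within 30 days of purchase.",
    "Our premium plan includes priority support and a 99.9% uptime SLA.",
    "Standard shipping to Europe usually takes about one week.",
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two term-count vectors (Counters)."""
    dot = sum(count * b[term] for term, count in a.items() if term in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query, documents, k=2):
    """Return up to k documents ranked by similarity to the query."""
    query_vec = Counter(tokenize(query))
    scored = [(cosine(query_vec, Counter(tokenize(d))), d) for d in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    # Drop documents that share no terms with the query.
    return [doc for score, doc in scored[:k] if score > 0]


def build_prompt(query, passages):
    """Combine retrieved passages and the question into one prompt."""
    context = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return (
        "Answer using only the numbered sources below and cite them.\n\n"
        f"Sources:\n{context}\n\nQuestion: {query}\nAnswer:"
    )


query = "How many days do I have to return a purchase?"
passages = retrieve(query, DOCUMENTS, k=2)
prompt = build_prompt(query, passages)
print(prompt)  # This prompt would then be sent to a language model.
```

Numbering the passages in the prompt is one simple way to let the model cite its sources. A real system would typically replace the word-count similarity with embedding-based search over a vector index.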

The technology enables AI systems that can access current information, use proprietary or domain-specific data, and cite their sources. RAG systems can query databases, search documents, call APIs, and incorporate real-time information into their responses. Applications include financial analysis systems that query market data and reports, research assistants that search academic papers, customer service systems that access product databases, and compliance tools that reference regulations and policies. Organizations are integrating RAG into these kinds of applications to improve the accuracy and relevance of model output.

At TRL 5 (Technology Readiness Level 5), RAG systems are being deployed in a range of applications, but retrieval quality, integration complexity, and accurate use of retrieved information remain areas of active development. Open challenges include retrieving the most relevant information, ensuring the model incorporates it accurately, managing large knowledge bases efficiently, and balancing retrieval scope against response quality. As retrieval techniques improve and integration becomes easier, RAG is likely to become more valuable. The technology could change how AI systems access and use information, making them more accurate and trustworthy in professional and specialized applications where current data and domain-specific knowledge are critical.
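One common way to quantify the retrieval-quality challenge mentioned above is recall at k: the fraction of known-relevant documents that appear among the top k results. The sketch below is illustrative only. The ranked list and the relevance labels are hypothetical, and `recall_at_k` is a helper written for this example rather than a function from any particular library.

```python
def recall_at_k(retrieved_ids, relevant_ids, k):
    """Fraction of the relevant documents that appear in the top-k results."""
    if not relevant_ids:
        return 0.0
    top_k = set(retrieved_ids[:k])
    return len(top_k & set(relevant_ids)) / len(relevant_ids)


# Hypothetical ranked retriever output and hand-labeled relevant documents.
ranked = ["doc7", "doc2", "doc9", "doc4", "doc1"]
relevant = {"doc2", "doc4"}

for k in (1, 3, 5):
    print(f"recall@{k} = {recall_at_k(ranked, relevant, k)}")
```

Raising k improves recall in this example, but it also puts more passages in front of the generator, some of them irrelevant. That is the trade-off between retrieval scope and response quality.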

Related Organizations

Develops the leading open-source framework for orchestrating LLMs and retrieval systems.

United States · Startup

Building 'RAG 2.0' systems that are end-to-end optimized for groundedness and attribution.

United States · Startup

Data framework for connecting custom data sources to large language models.

Provides a managed vector database optimized for high-performance AI similarity search.

Enterprise AI platform focusing on secure and aligned language models.

United States · Startup

Offers a 'RAG-as-a-Service' platform, providing an end-to-end solution for embedding, retrieval, and generation.

Germany · Startup

Creators of Haystack, an open-source framework specifically designed for building RAG pipelines.

Enterprise AI search platform that connects to internal apps to provide RAG-based answers.

Open-source vector search engine with out-of-the-box modules for vectorization and RAG.

Maintainers of Milvus, an open-source vector database for scalable similarity search.

Provides ETL tools to ingest and preprocess complex documents for LLM usage.