Multimodal Affective Computing

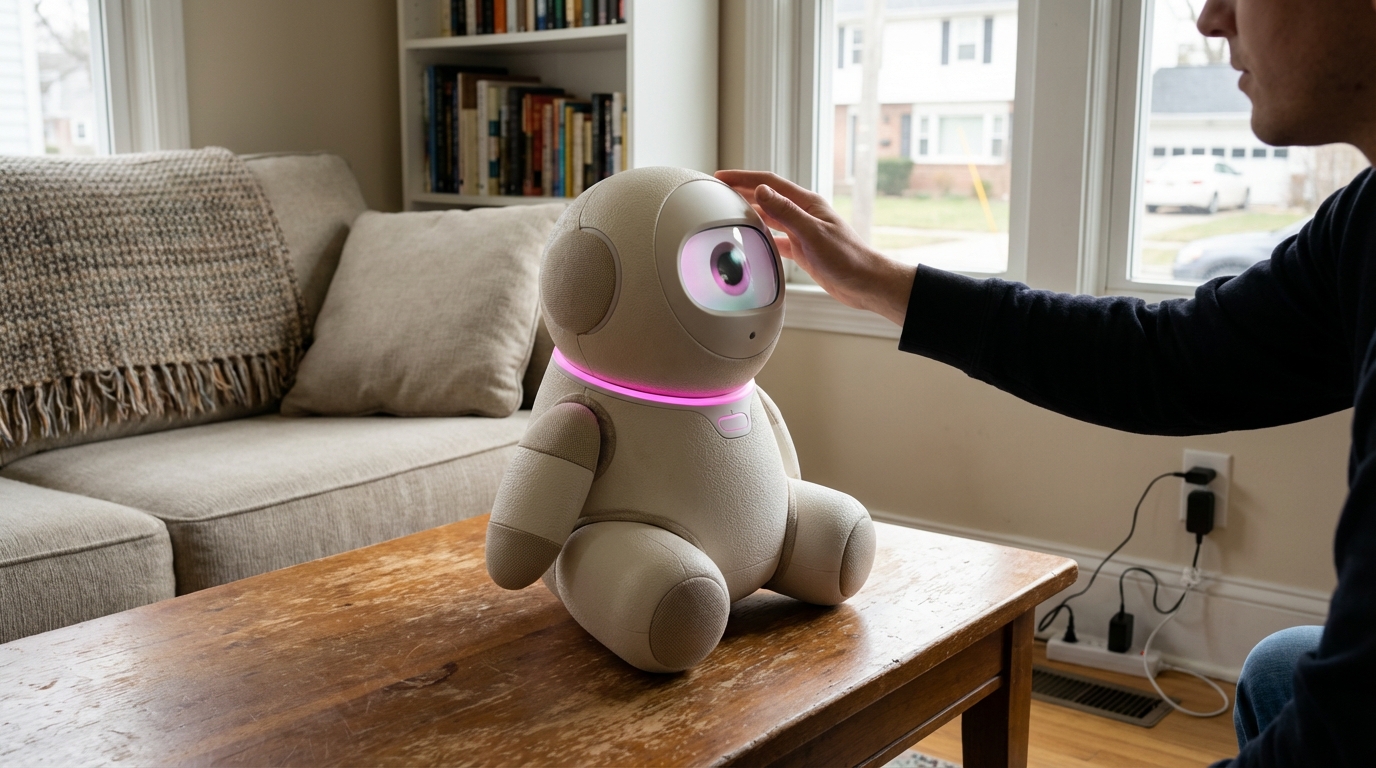

Multimodal affective computing enables machines to perceive and respond to a broader range of human emotional expression than single-channel systems can. Whereas traditional emotion recognition systems might analyze only facial expressions or voice tone in isolation, multimodal systems integrate several data streams simultaneously, including vocal prosody, facial micro-expressions, physiological signals such as heart rate variability, body language, and linguistic content, to build a more nuanced and accurate picture of emotional state. The technical foundation is deep learning architectures that process these heterogeneous data types in parallel, using sensor fusion techniques to reconcile potentially conflicting signals and temporal analysis to track emotional trajectories over time. Advanced implementations employ attention mechanisms that weight modalities by context: vocal cues may be more reliable in phone conversations, while facial expressions dominate in video interactions.
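To make the idea of context-dependent modality weighting concrete, here is a minimal sketch of attention-style late fusion in plain Python. It is an illustration under stated assumptions, not a description of any particular product: the emotion labels, the per-modality probabilities, the context relevance scores, and the function names `fuse` and `top_emotion` are all hypothetical. A real system would learn the relevance scores from data and would use neural encoders rather than hand-written numbers.

```python
import math

# Hypothetical label set, chosen only for illustration.
EMOTIONS = ["joy", "stress", "frustration", "neutral"]


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def fuse(predictions, relevance):
    """Attention-style late fusion of per-modality emotion distributions.

    predictions: modality name -> probability distribution over EMOTIONS
    relevance:   modality name -> raw context relevance score
                 (assumed here; a trained model would produce these)
    Returns (fused distribution, per-modality weights).
    """
    names = sorted(predictions)
    weights = dict(zip(names, softmax([relevance[n] for n in names])))
    fused = [
        sum(weights[n] * predictions[n][i] for n in names)
        for i in range(len(EMOTIONS))
    ]
    return fused, weights


# Made-up per-modality outputs that deliberately conflict:
# the voice suggests stress while the face suggests joy.
predictions = {
    "voice": [0.10, 0.60, 0.20, 0.10],
    "face":  [0.50, 0.10, 0.10, 0.30],
    "text":  [0.20, 0.30, 0.30, 0.20],
}

# Hypothetical context scores. On a phone call there is no usable video,
# so the face channel is scored far below the voice channel.
PHONE_CALL = {"voice": 2.0, "face": -4.0, "text": 0.5}
VIDEO_CALL = {"voice": 0.5, "face": 2.0, "text": 0.0}


def top_emotion(context):
    """Return the label with the highest fused probability."""
    fused, _ = fuse(predictions, context)
    return EMOTIONS[fused.index(max(fused))]


if __name__ == "__main__":
    for label, context in [("phone", PHONE_CALL), ("video", VIDEO_CALL)]:
        fused, weights = fuse(predictions, context)
        print(label, top_emotion(context), [round(p, 3) for p in fused])
```

With these made-up inputs, the same three per-modality predictions resolve to "stress" in the phone context and to "joy" in the video context, because the softmax shifts almost all of the weight to whichever channel the context marks as reliable. The sketch covers only the fusion step; tracking emotional trajectories over time would require an additional temporal model.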

The relationship technology sector has long struggled with the emotional flatness of digital communication: text-based and even video platforms cannot fully capture the rich emotional context of face-to-face human connection. This limitation has contributed to widespread misunderstandings in digital relationships, reduced empathy in online interactions, and difficulty providing effective remote mental health support. Multimodal affective computing addresses these challenges by giving relationship-focused applications the capacity to detect when users are experiencing stress, frustration, joy, or subtle emotional shifts that might otherwise go unnoticed. This capability enables new forms of emotionally intelligent mediation in conflict resolution platforms, allows dating applications to base match recommendations on observed emotional compatibility rather than stated preferences alone, and helps mental health applications identify early warning signs of depression or anxiety that users might not explicitly report.

Early deployments of this technology are already appearing in therapeutic chatbots that adjust their conversational strategies based on detected emotional states, and in customer service platforms that route calls to human agents when automated systems detect high emotional distress. Research initiatives are exploring applications in couples therapy tools that can identify patterns of emotional dysregulation during arguments, and in long-distance relationship platforms that provide partners with richer emotional context during video calls. The technology shows particular promise in accessibility contexts, helping individuals with conditions like alexithymia or autism spectrum disorders better understand their own emotional states through objective feedback. As the technology matures, industry observers note a trajectory toward more sophisticated emotional intelligence in relationship platforms, though significant challenges remain around privacy concerns, the risk of emotional manipulation, and ensuring that automated empathy genuinely serves users rather than merely simulating care to drive engagement metrics.

Related Organizations

Home of the Affective Computing research group led by Rosalind Picard.

Developing an Empathic Voice Interface (EVI) that detects and responds to human emotion.

A leader in driver monitoring systems that acquired Affectiva, the pioneer of Emotion AI.

A spin-off from TU Munich specializing in audio analysis and speech emotion recognition.

Develops FaceReader, a widely used software tool for automated analysis of facial expressions in scientific research.

Uses webcams to measure attention and emotion in response to video advertising.

An Emotion AI platform combining facial coding, eye tracking, and brainwave mapping (EEG).

Creates autonomously animated 'Digital People' with simulated nervous systems.