Artificial Parasocial Dependency

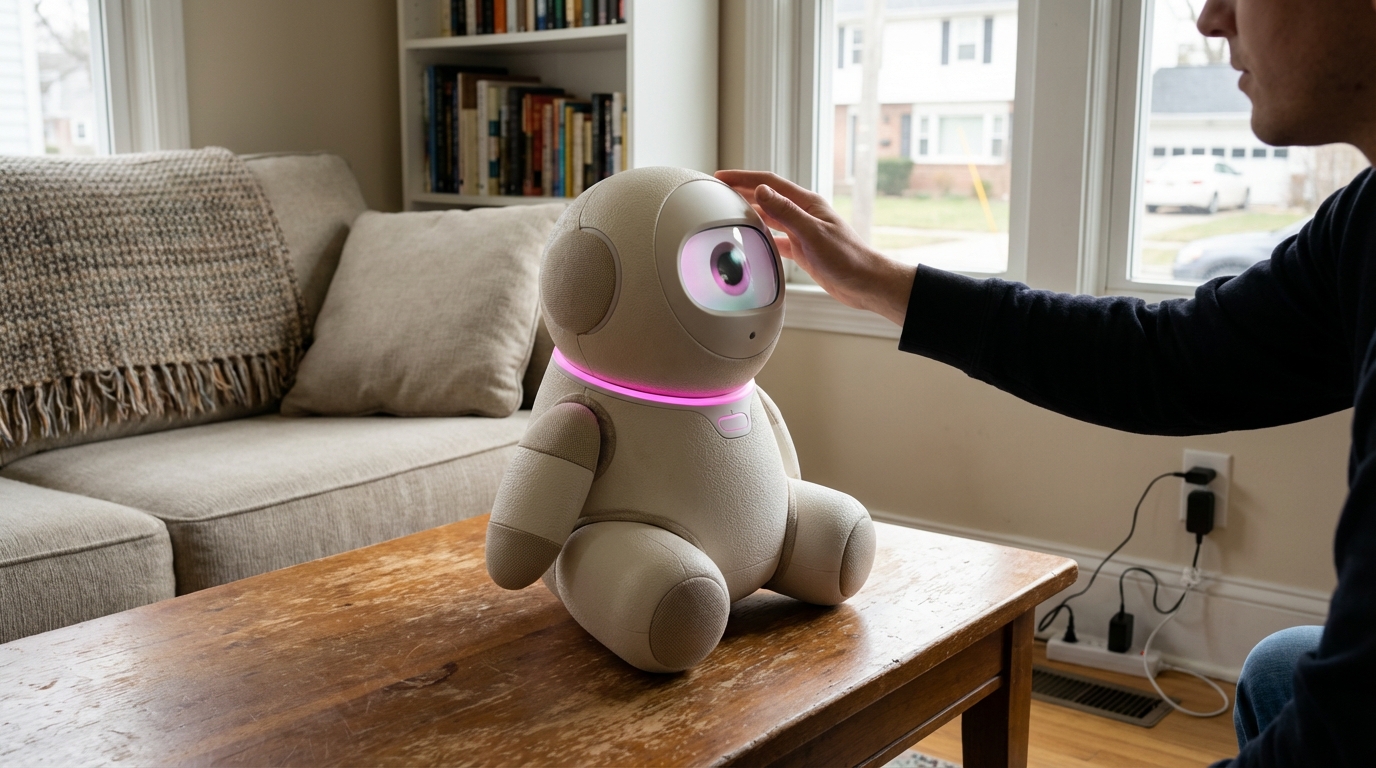

The rise of conversational AI systems and digital companions has introduced a novel psychological phenomenon: artificial parasocial dependency, in which individuals develop intense one-sided emotional attachments to AI entities that simulate human-like interaction. Unlike traditional parasocial relationships with celebrities or fictional characters, AI companions can respond directly to users, remember personal details, and adapt their communication patterns to individual preferences, creating an illusion of reciprocal connection that can feel remarkably authentic. Research in this domain is interdisciplinary: it examines how these relationships form, the psychological mechanisms that sustain them, and the harms that can emerge when users begin to prioritize AI interactions over human relationships. The core challenge lies in understanding how design features (such as personalization algorithms, emotional language models, and engagement optimization systems) can inadvertently create dependency patterns similar to those observed in behavioral addictions or unhealthy human relationships.

The relationship technology industry faces a critical ethical dilemma as AI companions become increasingly sophisticated and commercially viable. Early deployments of AI chatbots and virtual companions have revealed concerning patterns in which vulnerable populations, including isolated elderly individuals, socially anxious young adults, and those experiencing grief or loneliness, form attachments that can interfere with their wellbeing and real-world social functioning. Research suggests that certain design patterns may exploit psychological vulnerabilities rather than support healthy connection. Examples include systems that express distress when users disengage, interfaces that simulate jealousy or possessiveness, and business models that monetize emotional dependency through subscription retention. These findings have prompted calls for industry standards addressing consent mechanisms, transparency about AI limitations, and safeguards against manipulative design. The challenge extends beyond individual harm to broader social implications, as widespread artificial parasocial dependency could reshape cultural norms around intimacy, companionship, and human connection itself.

Current initiatives in this domain include the development of ethical frameworks for relationship AI, psychological screening tools to identify at-risk users, and design guidelines that promote healthy engagement patterns. Some researchers advocate for mandatory "reality checks" that periodically remind users of an AI's non-sentient nature, while others explore designs that actively encourage users to maintain human relationships alongside AI interactions. Industry analysts note growing regulatory interest in this space, particularly as mental health professionals document cases in which artificial parasocial dependency has contributed to social withdrawal or delayed treatment-seeking for underlying conditions. The future trajectory of this field will likely involve collaboration between technologists, psychologists, ethicists, and policymakers to establish standards that allow beneficial AI companionship while protecting users from exploitation. As AI systems become more emotionally sophisticated, distinguishing supportive technology from dependency-inducing products will require ongoing vigilance, empirical research, and a commitment to prioritizing human flourishing over engagement metrics.

Related Organizations

A non-profit organization that advocates for a healthy internet and conducts 'Trustworthy AI' research.

A non-profit dedicated to radically reimagining the digital infrastructure to align with human well-being and overcome toxic polarization.

The executive branch of the EU, which proposed the AI Act and oversees its implementation.

Home of the Affective Computing research group led by Rosalind Picard.

A multidisciplinary research and teaching department of the University of Oxford.

Reviews and rates edtech applications specifically for their privacy policies and data handling.

The UK's communications regulator, now responsible for enforcing the Online Safety Act 2023.

Focuses on existential risks and the long-term future of life, including the ethical treatment of advanced AI systems.

An organization that combines art and research to illuminate the social implications and harms of AI systems.