Edge AI Offline Assistants

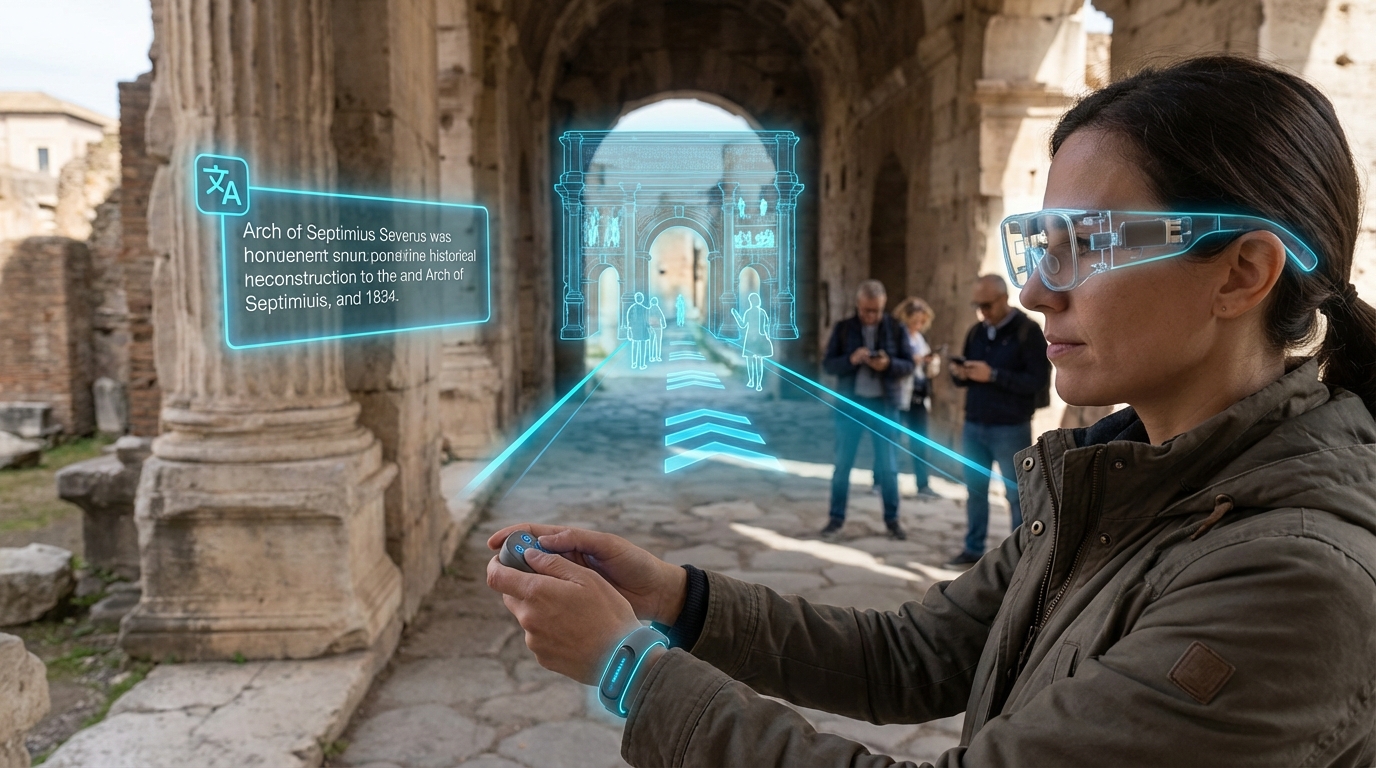

Edge AI Offline Assistants represent a fundamental shift in how artificial intelligence serves travelers, moving computational intelligence from distant cloud servers directly onto personal devices like smartphones, tablets, and wearables. This technology relies on highly compressed large language models (LLMs) and computer vision algorithms that have been optimized through techniques such as quantization, pruning, and knowledge distillation to fit within the memory and processing constraints of consumer hardware. Unlike traditional cloud-based AI services that require constant internet connectivity, these systems perform all inference operations locally on the device's processor or dedicated neural processing unit. The technical achievement lies in reducing model sizes from hundreds of gigabytes to just a few gigabytes while maintaining acceptable accuracy levels for practical applications. This compression enables sophisticated capabilities—real-time language translation, visual recognition of landmarks and signs, contextual route planning, and personalized recommendations—to function entirely offline, transforming the device into a self-contained intelligent assistant.
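To make the compression step concrete, here is a minimal sketch of the arithmetic behind quantization. It is an illustration only, not the method of any particular product. The 7-billion-parameter model, the helper names (`model_size_gb`, `quantize_int8`, `dequantize`), and the sample weights are all assumptions. The first helper estimates weight storage from parameter count and bits per weight. The other two show symmetric per-tensor int8 quantization, one simple scheme among many.

```python
def model_size_gb(num_params: float, bits_per_weight: int) -> float:
    """Approximate weight storage in gigabytes (1e9 bytes), ignoring metadata."""
    return num_params * bits_per_weight / 8 / 1e9


def quantize_int8(weights):
    """Symmetric per-tensor quantization: each weight w is stored as an
    integer q in [-127, 127] such that w is approximately scale * q."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate floating-point weights from the stored integers."""
    return [v * scale for v in q]


# Hypothetical 7-billion-parameter model (an assumed size, for illustration).
print(model_size_gb(7e9, 16))  # 14.0 GB at 16 bits per weight
print(model_size_gb(7e9, 4))   # 3.5 GB at 4 bits per weight

# Toy weights (made up) to show the rounding error quantization introduces.
weights = [0.42, -1.27, 0.05, 0.9]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, max_error <= scale / 2 + 1e-12)
```

Lower precision alone shrinks storage in proportion to the bit width. Getting from hundreds of gigabytes to a few also requires fewer parameters, which is where pruning and distillation come in. The reconstruction error per weight is bounded by half the quantization step, which is the accuracy cost traded for the smaller footprint.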

For travelers, connectivity gaps are one of the most persistent challenges when exploring unfamiliar destinations. Remote natural areas, international roaming limitations, underground transit systems, and regions with underdeveloped telecommunications infrastructure all create situations where cloud-dependent services become unavailable precisely when guidance is most needed. Edge AI Offline Assistants address this problem by keeping critical travel functions operational regardless of network conditions. This capability is particularly valuable for adventure travelers venturing into wilderness areas, budget-conscious tourists avoiding expensive international data plans, and business travelers navigating countries with restricted internet access. On-device inference also removes the network round trip that cloud-based systems require, so response time depends only on the device's own processing speed, which matters for time-sensitive tasks like translating emergency signage or identifying safe navigation routes. Furthermore, processing data locally enhances privacy by keeping sensitive information (travel patterns, personal preferences, captured images) on the user's device rather than transmitting it to external servers.

Current implementations of this technology are emerging across multiple platforms, with smartphone manufacturers increasingly incorporating dedicated AI processors into flagship devices specifically to support on-device intelligence. Early deployments focus on core travel functions such as offline translation between major language pairs, visual identification of common landmarks and cultural sites, and basic navigation assistance using pre-downloaded map data enhanced with AI-powered route optimization. Some travel technology companies are developing specialized applications that bundle compressed models tailored for specific regions or activities, allowing users to download relevant AI capabilities before departing on trips. The trajectory of this technology aligns with broader industry movements toward edge computing and data sovereignty, as travelers become more conscious of both connectivity limitations and privacy concerns. As compression techniques improve and device hardware becomes more powerful, these offline assistants are expected to expand their capabilities to include more nuanced cultural guidance, real-time environmental analysis for outdoor activities, and sophisticated multimodal interactions that combine visual, audio, and textual inputs to provide richer contextual understanding of unfamiliar environments.