Data Minimization & Purpose Limitation Controls

Data minimization and purpose limitation controls are a governance framework for one of the most pressing challenges in digital democracy: the tendency of civic data systems to expand beyond their original mandate. This phenomenon, known as "mission creep" or "function creep," occurs when data collected for one specific public purpose is gradually repurposed for unrelated or more intrusive uses. At the technical level, the controls combine architectural constraints with automated enforcement. Purpose tags embedded in data schemas label each data element with its authorized use, while access boundaries enforce compartmentalization between government functions. Privacy budgets cap how much information can be extracted from a dataset, so that repeated querying cannot gradually reveal sensitive patterns. Automated deletion protocols align retention periods with stated purposes, removing data once its legitimate use has expired. Together, these safeguards put into practice what is known as "privacy by design," embedding democratic accountability directly into the infrastructure of civic technology.
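To make the mechanisms above concrete, the following is a minimal Python sketch of purpose tags, an access boundary, and automated deletion. It is an illustration under stated assumptions, not a description of any deployed system: the names `TaggedRecord`, `PurposeViolation`, `read`, and `purge_expired`, as well as the purpose labels, are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class TaggedRecord:
    """A data element labeled with its authorized purposes (hypothetical schema)."""
    value: str
    purposes: frozenset       # uses the data was collected for
    collected_at: datetime
    retention: timedelta      # how long the stated purpose justifies keeping it


class PurposeViolation(Exception):
    """Raised when data is requested for an unauthorized or expired purpose."""


def read(record: TaggedRecord, purpose: str, now: datetime) -> str:
    """Access boundary: release the value only for an authorized, unexpired purpose."""
    if purpose not in record.purposes:
        raise PurposeViolation(f"purpose {purpose!r} is not authorized for this record")
    if now >= record.collected_at + record.retention:
        raise PurposeViolation("retention period has expired")
    return record.value


def purge_expired(records: list, now: datetime) -> list:
    """Automated deletion: keep only records still inside their retention period."""
    return [r for r in records if now < r.collected_at + r.retention]
```

In this sketch, a license plate reading tagged only with `"congestion_management"` can be read for that purpose, while a request tagged `"law_enforcement"` raises `PurposeViolation`. A real system would also need to authenticate who is asserting the purpose, which is outside the scope of the example.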

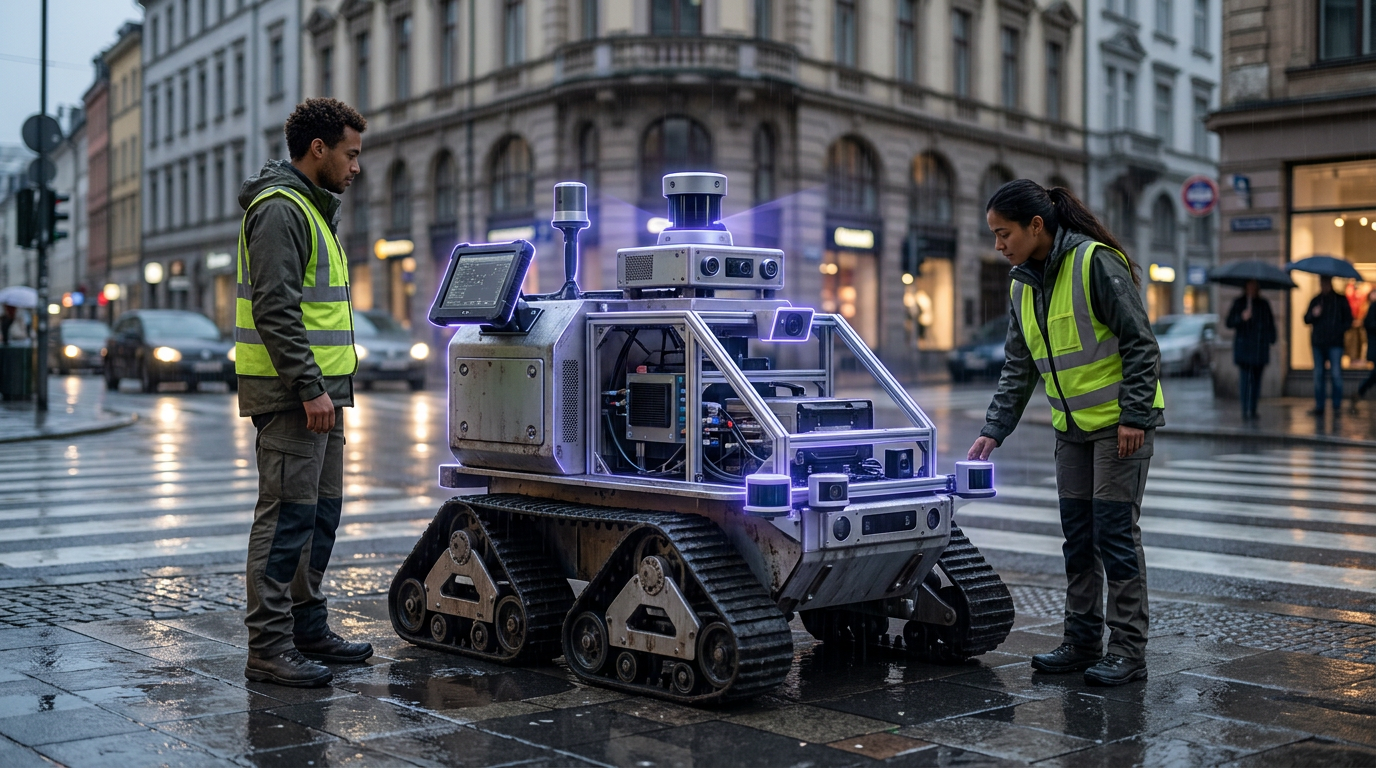

The fundamental problem these controls address is the erosion of public trust that follows when governments collect data under one justification and later repurpose it in ways citizens never anticipated or consented to. Without such safeguards, even well-intentioned civic initiatives can evolve into surveillance infrastructure. For instance, data from contact tracing systems deployed during public health emergencies has in some cases been made available to law enforcement, and traffic monitoring systems intended for congestion management have expanded into broader movement tracking. This pattern undermines the social contract between citizens and their governments and has a chilling effect on civic participation and democratic engagement. By implementing technical barriers against such expansion, these controls help maintain the legitimacy of digital governance systems. They let governments benefit from data-driven decision-making while preserving the principle that public institutions should collect only the minimum data necessary for their stated purposes and use it only as originally intended.

Early implementations of these controls are emerging in jurisdictions with strong data protection frameworks, particularly in European cities experimenting with smart city technologies and digital identity systems. Municipal governments are beginning to adopt data governance policies that mandate purpose limitation at the architectural level, rather than relying solely on policy documents or organizational culture. Industry analysts note that the technical controls are becoming more sophisticated, incorporating differential privacy, which mathematically bounds what an analysis can reveal about any individual, and zero-knowledge proofs, which allow a claim to be verified without disclosing the underlying data. The trajectory points toward democratic oversight being encoded into civic technology infrastructure itself, producing systems designed to be structurally incapable of surveillance overreach. This represents a fundamental shift from trusting institutions to behave responsibly toward building systems designed so that they cannot misbehave, even under political pressure. As digital governance becomes more prevalent, these controls will likely become essential infrastructure for maintaining democratic legitimacy in an increasingly data-driven civic landscape.
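As an illustration of how differential privacy and a privacy budget can work together, the sketch below answers counting queries with Laplace noise and refuses further queries once a fixed budget is spent. It assumes basic sequential composition, where the epsilon values of successive queries simply add up. The class and method names (`PrivateCounter`, `BudgetExhausted`, `count`) are hypothetical, and a production system would typically use a vetted library with a cryptographically secure noise source rather than this hand-rolled version.

```python
import random


class BudgetExhausted(Exception):
    """Raised when a query would exceed the dataset's total privacy budget."""


class PrivateCounter:
    """Answers counting queries with Laplace noise under a fixed epsilon budget."""

    def __init__(self, rows, total_epsilon, rng=None):
        self._rows = list(rows)
        self._remaining = total_epsilon
        self._rng = rng or random.Random()

    @property
    def remaining(self):
        """Privacy budget (epsilon) still available for future queries."""
        return self._remaining

    def _laplace(self, scale):
        # The difference of two i.i.d. exponentials with mean `scale`
        # is Laplace-distributed with location 0 and that scale.
        rate = 1.0 / scale
        return self._rng.expovariate(rate) - self._rng.expovariate(rate)

    def count(self, predicate, epsilon):
        """Return a noisy count of matching rows, spending `epsilon` of the budget."""
        if epsilon <= 0:
            raise ValueError("epsilon must be positive")
        if epsilon > self._remaining:
            raise BudgetExhausted("privacy budget exhausted; query refused")
        self._remaining -= epsilon
        true_count = sum(1 for row in self._rows if predicate(row))
        # A counting query has sensitivity 1, so the noise scale is 1 / epsilon.
        return true_count + self._laplace(1.0 / epsilon)
```

With a total budget of 1.0, two queries at epsilon 0.5 each succeed and any further query raises `BudgetExhausted`. Smaller epsilon values add more noise per answer but allow more queries before the budget runs out.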

Related Organizations

The European Union's independent data protection authority.

A community-driven organization building privacy-preserving AI technology, including PySyft for encrypted, privacy-preserving deep learning.

Data privacy software company enabling organizations to use sensitive data safely for analytics.

Provides secure data access control for analytics and AI, ensuring only authorized users/models access sensitive data.

The UK's independent regulator for data rights, providing specific guidance on AI and data protection.

US federal agency that develops technology standards and runs evaluations such as the Face Recognition Vendor Test (FRVT).

Think tank producing extensive research and best practices on the privacy implications of XR, eye-tracking, and brain-computer interfaces.

Secret Computing company using secure multi-party computation (MPC) and fully homomorphic encryption (FHE) for privacy-preserving analytics.

Data intelligence platform for privacy, security, and governance.

Provides privacy guardrails to prevent data leakage and unauthorized data collection.