Sovereign AI Language Models

Geography: Asia Pacific · East Asia · South Korea

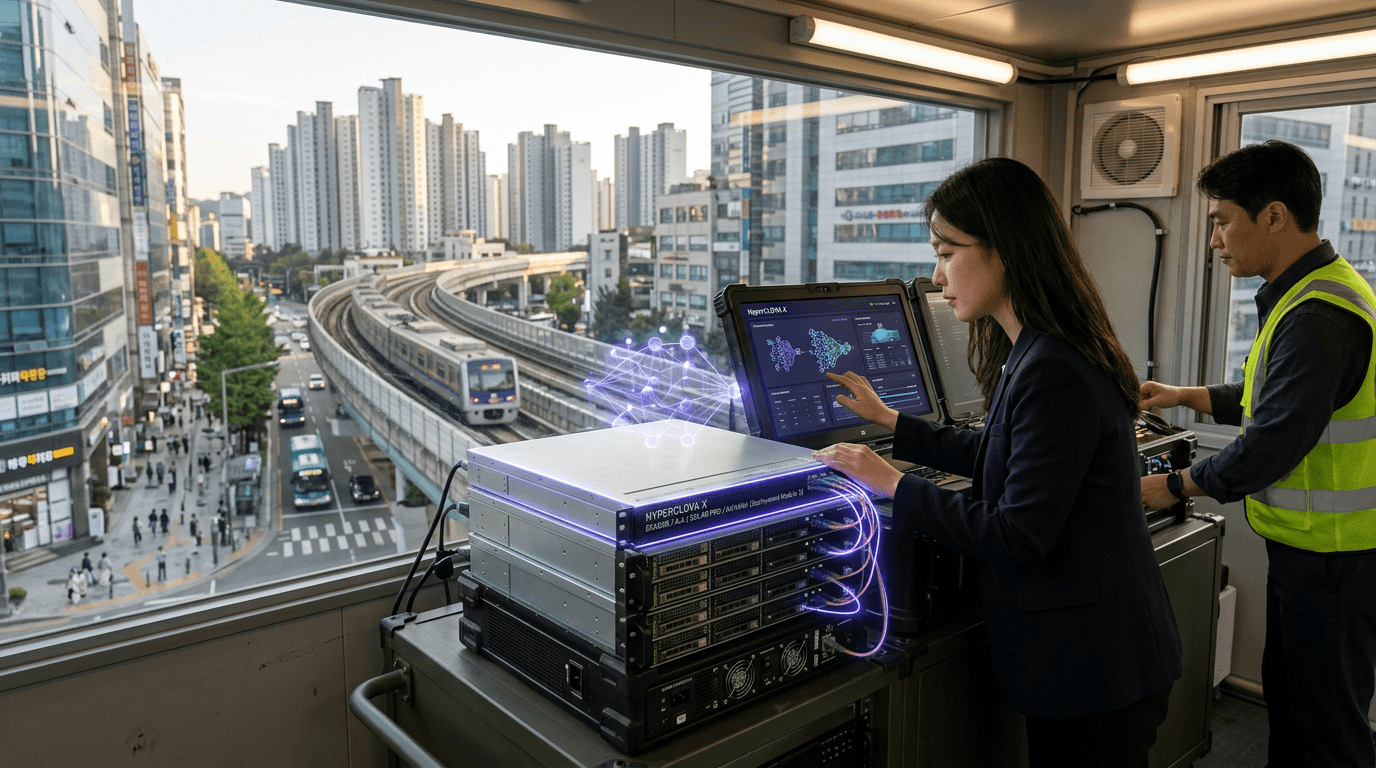

Naver's HyperCLOVA X is widely regarded as the most advanced Korean LLM. It was trained on a large Korean-language corpus, and Naver reports that it outperforms GPT-4 on Korean benchmarks. LG's Exaone is a Korean-English bilingual model designed for enterprise and scientific applications. SK Telecom's A.X powers the company's customer service and telecom operations. Upstage's Solar Pro is an open-weight model competitive with Meta's Llama models on multilingual tasks. Kakao's Kanana targets Korean conversational AI.

Korea's LLM diversity is remarkable for a country of 52 million people: five major independent efforts, each backed by a different chaebol or tech company. This reflects a national conviction that linguistic and cultural sovereignty requires domestic AI models rather than dependence on OpenAI or Google. The Korean government's $349M AI investment in 2025 includes compute subsidies earmarked for domestic model training.

The practical question is whether five separate Korean LLMs can each reach the scale needed to compete globally, or whether consolidation is inevitable. Naver holds the strongest position, with 60,000+ GPUs and the most comprehensive Korean training data, but smaller players such as Upstage have carved out niches in open-source models and enterprise deployment. Korea's LLM ecosystem is a microcosm of its broader innovation pattern: intense domestic competition driving rapid improvement.