Multilingual AI for 22 Official Languages

Geography: Asia Pacific · South Asia · India

India faces an AI challenge that few other countries share at this scale: building language models that work across 22 constitutionally scheduled languages written in 13 different scripts, spoken by 1.4 billion people. Only ~125 million Indians speak English fluently, yet most AI models are English-first. AI4Bharat, a research initiative at IIT Madras led by Professor Mitesh Khapra, has built IndicTrans3, an open-source translation model supporting all 22 scheduled Indian languages; its predecessor, IndicTrans2, was the first open-source model to cover all 22. The project also includes IndicBERT, IndicBART, and Airavata (an instruction-tuned LLM for Indian languages), along with large open datasets such as Sangraha and IndicCorp.

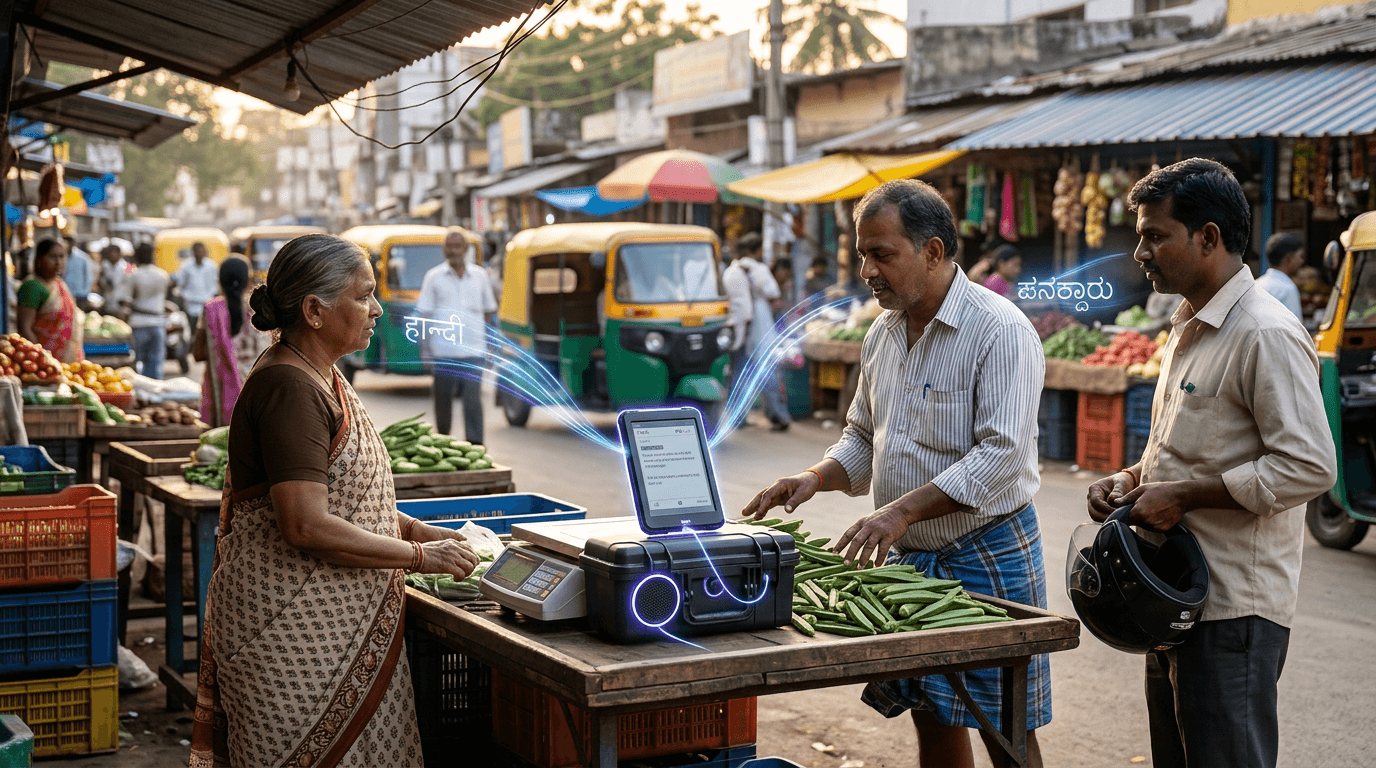

The Indian government's Bhashini platform operationalizes this research at scale. Bhashini provides real-time translation and speech recognition across Indian languages as a public API, so that any app can offer multilingual support. When a farmer in Tamil Nadu calls a government helpline, Bhashini can translate between Tamil and Hindi in real time. When a court document in Bengali needs to be understood by a lawyer in Gujarat, Bhashini can supply the translation. This is the linguistic layer of India Stack: just as UPI made payments language-agnostic, Bhashini aims to make digital services language-agnostic.

The implications are global. India's multilingual AI research is producing techniques (cross-lingual transfer learning, script-agnostic models, low-resource language training) that apply directly to many of the ~7,000 languages spoken worldwide. Africa faces a similar challenge (2,000+ languages); so does Southeast Asia. India is building a playbook for inclusive AI that doesn't assume English as the default. Companies like Sarvam AI and Krutrim are commercializing this research, while the foundational open-source work from AI4Bharat helps keep it a public good.
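To make "script-agnostic" concrete, here is a minimal sketch of one simple idea behind it: mapping text from several Indic scripts onto a single script, so that related words in different languages share a surface form. It relies on the fact that the Unicode blocks for the major Indic scripts are 128 code points wide and laid out in parallel. The function name `to_common_script` and the choice of Devanagari as the common script are assumptions made for this illustration. It is not presented as AI4Bharat's or Bhashini's actual pipeline, and the mapping is only approximate: some scripts lack letters that others have, and Urdu and other Perso-Arabic-script languages are not covered at all.

```python
# Start of the Unicode block for several Indic scripts.
# Each block is 128 code points wide and follows a parallel layout.
SCRIPT_BASE = {
    "devanagari": 0x0900,
    "bengali": 0x0980,
    "gurmukhi": 0x0A00,
    "gujarati": 0x0A80,
    "odia": 0x0B00,
    "tamil": 0x0B80,
    "telugu": 0x0C00,
    "kannada": 0x0C80,
    "malayalam": 0x0D00,
}
BLOCK_SIZE = 0x80


def to_common_script(text: str, source: str, target: str = "devanagari") -> str:
    """Shift characters from the source script's block into the target's block.

    Characters outside the source block (spaces, digits, Latin text,
    punctuation) are passed through unchanged. This is a rough,
    offset-based mapping, not a linguistically exact transliteration.
    """
    src = SCRIPT_BASE[source]
    dst = SCRIPT_BASE[target]
    out = []
    for ch in text:
        offset = ord(ch) - src
        if 0 <= offset < BLOCK_SIZE:
            out.append(chr(dst + offset))
        else:
            out.append(ch)
    return "".join(out)


if __name__ == "__main__":
    # Bengali "ভারত" (Bharat) mapped into Devanagari.
    print(to_common_script("\u09ad\u09be\u09b0\u09a4", "bengali"))  # भारत
```

After this step, a tokenizer sees the Bengali and Hindi spellings of the word as the same string, which is one way a shared vocabulary can help transfer from higher-resource to lower-resource languages.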