Hyperscale AI Data Center Infrastructure

Geography: Americas · North America · United States

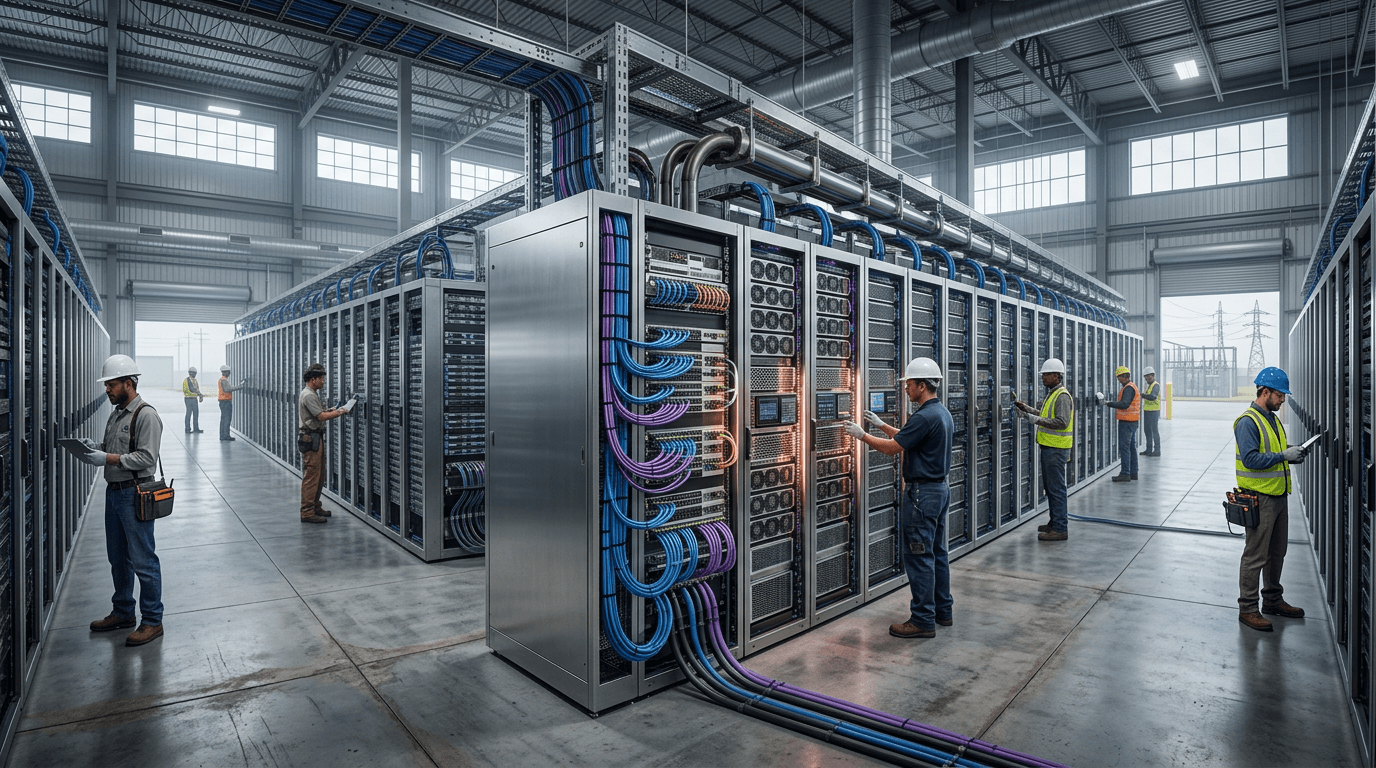

The AI infrastructure buildout is the largest capital expenditure program in technology history. Microsoft, Google, Amazon, and Meta are each investing $50-80 billion annually in data center construction, primarily for AI training and inference. Individual campuses are reaching gigawatt-scale power consumption, comparable to the electricity demand of a small city. Handling the heat density of AI accelerators requires novel cooling technologies such as liquid cooling and immersion cooling.
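To give a rough sense of how accelerator counts translate into campus-level power, the sketch below does the back-of-envelope arithmetic. Every number in it is an illustrative assumption rather than a figure from this brief: the per-accelerator draw (`watts_per_accelerator`), the multiplier for CPUs, memory, and networking (`server_overhead`), and the power usage effectiveness (`pue`) that accounts for cooling and power-delivery losses.

```python
def campus_power_mw(num_accelerators, watts_per_accelerator,
                    server_overhead=1.5, pue=1.2):
    """Estimate total facility power in megawatts.

    All defaults are hypothetical placeholders, not measured values:
    - server_overhead: multiplier for non-accelerator IT load (CPUs, NICs, storage)
    - pue: power usage effectiveness (total facility power / IT power)
    """
    it_load_watts = num_accelerators * watts_per_accelerator * server_overhead
    return it_load_watts * pue / 1e6


def accelerators_for_power(target_mw, watts_per_accelerator,
                           server_overhead=1.5, pue=1.2):
    """Invert the estimate: how many accelerators a given power budget supports."""
    watts_per_unit_total = watts_per_accelerator * server_overhead * pue
    return int(target_mw * 1e6 / watts_per_unit_total)


if __name__ == "__main__":
    # Assumed scenario: 100,000 accelerators at 1 kW each.
    print(f"{campus_power_mw(100_000, 1_000):.0f} MW")
    # Assumed scenario: accelerators supportable by a 1 GW (1,000 MW) campus.
    print(accelerators_for_power(1_000, 1_000))
```

Under these assumed inputs, a cluster of 100,000 accelerators lands in the low hundreds of megawatts, and a gigawatt campus corresponds to roughly half a million accelerators. Different hardware or a different PUE would shift the result proportionally.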

This infrastructure investment creates a physical moat for US AI leadership. Training frontier models requires clusters of tens of thousands of GPUs operating in concert, interconnected by ultra-low-latency networks. The engineering complexity of building and operating these facilities at scale is itself a competitive barrier. The pivot to inference infrastructure in 2026 is driving demand for distributed, modular 'micro-data centers' closer to end users.

The energy demands of AI data centers are reshaping US energy policy. Hyperscalers are signing power purchase agreements with nuclear plants, geothermal plants, and gas plants equipped with carbon capture and storage (CCS). Some data center projects face grid connection delays of 5-7 years because transmission infrastructure is insufficient. Energy, rather than compute, may therefore become the binding limit on AI scaling.