Brain-Computer Interface Communication

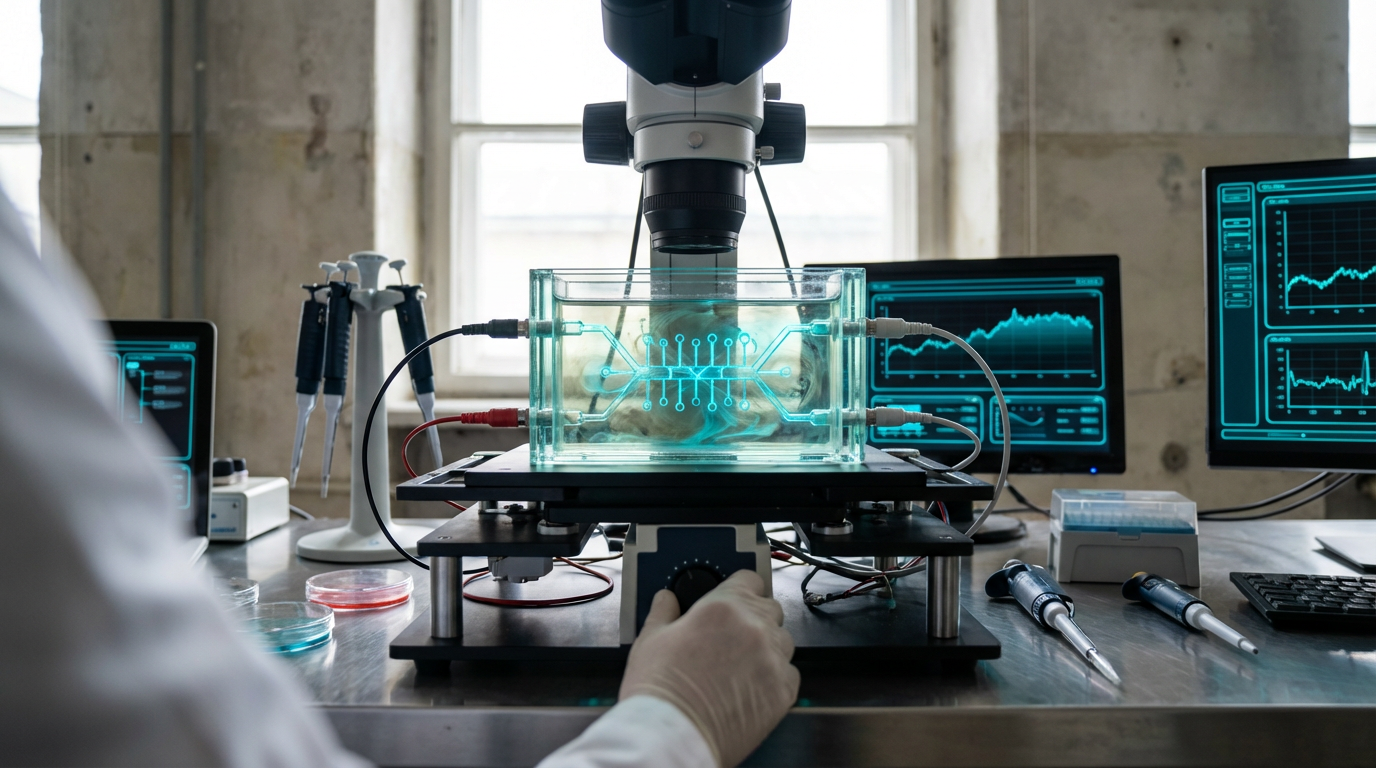

Brain-computer interface (BCI) communication translates neural activity directly into commands or messages, without requiring physical movement or speech. The technology relies on electrodes, either surgically implanted on or within the brain or positioned non-invasively on the scalp, that detect and record the electrical signals generated by neurons. Machine learning algorithms then process these signals and decode the patterns associated with specific intentions, thoughts, or imagined movements. Invasive systems, which place electrodes directly on or within brain tissue, capture higher-resolution signals and enable more precise control, while non-invasive approaches based on electroencephalography (EEG) are safer and more accessible but offer lower signal fidelity. In both cases, the algorithms are trained to recognise the neural signatures that correspond to particular mental states or intended actions, creating a direct communication pathway between the brain and external devices.
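The decoding step described above can be illustrated with a deliberately small sketch. It is not how any particular BCI system works: the signals are synthetic, the single feature (power at one frequency) and the nearest-centroid classifier stand in for the far richer pipelines used in practice, and every name in it (`band_power`, `synthetic_trial`, `train_centroids`, `decode`, the two labels, and the amplitude values) is hypothetical. The toy data assumes that a 10 Hz rhythm is strong at rest and weaker during imagined movement.

```python
import math
import random

FREQ_HZ = 10.0        # assumed rhythm of interest (illustrative value)
SAMPLE_RATE_HZ = 100  # assumed sampling rate (illustrative value)

def band_power(signal, freq_hz=FREQ_HZ, sample_rate_hz=SAMPLE_RATE_HZ):
    """Power of `signal` at one frequency, from a single-bin discrete Fourier transform."""
    n = len(signal)
    step = 2 * math.pi * freq_hz / sample_rate_hz
    re = sum(x * math.cos(step * i) for i, x in enumerate(signal))
    im = sum(x * math.sin(step * i) for i, x in enumerate(signal))
    return (re * re + im * im) / (n * n)

def synthetic_trial(rng, amplitude, seconds=1.0):
    """Hypothetical one-channel recording: a sinusoidal rhythm plus Gaussian noise."""
    n = int(SAMPLE_RATE_HZ * seconds)
    step = 2 * math.pi * FREQ_HZ / SAMPLE_RATE_HZ
    return [amplitude * math.sin(step * i) + rng.gauss(0.0, 0.5) for i in range(n)]

def train_centroids(trials, labels):
    """Training: store the mean band power observed for each labelled mental state."""
    sums, counts = {}, {}
    for trial, label in zip(trials, labels):
        sums[label] = sums.get(label, 0.0) + band_power(trial)
        counts[label] = counts.get(label, 0) + 1
    return {label: sums[label] / counts[label] for label in sums}

def decode(trial, centroids):
    """Decoding: pick the label whose stored mean power is closest to this trial's power."""
    power = band_power(trial)
    return min(centroids, key=lambda label: abs(centroids[label] - power))

# Toy data: amplitudes are made up for illustration only.
AMPLITUDES = {"rest": 2.0, "imagined_movement": 0.5}
rng = random.Random(0)  # fixed seed so the sketch is reproducible

train_labels = ["rest", "imagined_movement"] * 20
train_trials = [synthetic_trial(rng, AMPLITUDES[label]) for label in train_labels]
centroids = train_centroids(train_trials, train_labels)

test_labels = ["rest", "imagined_movement"] * 10
predictions = [decode(synthetic_trial(rng, AMPLITUDES[label]), centroids)
               for label in test_labels]
accuracy = sum(p == t for p, t in zip(predictions, test_labels)) / len(test_labels)
```

The sketch follows the same two phases as the paragraph: a training phase that learns what each mental state looks like in the recorded signal, and a decoding phase that maps a new recording to the most similar learned state. Real systems typically use many channels, many features, and more capable classifiers, and must cope with signals that drift over time.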

The primary challenge this technology addresses is the severe or complete loss of motor function and communication ability experienced by individuals with conditions such as locked-in syndrome, advanced amyotrophic lateral sclerosis (ALS), or severe spinal cord injuries. For these patients, traditional assistive technologies that rely on residual muscle control become ineffective, leaving them isolated despite intact cognitive function. Brain-computer interfaces bypass damaged motor pathways entirely, allowing users to control cursors, type messages, operate wheelchairs, or manipulate robotic limbs through neural activity alone. Beyond medical rehabilitation, the technology could change human-computer interaction more broadly by removing the bottleneck of physical input devices. Integration with emerging telecommunications infrastructure could enable new forms of communication, in which thoughts are transmitted directly between individuals or to digital systems without the intermediary steps of speaking, typing, or gesturing.

Clinical trials and research deployments have demonstrated remarkable progress, with paralysed individuals successfully using brain-computer interfaces to compose text, browse the internet, and control prosthetic limbs with increasing speed and accuracy. Early commercial systems are beginning to emerge for medical applications, though widespread adoption remains limited by factors including surgical risks, signal stability, and the need for extensive user training. The convergence of this technology with next-generation wireless networks could dramatically expand its applications beyond medical contexts. Researchers envision scenarios where neural interfaces enable silent communication in noisy environments, provide intuitive control of augmented and virtual reality experiences, or allow seamless interaction with smart city infrastructure through thought alone. As signal processing algorithms improve and electrode technologies become more biocompatible and durable, brain-computer interface communication stands poised to fundamentally transform not just assistive technology, but the very nature of human connectivity and digital interaction in increasingly networked urban environments.

Related Organizations

A consortium of universities and hospitals developing and testing BCI technologies for people with paralysis.

Neurotechnology company developing implantable brain-machine interfaces.

Developed the Stentrode, an endovascular brain interface implanted via the jugular vein without open brain surgery.

A leading neurosurgery research lab focusing on the speech cortex and speech neuroprosthetics.

Manufacturer of the Utah Array, the gold-standard electrode system used in the majority of human BCI research.

Builds AI-powered BCI headsets with AR displays for accessibility and communication.

Creating the Connexus Direct Data Interface, a high-data-rate BCI for severe motor impairment.

Develops high-performance BCI hardware, including the Unicorn Hybrid Black interface for developers.

Developing the Layer 7 Cortical Interface, a thin-film electrode array designed to sit on the brain's surface without penetrating tissue.

Translational research center developing implantable neuro-sensing devices for communication restoration in locked-in patients.

Developing graphene-based neural interfaces for high-resolution brain decoding and modulation.

Creates open-source brain-computer interface tools and the Galea headset (integrating with VR) for researching physiological responses.

Develops BCI-enabled headphones that detect focus and intent to control digital experiences.