Smart Textile Sensors

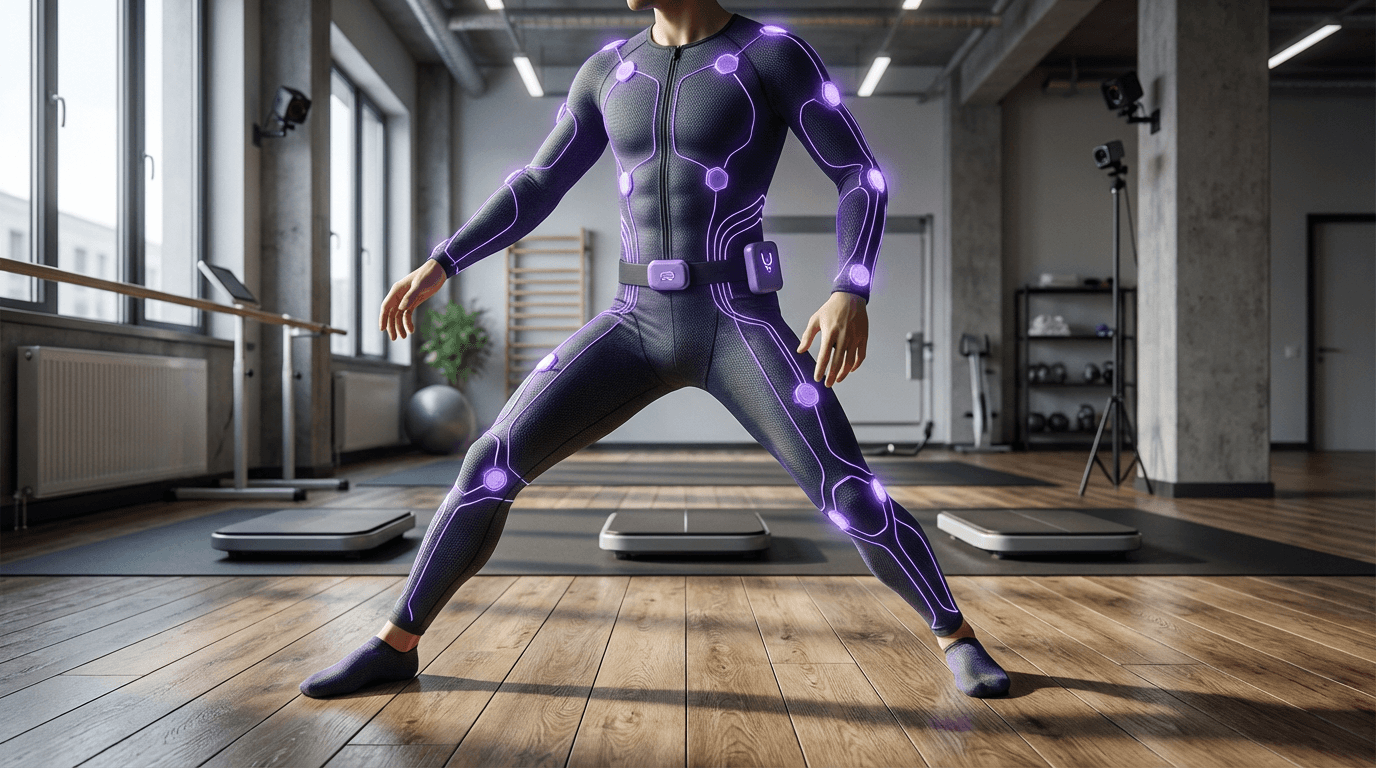

Smart textile sensors represent a convergence of materials science, electronics, and textile engineering that turns ordinary fabric into a data-capture platform. At their core, these systems integrate conductive yarns, flexible circuit elements, and miniaturised sensors directly into the weave or knit structure of clothing. The conductive threads, often made of silver-coated nylon, carbon-based fibres, or copper-polymer blends, carry electrical signals throughout the garment. Embedded strain and pressure sensors register changes in fabric tension and contact pressure, while accelerometers measure the acceleration and orientation of the body segments they sit on. Unlike traditional motion-capture systems that rely on external cameras or rigid body-mounted devices, smart textile sensors track movement by measuring how the fabric itself stretches and deforms as the wearer moves, supplemented by the embedded inertial sensors. The result is a continuous stream of biomechanical data across the areas the garment covers, which can be processed for position tracking, gesture recognition, and physiological monitoring.
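As a rough illustration of how fabric deformation becomes a usable number, the sketch below converts the resistance of a single stitched strain sensor into a joint angle. It applies the standard strain-gauge relation ΔR/R0 = GF × strain and then maps strain to angle with a two-point calibration. The `StrainChannel` class, its parameter values, and the assumption of a linear strain-to-angle response are all hypothetical; real textile sensors usually show nonlinearity and hysteresis, so practical systems calibrate per wearer and often use more elaborate models.

```python
from dataclasses import dataclass


@dataclass
class StrainChannel:
    """One resistive strain sensor stitched over a joint (hypothetical example)."""

    r0_ohms: float                       # resistance with the fabric relaxed
    gauge_factor: float                  # (dR / R0) per unit strain, assumed constant
    cal_strain: tuple[float, float]      # strain measured in two calibration poses
    cal_angle_deg: tuple[float, float]   # known joint angles for those two poses

    def strain(self, r_ohms: float) -> float:
        # Strain-gauge relation: dR / R0 = GF * strain, solved for strain.
        return (r_ohms - self.r0_ohms) / (self.r0_ohms * self.gauge_factor)

    def joint_angle(self, r_ohms: float) -> float:
        # Linear interpolation between the two calibration poses
        # (assumes a linear response and two distinct calibration strains).
        (s0, s1), (a0, a1) = self.cal_strain, self.cal_angle_deg
        eps = self.strain(r_ohms)
        return a0 + (eps - s0) * (a1 - a0) / (s1 - s0)


if __name__ == "__main__":
    # Hypothetical knee sensor: 100 ohms relaxed, gauge factor 2,
    # strain 0.0 at a straight leg and 0.15 at a 90-degree bend.
    knee = StrainChannel(
        r0_ohms=100.0,
        gauge_factor=2.0,
        cal_strain=(0.0, 0.15),
        cal_angle_deg=(0.0, 90.0),
    )
    print(round(knee.joint_angle(115.0), 1))  # 45.0
```

A garment would run one such channel per sensor and feed the resulting angles into a body model; the two-point calibration keeps the example short but would typically be replaced by a per-wearer fit over many poses.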

The entertainment and healthcare industries face persistent challenges in capturing natural human movement without constraining the subject or requiring controlled environments. Film and game studios have long relied on optical motion-capture systems that demand specialised studios, reflective markers, and an unobstructed line of sight, while medical professionals struggle to monitor patients' gait, posture, and activity levels outside clinical settings. Smart textile sensors address these limitations by enabling capture in almost any environment, from a patient's home to an outdoor athletic field, without the infrastructure overhead of traditional systems. For animation and virtual production, this allows performers to work in natural settings while their movements drive digital characters in real time. In healthcare, similar garments can help detect early signs of mobility decline, monitor rehabilitation progress, or alert caregivers to falls while patients go about their daily routines. The sports performance sector benefits from detailed biomechanical analysis during actual training or competition rather than under artificial laboratory conditions.
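Fall alerts of this kind are often prototyped with a simple threshold heuristic on the accelerometer magnitude: a brief near-free-fall phase (magnitude well below 1 g) followed shortly by an impact spike (well above 1 g). The sketch below implements that heuristic. The `detect_fall` function, its threshold values, and the sample format are illustrative assumptions rather than figures from any particular garment, and deployed systems typically add posture checks or learned classifiers to reduce false alarms.

```python
import math
from typing import Iterable, Optional, Tuple

# One accelerometer reading: (time in seconds, ax, ay, az), axes in units of g.
Sample = Tuple[float, float, float, float]


def detect_fall(
    samples: Iterable[Sample],
    free_fall_g: float = 0.4,  # hypothetical: below this looks like free fall
    impact_g: float = 2.5,     # hypothetical: above this looks like an impact
    max_gap_s: float = 0.5,    # hypothetical: impact must follow free fall within this window
) -> Optional[float]:
    """Return the timestamp of the first impact preceded by free fall, or None."""
    last_free_fall_t: Optional[float] = None
    for t, ax, ay, az in samples:
        magnitude = math.sqrt(ax * ax + ay * ay + az * az)
        if magnitude < free_fall_g:
            # Remember the most recent moment the wearer appeared to be falling.
            last_free_fall_t = t
        elif magnitude > impact_g and last_free_fall_t is not None:
            # Only an impact shortly after free fall counts as a fall.
            if t - last_free_fall_t <= max_gap_s:
                return t
    return None


if __name__ == "__main__":
    # Synthetic trace: standing (about 1 g), a short drop, then a hard landing.
    trace = [
        (0.0, 0.0, 0.0, 1.0),
        (0.5, 0.0, 0.0, 1.0),
        (1.0, 0.0, 0.0, 0.2),
        (1.3, 0.0, 0.5, 3.0),
        (2.0, 0.0, 0.0, 1.0),
    ]
    print(detect_fall(trace))  # 1.3
```

Tracking the most recent free-fall sample, rather than the first, means a descent longer than the window still triggers an alert when the impact arrives; a spike with no preceding free fall, such as a stamp or a jump landing from a controlled step, is ignored.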

Early commercial deployments have emerged across multiple sectors, with several companies now offering sensor-equipped compression garments for athletic training and physical therapy. Research groups and motion-capture studios have begun incorporating these systems into their workflows, particularly for outdoor shoots or extended capture sessions where traditional setups are impractical. The technology aligns with broader trends toward ubiquitous computing and ambient intelligence, in which sensing is invisibly integrated into everyday objects. As manufacturing techniques improve and costs decline, smart textile sensors are positioned to expand beyond specialised applications into consumer wellness products and mainstream virtual reality interfaces. Combined with advances in machine learning for movement analysis and edge computing for on-garment processing, this points toward a future in which spatial tracking is as commonplace and unobtrusive as clothing itself, reshaping how physical movement carries over into digital experiences.

Related Organizations

Developer of the Skiin textile computing platform, which knits sensors directly into fabric.

Nextiles

United States · Startup

Materials science company building smart fabrics that capture biomechanical data through sewing technology.

Produces a full-body haptic suit using electro-muscle stimulation (EMS) and TENS to simulate physical sensations.

Develops smart fabric sensors originally designed for musical instruments, now used in VR and safety.

Home of the Affective Computing research group led by Rosalind Picard.

Develops high-precision stretchable sensors for motion capture gloves.

Spinoff from the University of Tokyo developing 'e-skin' smart apparel.

German research institute specializing in reliability and micro-integration, including e-textiles.

Produces the Loomia Electronic Layer (LEL), a soft flexible circuit system for textiles.

Develops smart clothing with integrated body sensors for health tracking.