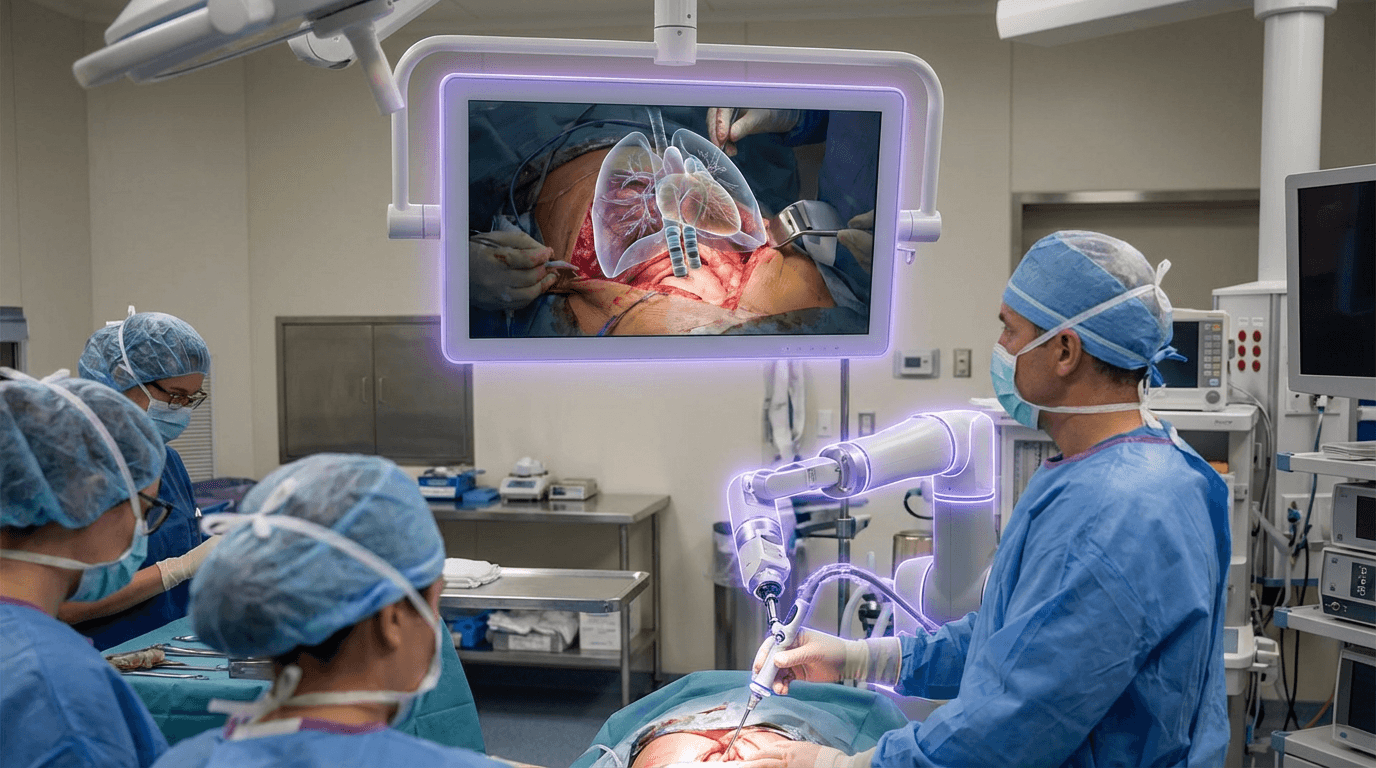

Digital Twin Surgery

Digital twin surgery combines medical imaging, augmented reality, and real-time computation to change how surgeons interact with patient anatomy during procedures. The technology builds a dynamic, three-dimensional digital model of a patient's internal structures from pre-operative imaging data (typically CT scans, MRI sequences, or ultrasound), then registers that model to the patient and overlays it on the patient's body during the operation. Registration relies on spatial tracking systems, fiducial markers, or computer vision algorithms, and aims to hold millimeter-level accuracy as the patient's position shifts or as surgical instruments move through tissue. The surgeon views the overlay through a head-mounted display or specialized AR glasses, in effect seeing beneath the skin surface while keeping the surgical field in direct view. Unlike traditional approaches, in which surgeons must mentally reconstruct anatomy from images on separate monitors, this approach places critical anatomical information directly in the surgeon's visual field, reducing cognitive load and the need to look away from the operative site.
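
The fiducial-based registration step described above can be sketched as a least-squares rigid alignment between marker positions in the pre-operative model and the same markers as located by the tracking system. The Python sketch below uses Horn's closed-form quaternion method with a simple power iteration. It is one common way to solve this problem, not necessarily what any particular commercial system does. The function names, the marker coordinates, and the simulated tracker readings are all illustrative assumptions.

```python
import math

def _centroid(points):
    n = len(points)
    return [sum(p[k] for p in points) / n for k in range(3)]

def register_rigid(model_pts, patient_pts, iterations=500):
    """Estimate rotation R and translation t so that R * model + t ~= patient.

    Needs at least three non-collinear, corresponding fiducial points.
    """
    if len(model_pts) != len(patient_pts) or len(model_pts) < 3:
        raise ValueError("need >= 3 corresponding fiducial points")
    cm, cp = _centroid(model_pts), _centroid(patient_pts)
    # Cross-covariance of the centred point sets: S[a][b] = sum(model_a * patient_b).
    S = [[0.0] * 3 for _ in range(3)]
    for m, p in zip(model_pts, patient_pts):
        for a in range(3):
            for b in range(3):
                S[a][b] += (m[a] - cm[a]) * (p[b] - cp[b])
    (sxx, sxy, sxz), (syx, syy, syz), (szx, szy, szz) = S
    # Horn's symmetric 4x4 matrix; the eigenvector of its largest eigenvalue
    # is the unit quaternion (w, x, y, z) of the best-fit rotation.
    N = [
        [sxx + syy + szz, syz - szy, szx - sxz, sxy - syx],
        [syz - szy, sxx - syy - szz, sxy + syx, szx + sxz],
        [szx - sxz, sxy + syx, -sxx + syy - szz, syz + szy],
        [sxy - syx, szx + sxz, syz + szy, -sxx - syy + szz],
    ]
    # Shift by a Gershgorin bound so that power iteration converges to the
    # algebraically largest eigenvalue rather than the largest in magnitude.
    shift = max(sum(abs(v) for v in row) for row in N)
    q = [1.0, 0.3, 0.2, 0.1]  # arbitrary, deliberately asymmetric start vector
    for _ in range(iterations):
        q = [sum(N[i][j] * q[j] for j in range(4)) + shift * q[i] for i in range(4)]
        norm = math.sqrt(sum(v * v for v in q))
        q = [v / norm for v in q]
    w, x, y, z = q
    R = [
        [w*w + x*x - y*y - z*z, 2 * (x*y - w*z), 2 * (x*z + w*y)],
        [2 * (x*y + w*z), w*w - x*x + y*y - z*z, 2 * (y*z - w*x)],
        [2 * (x*z - w*y), 2 * (y*z + w*x), w*w - x*x - y*y + z*z],
    ]
    t = [cp[i] - sum(R[i][k] * cm[k] for k in range(3)) for i in range(3)]
    return R, t

def apply_transform(R, t, point):
    """Map a model-space point into tracker (patient) space."""
    return [sum(R[i][k] * point[k] for k in range(3)) + t[i] for i in range(3)]

def fiducial_registration_error(R, t, model_pts, patient_pts):
    """Root-mean-square residual between mapped model points and tracked points."""
    sq = 0.0
    for m, p in zip(model_pts, patient_pts):
        mapped = apply_transform(R, t, m)
        sq += sum((mapped[i] - p[i]) ** 2 for i in range(3))
    return math.sqrt(sq / len(model_pts))

# Hypothetical fiducial positions in millimetres (illustrative values only).
model = [[0, 0, 0], [100, 0, 0], [0, 80, 0], [0, 0, 60]]
# Simulated tracker readings: the model rotated 90 degrees about z, then shifted.
patient = [[10 - p[1], 20 + p[0], 30 + p[2]] for p in model]

R, t = register_rigid(model, patient)
fre = fiducial_registration_error(R, t, model, patient)
print(f"fiducial registration error: {fre:.6f} mm")
```

The root-mean-square residual, often called fiducial registration error, gives a rough check on alignment quality. A real system would also have to cope with tracking noise, tissue deformation, and continuous re-registration as the patient moves, none of which this sketch attempts.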

The primary challenge this technology addresses is the inherent limitation of human vision during minimally invasive and complex surgical procedures. Surgeons have historically relied on anatomical knowledge, tactile feedback, and two-dimensional imaging displayed on external monitors to navigate around critical structures like major blood vessels, nerves, and healthy tissue boundaries. This mental translation between imaging data and the actual surgical field introduces risk, particularly in procedures involving tumors with irregular margins, vascular anomalies, or operations in anatomically dense regions. Digital twin surgery eliminates much of this guesswork by providing surgeons with a persistent, spatially accurate view of subsurface anatomy, enabling more precise incisions, safer dissection paths, and more complete tumor resections while preserving healthy tissue. This capability is particularly valuable in neurosurgery, oncological procedures, and vascular interventions where millimeter-scale precision can mean the difference between successful outcomes and serious complications.

Early clinical deployments have reported improvements in surgical precision and patient outcomes, with research suggesting reduced operative times, decreased blood loss, and lower rates of inadvertent damage to critical structures. Pilot programs in specialized surgical centers have focused on hepatic resections, where visualizing tumor boundaries and vascular anatomy in three dimensions helps surgeons achieve cleaner margins, and on spinal procedures, where nerve root visualization reduces the risk of neurological injury. The technology is also finding applications in training environments, where surgical residents can practice complex procedures with augmented guidance before operating on actual patients. As computational processing power increases and AR display technology matures, digital twin surgery is positioned to expand beyond specialized academic centers into broader clinical practice. This trajectory aligns with the larger movement toward precision medicine and minimally invasive techniques, where the goal is not simply to treat disease but to do so with maximum efficacy and minimum collateral impact on the patient's body. Over time, that could reshape surgical practice into a more data-integrated, visually augmented discipline.

Related Organizations

Creators of SurgicalAR, which overlays holographic data onto the patient for surgical navigation.

Uses light field technology to create a real-time 3D representation of the surgical field.

Developed the xvision Spine System, an AR headset that gives surgeons 'x-ray vision'.

Provides a Precision VR platform for surgical planning and patient engagement.

A major medical technology company focusing on software-driven medical technology and digital surgery.

Researching sensory feedback in prosthetics (Paul Marasco's lab) to restore the sense of agency and ownership over artificial limbs.

Develops True3D, a holographic viewing system for medical anatomy.

A technology platform that allows clinicians to virtually 'scrub in' to any operating room.

A major medical technology company offering 'AI-Rad Companion', a family of AI-powered, cloud-based augmented workflow solutions.

Offers the iBed Wireless system and connected OR technologies to integrate equipment status with hospital records.