Longevity Model Interpretability Standards

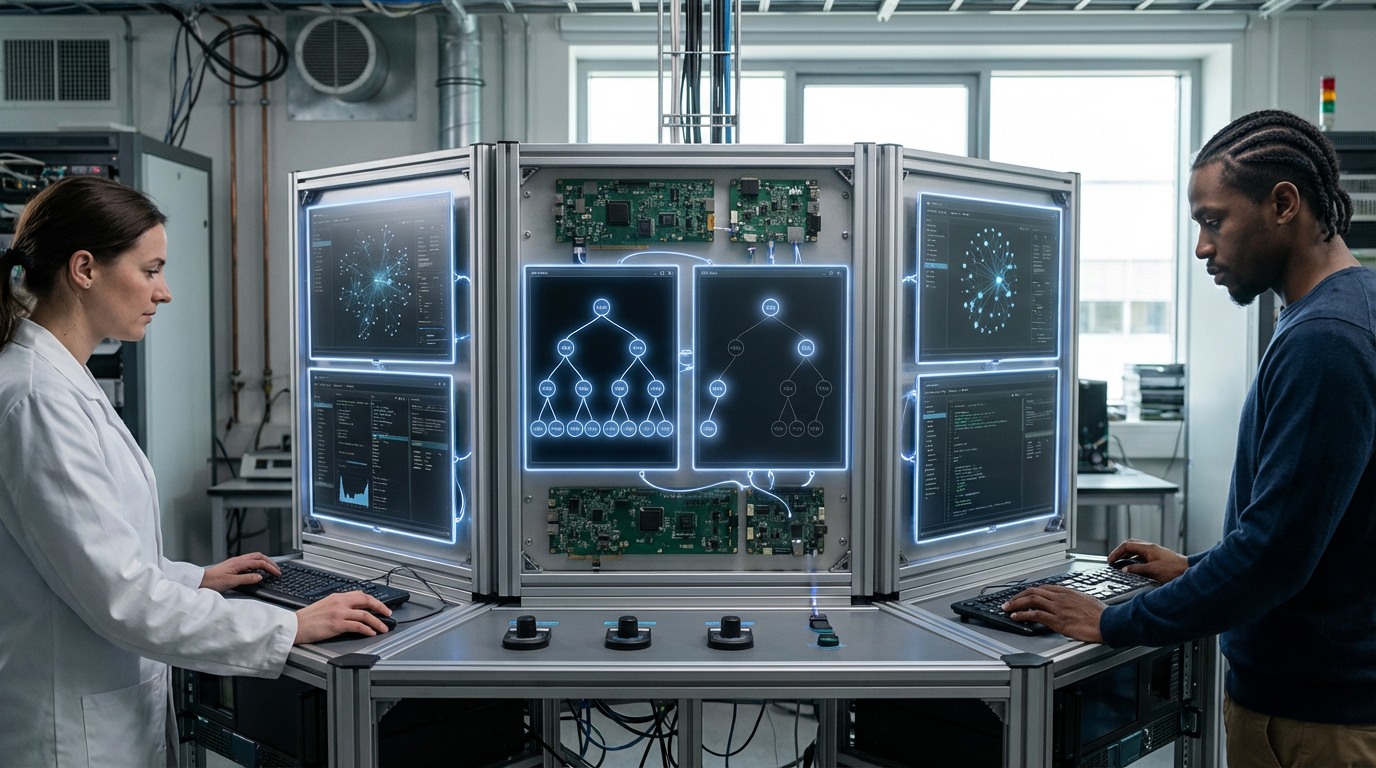

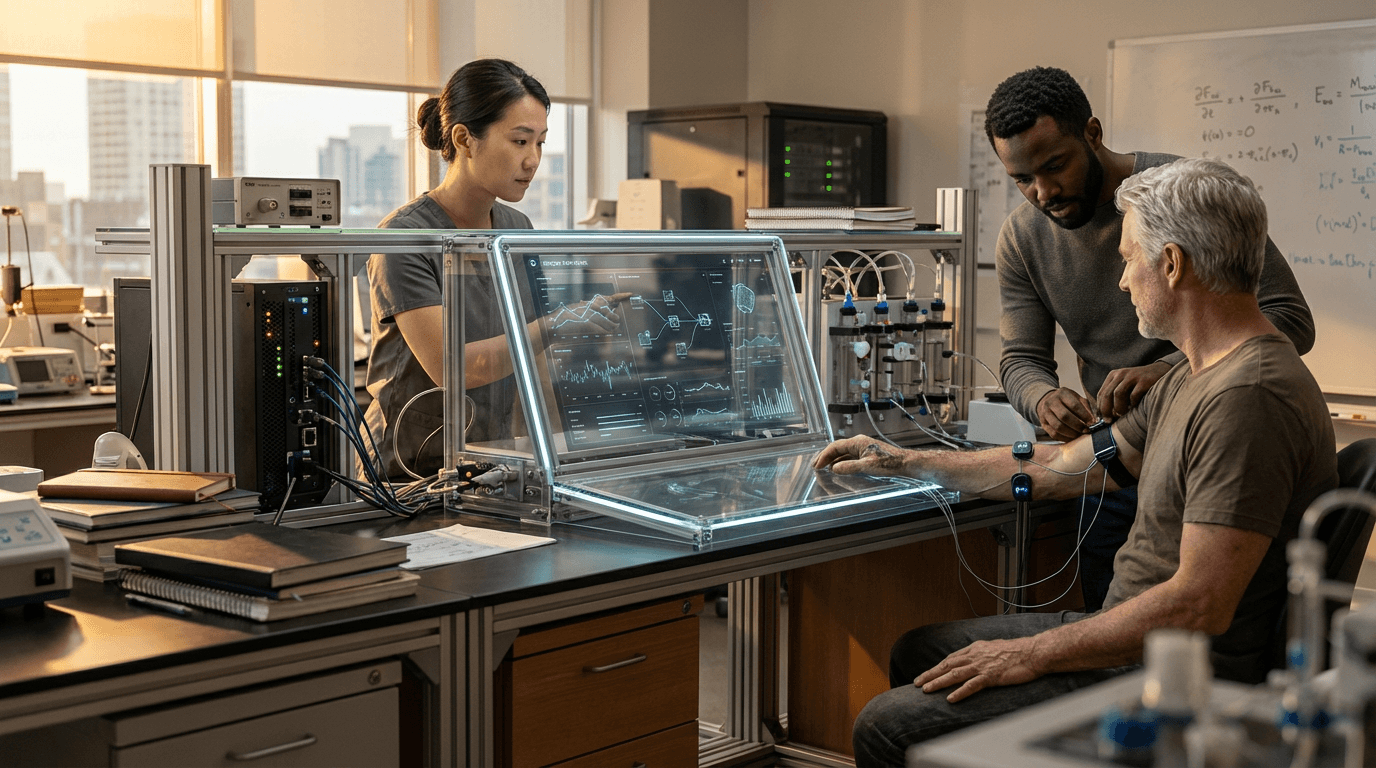

Longevity Model Interpretability Standards address a central challenge in AI-driven healthspan prediction: the opacity of machine learning systems that make consequential recommendations about human aging and mortality. As digital twins, aging clocks, and other predictive models grow more sophisticated at forecasting biological age and disease risk, many rely on deep learning architectures that function as "black boxes," producing outputs without revealing the underlying reasoning. This lack of transparency is particularly problematic when the systems inform life-altering decisions, such as whether to pursue aggressive anti-aging interventions, adjust medication regimens, or allocate scarce medical resources. The core technical challenge is balancing accuracy with interpretability, because the most powerful predictive algorithms often trade explainability for performance. The standards require model developers to implement techniques such as attention mechanisms, feature importance scoring, and counterfactual explanations, so that clinicians and patients can trace how specific biomarkers, genetic factors, or lifestyle variables contribute to a given prediction.
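To make feature importance scoring and counterfactual explanations concrete, here is a minimal sketch built on a toy linear aging clock. Everything in it is an assumption made for illustration: the biomarker names, weights, reference values, and the helper functions `predict_age`, `contributions`, and `counterfactual` are hypothetical and carry no biological meaning. Real clocks are usually nonlinear, in which case attribution would need a model-agnostic method rather than the simple weight-times-deviation used here.

```python
# Hypothetical linear "aging clock". Weights and reference values are invented
# for illustration only and have no clinical validity.
WEIGHTS = {"crp_mg_l": 1.5, "hba1c_pct": 4.0, "vo2max": -0.3}
REFERENCE = {"crp_mg_l": 1.0, "hba1c_pct": 5.4, "vo2max": 40.0}
BASELINE_AGE = 50.0  # predicted age for someone exactly at the reference values


def contributions(features):
    """Years added (+) or removed (-) by each feature relative to the reference."""
    return {
        name: WEIGHTS[name] * (features[name] - REFERENCE[name])
        for name in WEIGHTS
    }


def predict_age(features):
    """Predicted biological age: baseline plus the sum of all contributions."""
    return BASELINE_AGE + sum(contributions(features).values())


def counterfactual(features, name, target_age):
    """Value of a single feature that would yield target_age, others held fixed."""
    gap = target_age - predict_age(features)
    return features[name] + gap / WEIGHTS[name]


# Hypothetical patient record.
patient = {"crp_mg_l": 3.0, "hba1c_pct": 5.9, "vo2max": 30.0}

predicted = predict_age(patient)
per_feature = contributions(patient)
needed_vo2max = counterfactual(patient, "vo2max", target_age=55.0)

# Rank features by the size of their effect on this one prediction.
for name, years in sorted(per_feature.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name}: {years:+.1f} years")
print(f"predicted biological age: {predicted:.1f}")
print(f"vo2max needed for a predicted age of 55: {needed_vo2max:.1f}")
```

The per-feature breakdown answers "why this prediction?", while the counterfactual answers "what would have to change?". Both are exact here only because the model is linear.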

The healthcare industry faces mounting pressure to ensure that AI systems used in longevity medicine can be audited, validated, and understood by human practitioners. Without interpretability standards, physicians may be reluctant to trust algorithmic recommendations, particularly when these contradict clinical intuition or suggest novel therapeutic approaches. Research suggests that unexplainable AI predictions can lead to diagnostic errors, inappropriate treatment escalation, or missed opportunities for early intervention. The standards address regulatory concerns by providing frameworks for documenting model decision pathways, which lets healthcare providers identify when an algorithm may be relying on spurious correlations or biased training data. Industry analysts note that interpretability requirements also make collaboration between AI developers and domain experts more effective, because biological researchers can check whether a model is capturing genuine aging mechanisms or merely statistical artifacts. This transparency is essential for regulatory approval and clinical adoption, particularly as longevity interventions move from research settings into mainstream medical practice.

Early implementations of these standards are emerging in research institutions that develop aging clocks and biomarker panels, where investigators are beginning to build explainability techniques into their model architectures from the outset. Some commercial longevity platforms are adopting layered approaches that pair a high-performance prediction model with an interpretable companion system that translates its outputs into clinically meaningful insights. The standards typically require documentation of which biological pathways, epigenetic markers, or physiological measurements most strongly influence predictions, along with confidence intervals and uncertainty quantification. As personalized longevity medicine becomes more prevalent, these requirements will likely expand beyond individual predictions to population-level fairness assessments, ensuring that aging models perform equitably across diverse demographic groups. The trajectory points toward a future in which AI-driven longevity tools must demonstrate both predictive power and transparent reasoning. That combination creates the trust needed for widespread clinical adoption and lets patients make informed decisions about their healthspan interventions.
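The uncertainty and fairness requirements described above can be sketched in a few lines. The example below is one possible approach, not a method prescribed by the standards: it uses a split conformal interval as the uncertainty estimate and per-group mean absolute error as the fairness check. The validation records, group labels, and function names (`conformal_halfwidth`, `mae_by_group`) are all hypothetical.

```python
import math

# Hypothetical held-out validation records:
# (demographic group, chronological age, age predicted by the model).
records = [
    ("A", 60, 62), ("A", 45, 44), ("A", 70, 73), ("A", 52, 52), ("A", 38, 40),
    ("B", 61, 66), ("B", 47, 43), ("B", 72, 78), ("B", 55, 52), ("B", 40, 47),
]


def conformal_halfwidth(abs_residuals, alpha):
    """Split conformal half-width: the ceil((n + 1)(1 - alpha))-th smallest
    absolute residual. Returns infinity when the sample is too small."""
    n = len(abs_residuals)
    rank = math.ceil((n + 1) * (1 - alpha))
    if rank > n:
        return math.inf
    return sorted(abs_residuals)[rank - 1]


def mae_by_group(rows):
    """Mean absolute prediction error for each demographic group."""
    errors = {}
    for group, actual, predicted in rows:
        errors.setdefault(group, []).append(abs(predicted - actual))
    return {group: sum(errs) / len(errs) for group, errs in errors.items()}


# Uncertainty: an interval around a new prediction with roughly 80% coverage,
# assuming the new patient is exchangeable with the validation set.
abs_residuals = [abs(predicted - actual) for _, actual, predicted in records]
halfwidth = conformal_halfwidth(abs_residuals, alpha=0.2)
new_prediction = 58.0
interval = (new_prediction - halfwidth, new_prediction + halfwidth)

# Fairness: compare error across groups and report the largest gap.
group_mae = mae_by_group(records)
mae_gap = max(group_mae.values()) - min(group_mae.values())

print(f"prediction {new_prediction} with interval {interval}")
print(f"MAE by group: {group_mae}, gap: {mae_gap:.1f} years")
```

A large gap between groups, as in this invented data, is the kind of signal a population-level fairness assessment would flag for review. Note that a pooled interval like this one can still under-cover the group with the larger errors, which is one reason to report both measures together.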

Related Organizations

Non-profit organization co-founded by Steve Horvath to advance epigenetic age research and validate clock algorithms.

US federal agency that sets standards for technology and runs evaluations such as the Face Recognition Vendor Test (FRVT).

A clinical-stage biotechnology company using generative AI for end-to-end drug discovery and research.

Epigenetic testing company focused on aging algorithms.

The executive branch of the EU, responsible for the AI Act.

The regulatory body convening advisory committees to discuss the safety, efficacy, and ethics of artificial womb technology (EXTEND).

Consumer health company focused on aging research and supplements.

A company commercializing epigenetic biomarkers for the life insurance industry.

A global advocacy organization dedicated to the advancement of AI in healthcare.