Algorithmic Transparency & Explainability

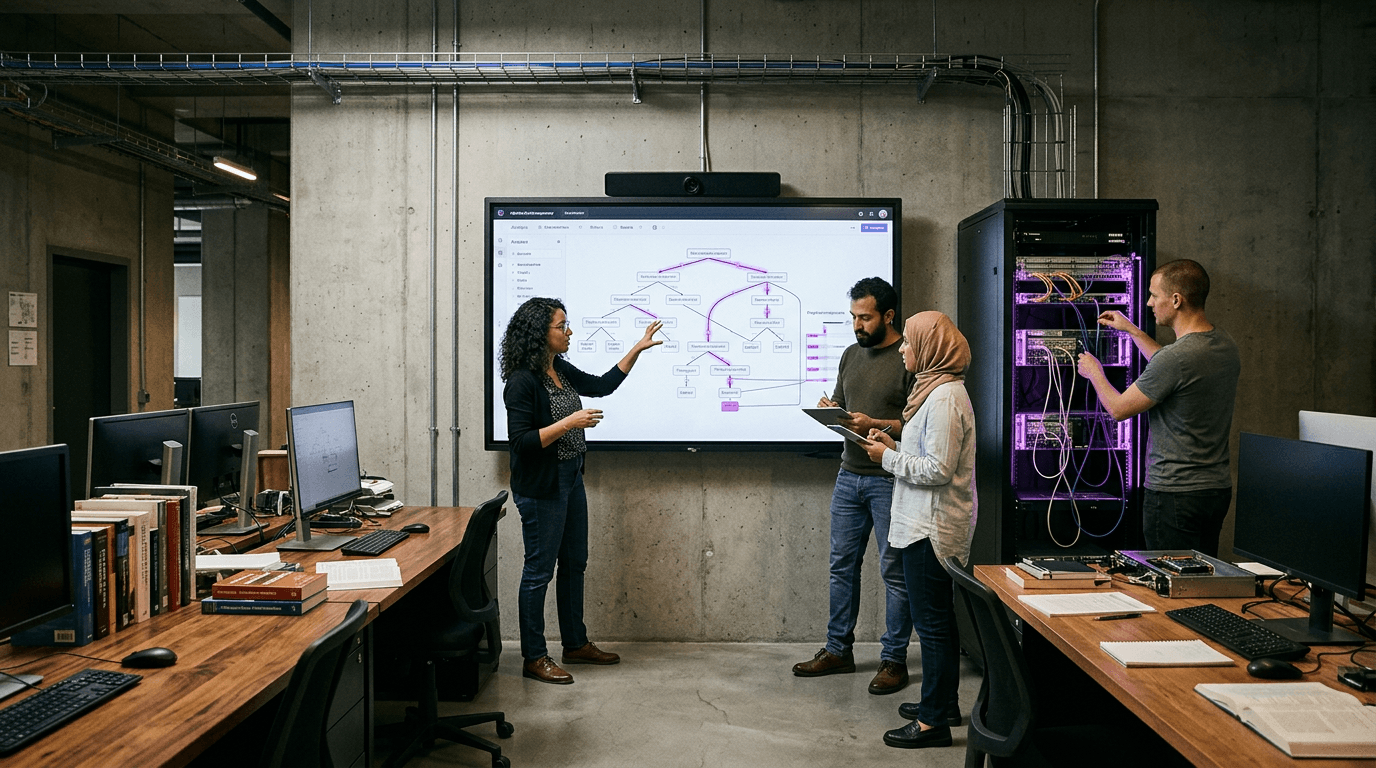

As governments increasingly deploy automated systems to make consequential decisions about citizens' lives, from determining welfare eligibility to prioritising emergency service responses, a fundamental tension has emerged between computational efficiency and democratic accountability. Traditional bureaucratic processes, while often slow, offered clear decision pathways and human points of contact for contestation. Algorithmic systems, by contrast, can process thousands of cases per second but frequently operate as inscrutable 'black boxes' in which neither affected citizens nor oversight bodies can meaningfully understand how decisions are reached.

Algorithmic transparency and explainability addresses this democratic deficit by establishing technical and procedural frameworks that make automated civic decision-making inspectable, contestable, and accountable. At its core, this approach combines three layers of disclosure: user-facing explanations that communicate in plain language why a particular decision was made, auditor-facing technical documentation that reveals the underlying logic and data sources, and reproducible testing environments in which independent researchers can verify system behaviour. These mechanisms work together to create what scholars call 'meaningful transparency': not merely publishing source code or model weights, but providing information suited to each stakeholder's role so that each can exercise effective oversight.

The practical implementation of these frameworks addresses several critical governance challenges. When a citizen is denied housing assistance or flagged by a predictive policing algorithm, they face not only the immediate consequence but also the inability to understand or challenge the basis for that determination. Research suggests this opacity disproportionately affects marginalised communities, who may lack the resources to navigate such systems. Transparency mechanisms create structured appeal pathways through which individuals can request explanations, access the data used in their case, and contest errors or biases. For government auditors and civil society watchdogs, these mechanisms enable systematic examination of whether algorithms are functioning as intended and whether they reproduce or amplify existing inequities. This includes the ability to conduct 'algorithmic audits': controlled tests that probe for discriminatory patterns across protected characteristics such as race, gender, or disability status. By making the decision-making process legible to multiple audiences, these frameworks help prevent the concentration of unaccountable power in technical systems.
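One simple form of the algorithmic audit described above compares how often each group receives a favourable outcome. The following is a minimal sketch, not a complete audit methodology: the record fields (`group`, `approved`), the function names, and the decision data are all invented for illustration, and the 0.8 cut-off borrows the "four-fifths" rule of thumb from U.S. employment-selection guidance purely as an example threshold.

```python
from collections import defaultdict


def selection_rates(records, group_key="group", outcome_key="approved"):
    """Return the share of favourable outcomes for each group."""
    totals = defaultdict(int)
    favourable = defaultdict(int)
    for record in records:
        group = record[group_key]
        totals[group] += 1
        if record[outcome_key]:
            favourable[group] += 1
    return {group: favourable[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest group selection rate to the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        # No group received a favourable outcome, so the rates do not differ.
        return 1.0
    return min(rates.values()) / highest


# Made-up decisions for illustration only; this is not real data.
decisions = (
    [{"group": "A", "approved": True}] * 8
    + [{"group": "A", "approved": False}] * 2
    + [{"group": "B", "approved": True}] * 5
    + [{"group": "B", "approved": False}] * 5
)

rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)

# Illustrative threshold (assumption): flag the system for closer review
# when the ratio falls below four-fifths.
flagged = ratio < 0.8

print(rates, round(ratio, 3), flagged)
```

A low ratio is a prompt for closer review rather than proof of discrimination; a real audit would also need to consider sample sizes, legitimate explanatory factors, and intersecting characteristics.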

Several jurisdictions have begun implementing transparency requirements, with varying approaches to balancing disclosure against concerns about gaming or proprietary interests. The European Union's AI Act includes provisions entitling people affected by decisions based on high-risk systems to an explanation, while some U.S. municipalities have established algorithmic accountability offices tasked with reviewing automated systems before deployment. Early implementations reveal both promise and challenges: simple rule-based systems can often provide clear explanations, while complex machine learning models may require approximation techniques that trade some fidelity to the underlying model for interpretability. Looking forward, the trajectory points toward transparency becoming a baseline expectation for civic automation, much as environmental impact assessments became standard for infrastructure projects. This shift reflects a broader recognition that democratic legitimacy in the digital age requires not just effective governance, but governance whose logic and limitations citizens can meaningfully comprehend and contest.
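The point above, that simple rule-based systems can often explain themselves, can be illustrated with a short sketch. Everything here is hypothetical: the rule identifiers, the income and residency thresholds, and the applicant fields are invented for the example and do not describe any real eligibility scheme. Each rule carries both an identifier an auditor could trace to documentation and a plain-language reason for the applicant.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    rule_id: str                    # identifier an auditor can trace to documentation
    passes: Callable[[dict], bool]  # returns True when the applicant satisfies the rule
    failure_reason: str             # plain-language text shown to the applicant


# Hypothetical rules and thresholds, invented for this sketch.
RULES = [
    Rule(
        "INCOME_LIMIT",
        lambda applicant: applicant["monthly_income"] <= 2000,
        "Your reported monthly income is above the limit of 2000.",
    ),
    Rule(
        "RESIDENCY",
        lambda applicant: applicant["months_resident"] >= 12,
        "You have lived in the area for less than 12 months.",
    ),
]


def decide(applicant: dict) -> dict:
    """Evaluate every rule and report which ones the applicant failed."""
    failed = [rule for rule in RULES if not rule.passes(applicant)]
    return {
        "eligible": not failed,
        "reasons": [rule.failure_reason for rule in failed],      # user-facing
        "failed_rule_ids": [rule.rule_id for rule in failed],     # auditor-facing
    }


result = decide({"monthly_income": 2400, "months_resident": 30})
print(result)
```

Because the decision is just the set of failed rules, the explanation is exact rather than approximate. A complex machine learning model offers no such direct mapping, which is why it may need the approximation techniques mentioned above.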

Related Organizations

A policy research institute focusing on the social consequences of artificial intelligence and the concentration of power in the tech industry.

A non-profit research and advocacy organization that audits automated decision-making systems, specifically focusing on social media platforms and recommender systems in Europe.

Conducts algorithmic audits to protect fundamental rights and identify digital discrimination.

A model monitoring platform that specializes in explainability, bias detection, and performance tracking.

Provides Model Performance Management (MPM) to monitor, explain, and analyze AI models in production.

Provides an AI governance platform that helps enterprises measure and monitor the fairness and performance of their AI systems.

The UK's independent regulator for data rights, providing specific guidance on AI and data protection.

Automated testing and monitoring for AI reliability, focusing on the Japanese and global markets.

AI observability platform for monitoring data health and model performance.