Multimodal Foundation Models

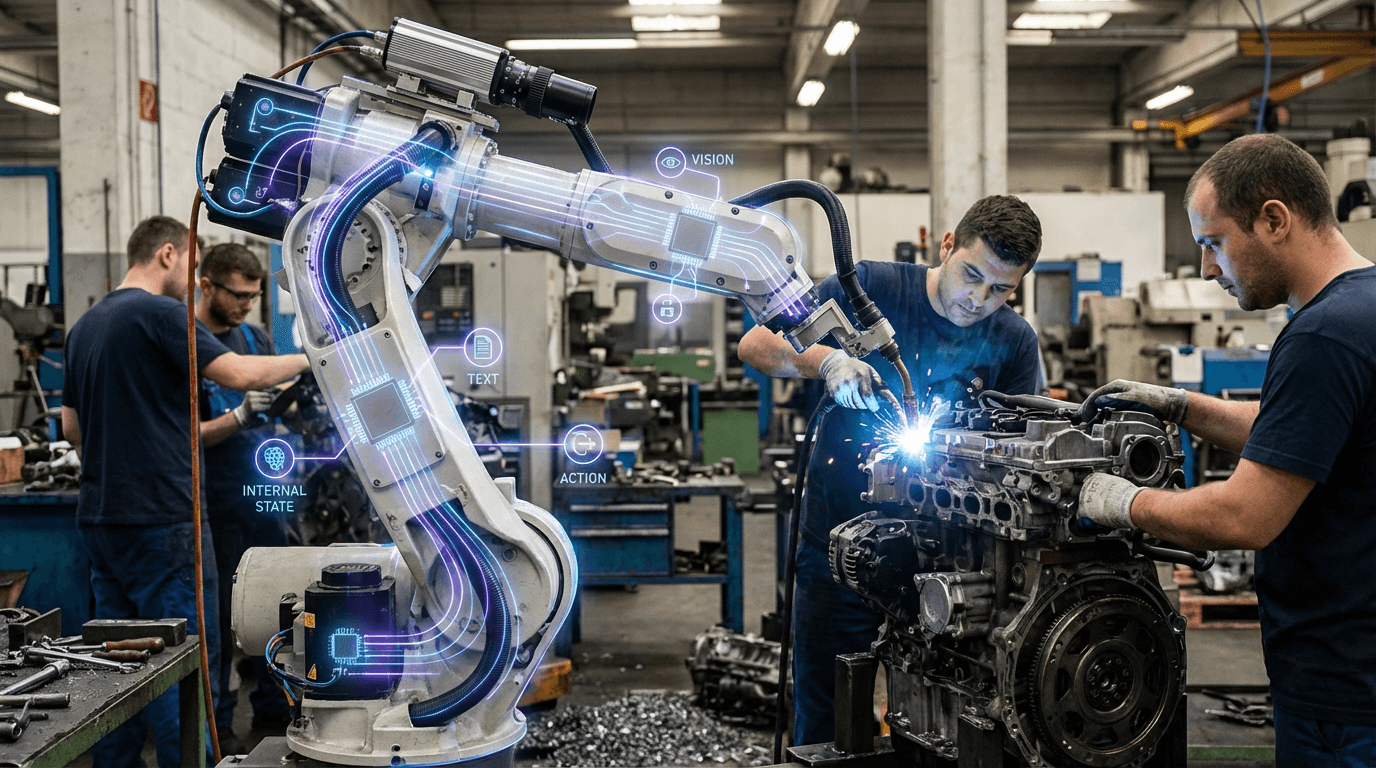

Multimodal foundation models process information across multiple modalities (text, images, audio, video, and potentially actions or internal states) within a unified representation space. They learn to map each type of input into a shared embedding space, which lets them relate modalities to one another, translate between them, and reason about concepts that span several sensory channels.
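To make the idea of a shared embedding space concrete, here is a minimal toy sketch, not how any production model is built. It assumes hand-set linear projection heads (`TEXT_HEAD`, `IMAGE_HEAD`) and made-up feature vectors; all names and numbers are hypothetical. Each modality is projected into the same 2-D space, where cosine similarity can compare a text query against images.

```python
import math

def project(matrix, features):
    """Apply a linear projection (a list of weight rows) to a feature vector."""
    return [sum(w * x for w, x in zip(row, features)) for row in matrix]

def cosine(a, b):
    """Cosine similarity between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm

# Hypothetical projection heads: text features are 3-D, image features are 4-D,
# and both are mapped into the same 2-D shared space. In a real model these
# weights would be learned, not written by hand.
TEXT_HEAD = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
IMAGE_HEAD = [[0.5, 0.5, 0.0, 0.0], [0.0, 0.0, 0.5, 0.5]]

# Made-up per-modality features (stand-ins for encoder outputs).
text_features = {"cat": [1.0, 0.1, 0.3], "car": [0.1, 1.0, 0.7]}
image_features = {
    "cat_photo": [0.9, 1.1, 0.1, 0.0],
    "car_photo": [0.0, 0.2, 1.0, 0.9],
}

text_emb = {k: project(TEXT_HEAD, v) for k, v in text_features.items()}
image_emb = {k: project(IMAGE_HEAD, v) for k, v in image_features.items()}

def retrieve(query_vec, candidates):
    """Return the name of the candidate embedding closest to the query."""
    return max(candidates, key=lambda name: cosine(query_vec, candidates[name]))

best_for_cat = retrieve(text_emb["cat"], image_emb)
best_for_car = retrieve(text_emb["car"], image_emb)
print(best_for_cat, best_for_car)
```

Because both modalities land in the same space, the same `retrieve` function would work in the other direction (image query, text candidates) without any change.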

This approach addresses a core limitation of single-modality AI systems, which process only one type of input and struggle with tasks that span modalities. With a unified representation, a model can learn that a picture of a cat, the word "cat," and the sound of meowing all refer to the same concept, which enables more sophisticated understanding and interaction. Models such as GPT-4V, Claude, and Gemini demonstrate these capabilities by processing text and images together.
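The text does not say how such unified representations are trained. One objective commonly used in published vision-language work is a contrastive loss that pulls matching image and text embeddings together and pushes mismatched ones apart. The sketch below is an illustrative InfoNCE-style version in plain Python, assuming that row `i` of each list is a matching pair; the function name, the temperature value, and the example vectors are all hypothetical.

```python
import math

def normalize(v):
    """Scale a vector to unit length."""
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v]

def contrastive_loss(image_embs, text_embs, temperature=0.1):
    """Symmetric InfoNCE-style loss; pair i in each list is assumed to match."""
    imgs = [normalize(v) for v in image_embs]
    txts = [normalize(v) for v in text_embs]
    n = len(imgs)
    # logits[i][j] = scaled cosine similarity between image i and text j.
    logits = [
        [sum(a * b for a, b in zip(imgs[i], txts[j])) / temperature for j in range(n)]
        for i in range(n)
    ]

    def cross_entropy(rows):
        # Mean negative log-probability of the diagonal (matching) entry.
        total = 0.0
        for i, row in enumerate(rows):
            m = max(row)
            log_z = m + math.log(sum(math.exp(x - m) for x in row))
            total += log_z - row[i]
        return total / len(rows)

    columns = [list(col) for col in zip(*logits)]
    # Average the image-to-text and text-to-image directions.
    return 0.5 * (cross_entropy(logits) + cross_entropy(columns))

images = [[1.0, 0.0], [0.0, 1.0]]
aligned_texts = [[0.9, 0.1], [0.1, 0.9]]   # each text sits near its image
swapped_texts = [[0.1, 0.9], [0.9, 0.1]]   # each text sits near the wrong image

loss_aligned = contrastive_loss(images, aligned_texts)
loss_swapped = contrastive_loss(images, swapped_texts)
print(loss_aligned < loss_swapped)
```

The loss is lower when paired embeddings already sit close together, so minimizing it nudges a picture of a cat and the word "cat" toward the same region of the shared space.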

Multimodal understanding is fundamental to building AI agents that interact with the real world, which inherently involves multiple sensory modalities. As AI systems grow more capable and are deployed in applications that require real-world interaction, from robotics to virtual assistants to content creation, this capability becomes essential. Research continues to explore additional modalities such as audio, video, actions, and potentially internal states or proprioception, moving toward more comprehensive world understanding.

Related Organizations

Developers of the Gemini family of models, which are trained from the start to be multimodal across text, images, video, and audio.

OpenAI

United States · Company

Creator of GPT-4o, a natively multimodal model capable of reasoning across audio, vision, and text in real-time.

Building foundation models specifically for video understanding, enabling semantic search and summarization of video content.

Developing foundation models for robotics (Project GR00T) and vision-language models like VILA.

United States · Startup

Founded by former DeepMind and Meta researchers to build state-of-the-art multimodal language models (Reka Core, Flash, Edge).

China · Startup

Founded by Kai-Fu Lee, developing the Yi series of open-source models, including Yi-VL (Vision Language).

Creators of Dream Machine, a high-quality video generation model, and 3D capture technology.

Salesforce Research

United States · Research Lab

Developers of the BLIP (Bootstrapping Language-Image Pre-training) family of models and XGen.