Artificial Intelligence

Artificial intelligence (AI) refers to systems that perform tasks commonly associated with intelligence (perception, reasoning, learning, and decision-making), often using data-driven methods such as machine learning rather than hand-coded rules. Techniques range from classical optimisation and symbolic AI to deep learning and large-scale generative models. Applications include computer vision, natural language processing, recommendation and search, autonomous systems, medical imaging and diagnosis, fraud detection, and process automation. Leading developers include OpenAI, Google, Microsoft, IBM, and Nvidia, and their models and tools are increasingly embedded in enterprise and consumer products.

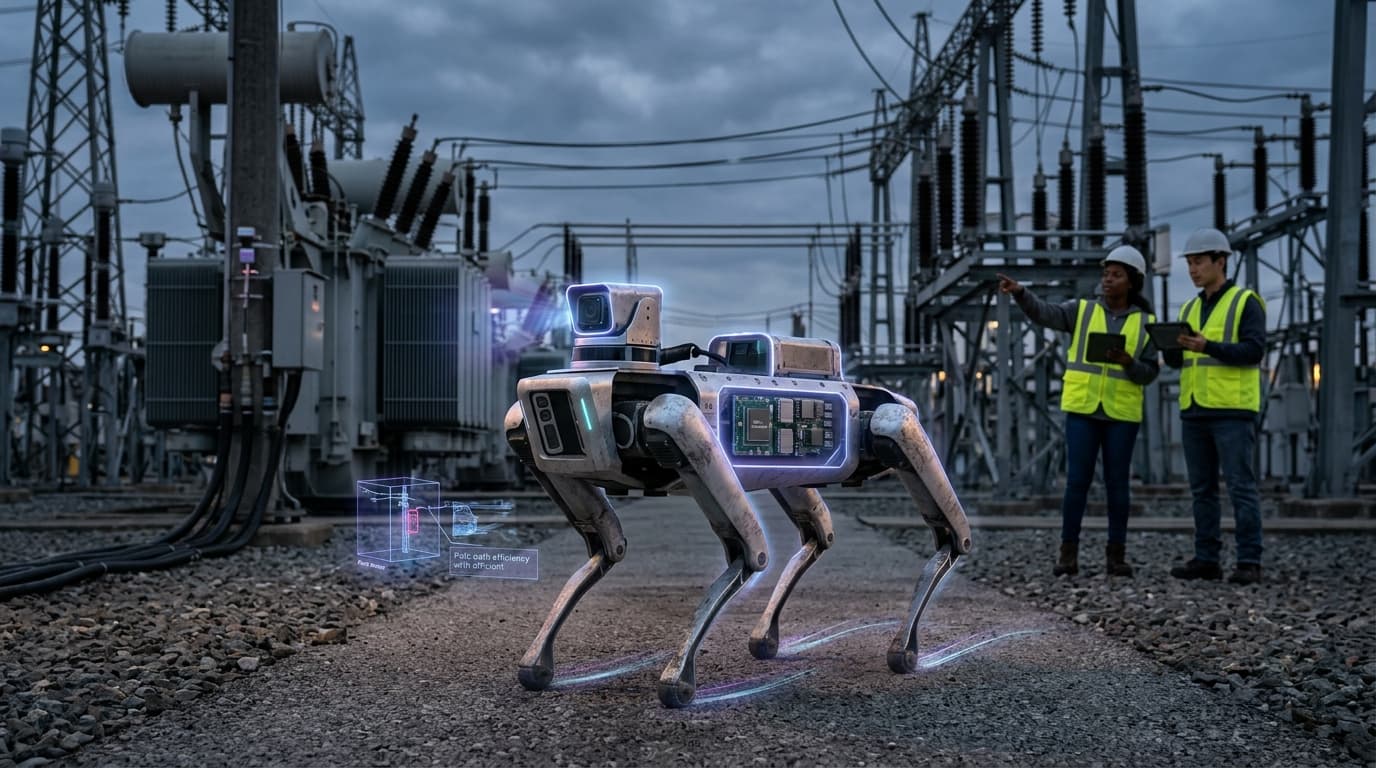

AI is used to automate cognitive and perceptual work and to extract insight from large datasets. It is integrated into critical infrastructure (energy, transport, finance), public services, and everyday tools (assistants, search, content creation). Benefits include efficiency gains, personalisation, and new capabilities in science and engineering. At the same time, deployment raises several concerns: bias and unfairness when systems are trained on historical data, job displacement and wider effects on labour, safety and alignment when systems act autonomously, and misuse (e.g. disinformation, surveillance). The ethics and politics of AI are a source of tension between companies, regulators, and civil society.

Regulation is advancing in several jurisdictions (for example, the EU AI Act and sector-specific rules), and standards for safety, transparency, and accountability are under development. Semiconductor supply chains and compute availability influence who can train and deploy frontier models. As AI becomes more deeply embedded in organisational and societal processes, the focus is shifting from capability alone to governance, assurance, and equitable access.