Explainable AI for Administrative Decisions

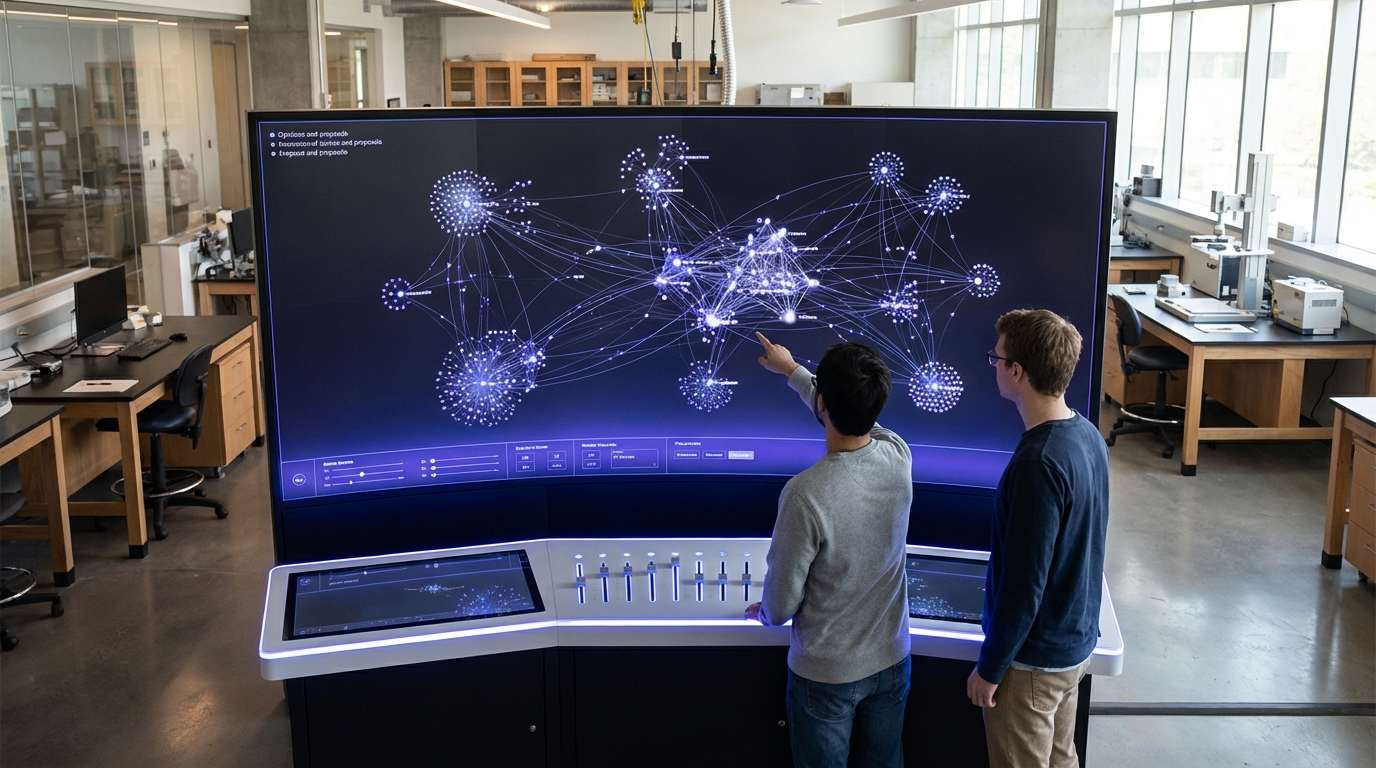

Government agencies worldwide face mounting pressure to modernize administrative processes while maintaining public trust and accountability. Traditional rule-based systems struggle with the complexity of modern policy frameworks, yet black-box AI models raise serious concerns about fairness, bias, and due process. When an algorithm denies a business permit, determines welfare eligibility, or flags a citizen for additional scrutiny, the affected individuals have a fundamental right to understand why. Explainable AI for administrative decisions addresses this tension by enabling agencies to use advanced machine learning while preserving transparency and accountability. These systems employ techniques such as decision trees whose logic reads as explicit if-then rules, attention mechanisms that highlight which inputs most influenced a model's output, and counterfactual explanations that show what would need to change for a different outcome. Where an opaque neural network exposes only its inputs and its final output, an explainable architecture produces a structured reasoning chain that maps inputs to outputs through interpretable intermediate steps. Administrators and citizens can then trace how specific factors, such as income levels, zoning requirements, or compliance history, contributed to a final determination.
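To make "human-readable logic" concrete, here is a minimal sketch of a hand-built decision tree that returns both a decision and the list of tests it applied along the way. The permit scenario, the feature names (`open_zoning_violations`, `prior_compliance_failures`), the thresholds, and the helper names (`Leaf`, `Split`, `decide`) are all hypothetical; a real system would codify or learn its rules from actual policy.

```python
from dataclasses import dataclass
from typing import Union

@dataclass
class Leaf:
    outcome: str  # final determination, e.g. "approve" or "deny"

@dataclass
class Split:
    feature: str      # applicant attribute tested at this node
    threshold: float  # branch left if value <= threshold, else right
    left: "Node"
    right: "Node"

Node = Union[Leaf, Split]

def decide(node: Node, applicant: dict[str, float]) -> tuple[str, list[str]]:
    """Walk the tree, recording each test in plain language; return (outcome, path)."""
    path: list[str] = []
    while isinstance(node, Split):
        value = applicant[node.feature]
        if value <= node.threshold:
            path.append(f"{node.feature} = {value} is at most {node.threshold}")
            node = node.left
        else:
            path.append(f"{node.feature} = {value} exceeds {node.threshold}")
            node = node.right
    return node.outcome, path

# Hypothetical business-permit rules; features and thresholds are illustrative only.
PERMIT_TREE = Split(
    feature="open_zoning_violations", threshold=0,
    left=Split(
        feature="prior_compliance_failures", threshold=1,
        left=Leaf("approve"),
        right=Leaf("refer to human reviewer"),
    ),
    right=Leaf("deny"),
)

outcome, path = decide(PERMIT_TREE, {"open_zoning_violations": 0, "prior_compliance_failures": 3})
print(outcome)  # prints: refer to human reviewer
for step in path:
    print(" -", step)
```

Because every branch records the test it applied, the returned path doubles as the explanation: this applicant passed the zoning check and was routed to a reviewer because of the compliance-history test. Sending borderline cases to a human rather than denying them outright is one illustrative design choice, not a prescribed one.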

The adoption of explainable AI in public administration addresses several interconnected challenges that have long affected government decision-making. Manual processing of applications and assessments creates bottlenecks, inconsistencies, and delays that frustrate citizens and strain agency resources. Meanwhile, early attempts to automate these processes with conventional AI have sparked controversies over algorithmic bias and a lack of recourse for affected individuals. Explainable AI systems combine efficiency with accountability: they can process thousands of cases quickly while recording the rationale behind each decision in a form that supports legal requirements for administrative review. This capability is particularly valuable in high-stakes domains such as immigration status determinations, tax audits, and social service allocations, where errors can have profound consequences for individuals and families. These systems also let regulators and oversight bodies audit decision patterns at scale, for example by comparing outcome rates across regions or applicant groups, which can reveal systemic biases or policy inconsistencies that would be nearly impossible to detect through manual case review. By making AI reasoning transparent, governments can also build public confidence in automated systems and show that the technology is meant to enhance, not replace, human judgment in matters of civic importance.
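Auditing decision patterns at scale can start with something as simple as comparing approval rates across groups. The sketch below assumes a hypothetical decision log of `(group, approved)` pairs and flags any group whose approval rate falls below 80% of the highest group's rate. That cutoff borrows the "four-fifths" rule of thumb from US employment-selection guidelines; it is used here only as an illustrative screening heuristic, not as a legal standard for administrative decisions, and a flag is a prompt for human review rather than proof of bias. The function names are hypothetical.

```python
from collections import defaultdict

def approval_rates(decisions):
    """decisions: iterable of (group, approved: bool). Returns {group: approval rate}."""
    counts = defaultdict(lambda: [0, 0])  # group -> [approved count, total count]
    for group, approved in decisions:
        counts[group][0] += int(approved)
        counts[group][1] += 1
    return {g: approved / total for g, (approved, total) in counts.items()}

def flag_disparities(rates, threshold=0.8):
    """Return groups whose rate is below `threshold` times the highest group's rate."""
    best = max(rates.values())
    return sorted(g for g, r in rates.items() if best > 0 and r / best < threshold)

# Hypothetical log: outcomes of 10 applications in each of three districts.
log = (
    [("district_a", True)] * 8 + [("district_a", False)] * 2
    + [("district_b", True)] * 5 + [("district_b", False)] * 5
    + [("district_c", True)] * 7 + [("district_c", False)] * 3
)
rates = approval_rates(log)
print(rates)                    # {'district_a': 0.8, 'district_b': 0.5, 'district_c': 0.7}
print(flag_disparities(rates))  # ['district_b']
```

Here `district_b` is flagged because 0.5 / 0.8 = 0.625 is below the cutoff, while `district_c` (0.7 / 0.8 = 0.875) is not. The grouping key could be any attribute an oversight body chooses to monitor.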

Regulatory frameworks are increasingly mandating explainability in government AI systems, with the European Union's AI Act establishing strict transparency requirements for high-risk applications in public administration. Several jurisdictions have begun piloting explainable AI for specific administrative functions, with early implementations focusing on areas like building permit reviews, business license approvals, and eligibility screening for public benefits programs. These deployments typically generate explanation documents alongside decisions, detailing which criteria were evaluated, how evidence was weighted, and what alternative outcomes might have resulted from different circumstances. Some systems also provide interactive interfaces where applicants can explore hypothetical scenarios to understand decision boundaries. As these technologies mature, they are expected to expand into more complex domains such as urban planning approvals, environmental impact assessments, and regulatory compliance monitoring. The trajectory points toward a future where algorithmic governance becomes both more prevalent and more accountable, with explainability serving as a foundational requirement rather than an optional feature. This evolution aligns with broader movements toward digital government transformation and participatory democracy, where citizens expect not only efficient services but also meaningful insight into how institutions make decisions that affect their lives.
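An explanation document of the kind described in the paragraph above (criteria evaluated, how evidence was weighted, and what might have changed the outcome) can be sketched for the simplest case, an additive scoring rule. In this sketch each criterion's contribution is its weight times the applicant's value, and for a denied applicant the counterfactual for each actionable feature is the value that would lift the score exactly to the threshold with all other features held fixed. The feature names, weights, bias, threshold, and the `explain` helper are invented for illustration, and the sketch ignores real-world bounds such as savings being non-negative.

```python
from dataclasses import dataclass

# Hypothetical additive eligibility rule; every number here is illustrative.
WEIGHTS = {"monthly_income_k": -2.0, "savings_k": -0.5, "dependents": 1.0}
BIAS = 6.0
THRESHOLD = 0.0  # eligible when score >= THRESHOLD

@dataclass
class Explanation:
    eligible: bool
    score: float
    contributions: dict[str, float]    # how much each criterion moved the score
    counterfactuals: dict[str, float]  # value a feature would need, others held fixed

def explain(applicant: dict[str, float], actionable: set[str]) -> Explanation:
    contributions = {f: w * applicant[f] for f, w in WEIGHTS.items()}
    score = BIAS + sum(contributions.values())
    eligible = score >= THRESHOLD
    counterfactuals: dict[str, float] = {}
    if not eligible:
        gap = THRESHOLD - score
        for f in sorted(actionable):
            w = WEIGHTS[f]
            if w != 0:
                # For a linear score, changing only f by gap / w reaches the threshold exactly.
                counterfactuals[f] = applicant[f] + gap / w
    return Explanation(eligible, score, contributions, counterfactuals)

result = explain(
    {"monthly_income_k": 3.0, "savings_k": 4.0, "dependents": 1},
    actionable={"monthly_income_k", "savings_k"},
)
print(result.eligible, result.score)  # False -1.0
for criterion, value in result.contributions.items():
    print(f"{criterion}: {value:+.1f}")
for criterion, target in result.counterfactuals.items():
    print(f"would be eligible if {criterion} were {target}")
```

Restricting counterfactuals to an `actionable` set matters in practice: telling an applicant they would qualify with more dependents is not meaningful recourse, so this example reports only the income (2.5) and savings (2.0) values that would have changed the outcome.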

Related Organizations

An applied AI company that works closely with the UK government on AI safety and implementation.

A policy research institute focusing on the social consequences of artificial intelligence and the concentration of power in the tech industry.

A model monitoring and observability platform that includes specific tools for evaluating LLM accuracy and hallucination.

Provides Model Performance Management (MPM) to monitor, explain, and analyze AI models in production.

A non-profit research and advocacy organization that audits automated decision-making systems, specifically focusing on social media platforms and recommender systems in Europe.

Provides an AI governance platform that helps enterprises measure and monitor the fairness and performance of their AI systems.

Provides Driverless AI, an AutoML platform that includes architecture search and hyperparameter tuning.

Enterprise AI software provider with a dedicated suite for predictive maintenance across energy, defense, and manufacturing.