Multi-Language Intelligence Models

Multi-language intelligence models are a specialized class of large language models trained on extensive multilingual datasets spanning hundreds of languages, dialects, and cultural contexts. Unlike general-purpose language models, these systems are optimized for intelligence applications, incorporating domain-specific training on geopolitical terminology, regional idioms, and culturally nuanced communication patterns. The technical architecture typically involves transformer-based neural networks trained on parallel corpora that include not only major world languages but also low-resource languages critical to intelligence operations. These models employ tokenization strategies that can handle diverse writing systems, from Latin and Cyrillic alphabets to logographic scripts such as Chinese and abjads such as Arabic, while maintaining semantic coherence across language boundaries. The training process integrates cultural context embeddings that capture region-specific references, historical events, and social dynamics, enabling the models to interpret meaning beyond literal translation.
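To make the tokenization point concrete, the following is a minimal sketch and not the method of any particular model. It assumes a coarse script heuristic based on Unicode character names (the helper names `script_of`, `script_histogram`, and `byte_tokens` are hypothetical) and shows a byte-level fallback, one common way to guarantee that text in any writing system can be represented without an "unknown token".

```python
import unicodedata
from collections import Counter
from typing import List

# Hypothetical prefix-to-label table; real systems use proper script data.
_SCRIPT_PREFIXES = (
    ("LATIN", "Latin"),
    ("CYRILLIC", "Cyrillic"),
    ("ARABIC", "Arabic"),
    ("CJK", "Han"),
)

def script_of(ch: str) -> str:
    """Return a coarse script label derived from the Unicode character name."""
    name = unicodedata.name(ch, "")
    for prefix, label in _SCRIPT_PREFIXES:
        if name.startswith(prefix):
            return label
    return "Other"

def script_histogram(text: str) -> Counter:
    """Count alphabetic characters per script, ignoring digits and punctuation."""
    return Counter(script_of(ch) for ch in text if ch.isalpha())

def byte_tokens(text: str) -> List[int]:
    """Byte-level fallback: every string maps to UTF-8 byte IDs in 0..255."""
    return list(text.encode("utf-8"))

if __name__ == "__main__":
    sample = "Привет hello 中文 سلام"
    print(script_histogram(sample))
    # Non-Latin scripts cost more bytes per character than ASCII text.
    for word in sample.split():
        print(word, len(word), "chars ->", len(byte_tokens(word)), "bytes")
```

The byte counts illustrate one reason production tokenizers learn subword vocabularies on top of bytes: without them, non-Latin text consumes several times more tokens per character than ASCII text.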

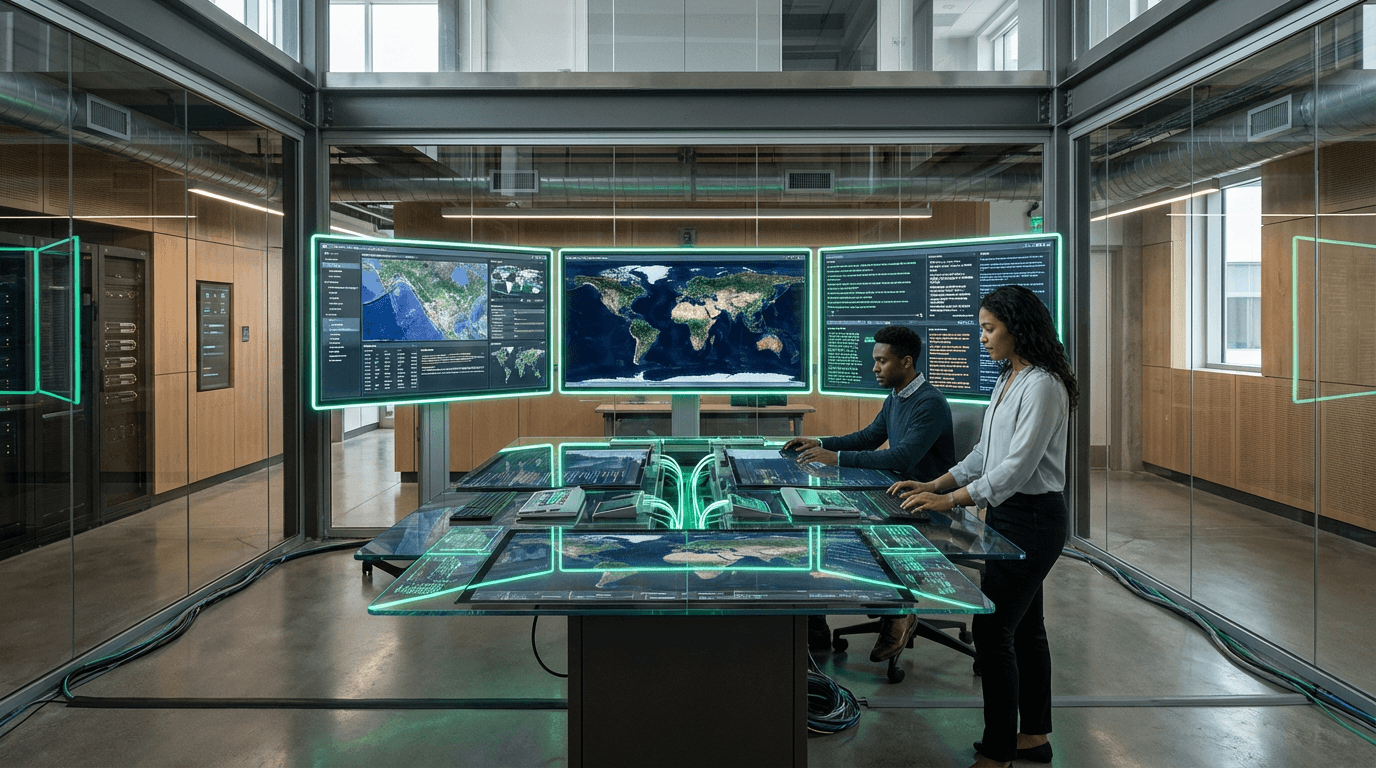

The strategic imperative driving these systems stems from the intelligence community's need for linguistic sovereignty and operational speed in an increasingly multipolar world. Traditional reliance on human translators or third-party translation services introduces delays, security vulnerabilities, and potential biases that can compromise time-sensitive intelligence operations. Multi-language intelligence models address these challenges by providing near-instantaneous processing of open-source intelligence (OSINT) from social media platforms, news outlets, and public communications across linguistic boundaries. This capability proves particularly valuable when monitoring emerging crises, tracking disinformation campaigns, or analyzing sentiment shifts in regions where linguistic expertise may be scarce. Furthermore, these models reduce dependency on foreign contractors or allied nations for translation services, mitigating risks associated with information sharing and maintaining operational security. The technology enables intelligence agencies to conduct comprehensive surveillance of global information flows without the bottlenecks inherent in human translation workflows.

Current deployments of multi-language intelligence models remain largely classified, though research institutions and defense contractors have demonstrated proof-of-concept systems capable of processing dozens of languages simultaneously. Intelligence agencies are integrating these models into OSINT analysis pipelines, where they assist analysts in identifying relevant information from vast streams of multilingual data, flagging potential threats, and tracking narratives across linguistic communities. The technology shows particular promise in counter-terrorism operations, where extremist communications often occur in regional dialects or code-switched speech that challenges conventional translation tools. As geopolitical competition intensifies and information warfare becomes increasingly sophisticated, the ability to comprehend and analyze communications in any language represents a critical asymmetric advantage. Future developments will likely focus on improving performance in low-resource languages and enhancing cultural context awareness. A further focus will likely be integration with other intelligence technologies, such as network analysis and predictive analytics, to create situational awareness systems that transcend linguistic barriers.
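The flagging stage of such a pipeline can be sketched in a few lines. This is an illustrative toy under stated assumptions, not a description of any deployed system: the `Document` and `Flag` types, the `flag_documents` function, and the per-language term lists are all hypothetical, the language code is assumed to be supplied by an upstream identification step, and simple substring matching stands in for what would in practice be a multilingual model.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterable, List

@dataclass
class Document:
    doc_id: str
    lang: str   # language code, assumed to come from an upstream step
    text: str

@dataclass
class Flag:
    doc_id: str
    lang: str
    matched_terms: List[str] = field(default_factory=list)

def flag_documents(docs: Iterable[Document],
                   watch_terms: Dict[str, List[str]]) -> List[Flag]:
    """Flag documents containing any analyst-supplied term for their language.

    Substring matching is a placeholder for a multilingual classifier.
    """
    flags: List[Flag] = []
    for doc in docs:
        lowered = doc.text.casefold()
        hits = [t for t in watch_terms.get(doc.lang, [])
                if t.casefold() in lowered]
        if hits:
            flags.append(Flag(doc.doc_id, doc.lang, hits))
    return flags

if __name__ == "__main__":
    # Hypothetical crisis-monitoring example with public-style posts.
    docs = [
        Document("d1", "en", "Flood warning issued for the coast"),
        Document("d2", "es", "Alerta de inundación en la costa"),
        Document("d3", "fr", "Beau temps sur le littoral"),
    ]
    terms = {"en": ["flood"], "es": ["inundación"]}
    for f in flag_documents(docs, terms):
        print(f.doc_id, f.lang, f.matched_terms)
```

Keeping the term lists keyed by language makes the limitation of keyword approaches visible: dialects and code-switched text fall outside any single list, which is the gap the models described above are meant to close.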

Related Organizations

United Arab Emirates · University

A graduate-level research university that co-developed 'Jais', an open Arabic large language model described at its release as the highest quality of its kind.

The global hub for open-source AI models and datasets. Founded by French entrepreneurs with a major office in Paris.

Enterprise AI platform focusing on secure and aligned language models.

Provides advanced data analytics and multilingual search capabilities for open-source intelligence (OSINT) to government agencies.

South Korean tech giant developing HyperCLOVA, a massive Korean-centric LLM.

A grassroots NLP research community for Africa, building datasets and models for African languages often ignored by big tech.

An Indian startup building foundation models specifically designed for India's diverse linguistic landscape (Hindi, Tamil, etc.).

The Swedish national center for applied AI, which developed GPT-SW3, a large language model built for the Nordic languages.