Algorithmic Transparency Dashboards

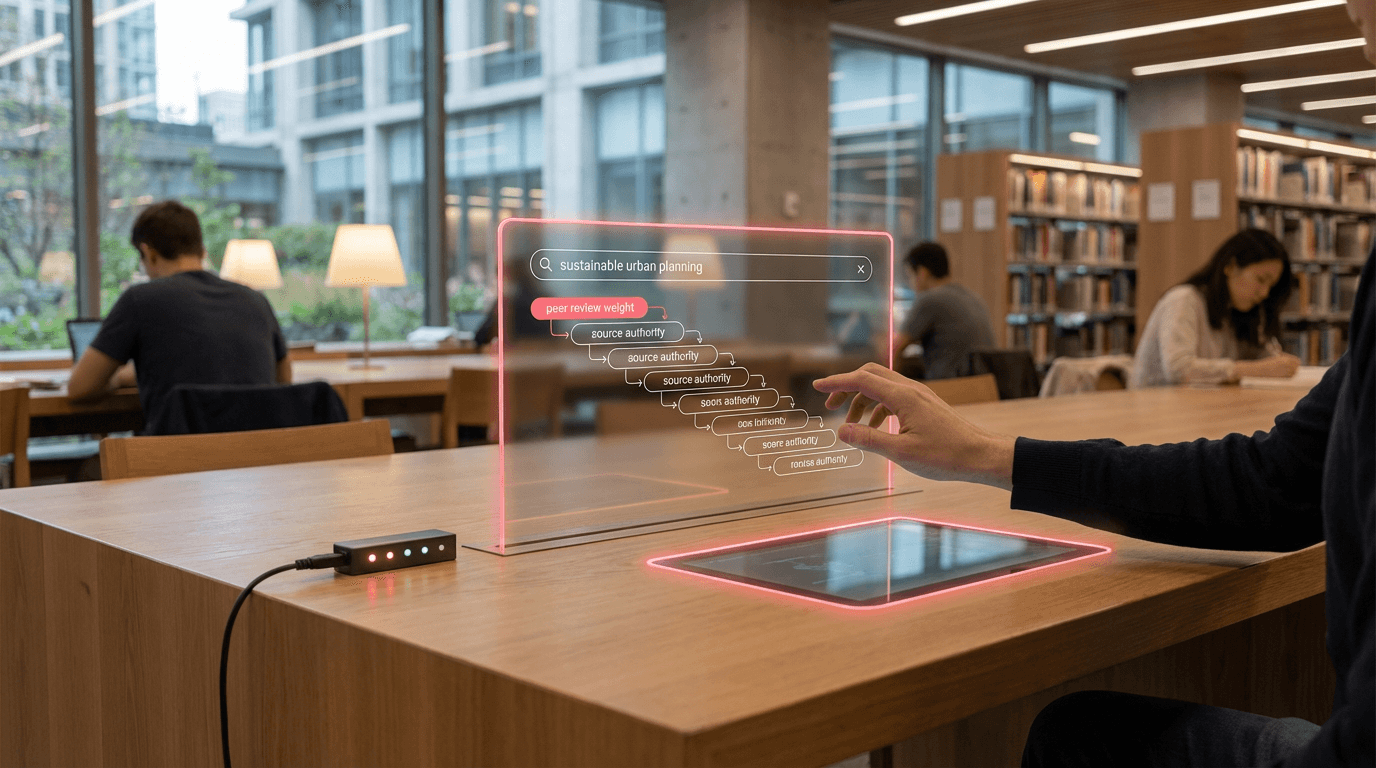

In digital libraries, archives, and knowledge repositories, users increasingly encounter search and recommendation systems powered by opaque algorithms that determine which resources surface first and which remain buried. Algorithmic transparency dashboards address a fundamental challenge in information discovery: the black-box nature of ranking systems that shape what knowledge becomes visible and accessible. These user-facing interfaces expose the logic behind search and recommendation algorithms. They reveal the specific factors that determine why certain materials appear at the top of results, such as citation frequency, recency, semantic similarity, user engagement metrics, or institutional prominence. Rather than presenting rankings as neutral or inevitable, these dashboards decompose each algorithmic decision into comprehensible components. They often do this through visual representations such as weighted sliders, influence diagrams, or factor breakdowns that show how much each ranking criterion contributes to a document's final position.
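The factor breakdown described above can be sketched in Python under a simplifying assumption: the ranking score is a weighted sum of factors that have already been normalized to [0, 1]. Production discovery systems often use learned or non-linear models whose contributions are harder to decompose, so this is an illustration only. The factor names, the weights, and the `Document`, `explain_score`, and `format_breakdown` names are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical ranking weights; a dashboard slider would edit these values.
# Assumes every factor value is pre-normalized to the range [0, 1].
DEFAULT_WEIGHTS: dict[str, float] = {
    "semantic_similarity": 0.5,
    "citation_frequency": 0.2,
    "recency": 0.2,
    "user_engagement": 0.1,
}


@dataclass
class Document:
    title: str
    factors: dict[str, float] = field(default_factory=dict)


def explain_score(doc: Document, weights: dict[str, float]) -> tuple[float, dict[str, float]]:
    """Return the total score and each factor's weighted contribution to it."""
    # Missing factors count as 0 so every weighted factor appears in the breakdown.
    contributions = {name: w * doc.factors.get(name, 0.0) for name, w in weights.items()}
    return sum(contributions.values()), contributions


def format_breakdown(doc: Document, weights: dict[str, float]) -> str:
    """Render a text version of the per-factor breakdown, largest contribution first."""
    total, contributions = explain_score(doc, weights)
    lines = [f"{doc.title}: score {total:.3f}"]
    for name, value in sorted(contributions.items(), key=lambda kv: kv[1], reverse=True):
        share = value / total if total else 0.0
        lines.append(f"  {name:<20} {value:.3f} ({share:.0%} of score)")
    return "\n".join(lines)


if __name__ == "__main__":
    doc = Document(
        "Example archival finding aid",
        {"semantic_similarity": 0.8, "citation_frequency": 0.5,
         "recency": 0.25, "user_engagement": 1.0},
    )
    print(format_breakdown(doc, DEFAULT_WEIGHTS))
```

A dashboard would typically render these contributions as bars or a stacked chart. The text output here stands in for that view. In this example, semantic similarity supplies 0.40 of the 0.65 total.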

The opacity of traditional search systems in libraries and archives creates several critical problems that transparency dashboards aim to resolve. Users often cannot tell why certain materials are prioritized over others. This diminishes trust in discovery tools and can reinforce biases already embedded in ranking algorithms. Researchers may unknowingly overlook relevant sources because they cannot see how their search terms are being interpreted or weighted. Ranking systems can also inadvertently privilege popular or recent materials while marginalizing historically significant but less-accessed resources, or favor publications from well-resourced institutions over those from smaller archives. By making these mechanisms visible, transparency dashboards restore user agency in the discovery process. Individuals can adjust ranking parameters to suit their specific research needs, for instance by prioritizing older primary sources over recent commentary, or by balancing semantic relevance against citation impact.
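The parameter adjustment described above can be illustrated by letting the user supply their own weights and re-sorting the results. This sketch uses a simple linear weighted score. It also makes a hypothetical design choice: a slider may go negative, so a negative `recency` weight actively favors older material. The documents, factor values, weight profiles, and the `score` and `rerank` functions are all illustrative assumptions.

```python
# Hypothetical result set: each entry maps factor names to values in [0, 1].
RESULTS: dict[str, dict[str, float]] = {
    "Recent commentary": {"semantic_similarity": 0.7, "citation_frequency": 0.6, "recency": 0.9},
    "Older primary source": {"semantic_similarity": 0.8, "citation_frequency": 0.3, "recency": 0.1},
}

# Platform default: relevance first, with a mild boost for recent items.
DEFAULT_WEIGHTS = {"semantic_similarity": 0.5, "citation_frequency": 0.3, "recency": 0.2}
# Hypothetical user profile: a historian turns the recency slider negative.
HISTORIAN_WEIGHTS = {"semantic_similarity": 0.6, "citation_frequency": 0.1, "recency": -0.3}


def score(factors: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted sum of factors; factors with no weight in `weights` are ignored."""
    return sum(weights.get(name, 0.0) * value for name, value in factors.items())


def rerank(results: dict[str, dict[str, float]], weights: dict[str, float]) -> list[str]:
    """Return result titles ordered by score under the given weights, highest first."""
    return sorted(results, key=lambda title: score(results[title], weights), reverse=True)


if __name__ == "__main__":
    for label, weights in [("default", DEFAULT_WEIGHTS), ("historian", HISTORIAN_WEIGHTS)]:
        print(label, rerank(RESULTS, weights))
```

With the default weights, the recent commentary scores 0.71 against 0.51 and ranks first. Under the historian profile, the scores become 0.21 and 0.48, so the primary source moves to the top. An interactive dashboard would re-run `rerank` whenever a slider changes.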

Early implementations of algorithmic transparency dashboards have appeared in academic search platforms and specialized digital archives. In these settings, early evidence suggests that exposing ranking logic can improve user satisfaction and research outcomes. Some platforms now offer interactive controls that let users change the weight given to each ranking factor in real time and immediately see how their results reorder. This capability can be particularly valuable in interdisciplinary research, where standard relevance algorithms may not align with domain-specific discovery needs. Beyond individual empowerment, these dashboards serve broader institutional goals of accountability and equity in knowledge access, because they let librarians, archivists, and researchers audit algorithmic systems for potential biases or gaps in coverage. As concerns about algorithmic fairness intensify across the information sector, transparency dashboards represent an important step toward more democratic and trustworthy knowledge infrastructures. They align with broader movements toward explainable AI and user-centered design in digital scholarship tools.
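The auditing use case mentioned above can start from a simple comparison. For each group of sources, compare its share of the top-k results with its share of the full ranked list. This sketch assumes each result carries an institution label, here a hypothetical `"large"`/`"small"` split. The data, labels, and the `group_shares` and `audit_top_k` functions are illustrative only.

```python
from collections import Counter
from typing import Callable, Sequence, TypeVar

T = TypeVar("T")


def group_shares(items: Sequence[T], key: Callable[[T], str]) -> dict[str, float]:
    """Fraction of items falling into each group (empty input gives an empty dict)."""
    counts = Counter(key(item) for item in items)
    total = sum(counts.values())
    return {group: n / total for group, n in counts.items()} if total else {}


def audit_top_k(ranked: Sequence[T], k: int, key: Callable[[T], str]) -> dict[str, tuple[float, float]]:
    """Map each group to (share of the top-k results, share of all ranked results)."""
    top = group_shares(ranked[:k], key)
    overall = group_shares(ranked, key)
    # Groups absent from the top k get a share of 0.0 rather than being dropped.
    return {group: (top.get(group, 0.0), share) for group, share in overall.items()}


if __name__ == "__main__":
    # Hypothetical ranked results as (title, institution size) pairs, best first.
    ranked = [
        ("A", "large"), ("B", "large"), ("C", "large"), ("D", "large"), ("E", "large"),
        ("F", "small"), ("G", "large"), ("H", "small"), ("I", "small"), ("J", "small"),
    ]
    for group, (top_share, overall_share) in audit_top_k(ranked, 5, key=lambda r: r[1]).items():
        print(f"{group}: {top_share:.0%} of top 5 vs {overall_share:.0%} of all results")
```

In this toy list, small institutions supply 40% of all results but none of the top five. A gap like this is the kind of signal an auditor would investigate. A more refined audit could weight each position with a logarithmic discount, as in DCG, instead of using a hard cutoff at k.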

Related Organizations

A non-profit research and advocacy organization that audits automated decision-making systems, specifically focusing on social media platforms and recommender systems in Europe.

A non-profit organization that advocates for a healthy internet and conducts 'Trustworthy AI' research.

A data-driven newsroom that developed 'Citizen Browser', a custom web browser designed specifically to audit how social media algorithms treat different demographics.

Conducts algorithmic audits to protect fundamental rights and identify digital discrimination.

A policy research institute focusing on the social consequences of artificial intelligence and the concentration of power in the tech industry.

A model monitoring and observability platform that includes specific tools for evaluating LLM accuracy and hallucination.

Provides Model Performance Management (MPM) to monitor, explain, and analyze AI models in production.

A platform for AI governance and transparency, helping public agencies and companies register and report on their AI systems.

Provides an AI governance platform that helps enterprises measure and monitor the fairness and performance of their AI systems.

A decentralized social network protocol that allows users to choose and inspect their own algorithmic feeds.