Economic Exploitation in Intimacy Services

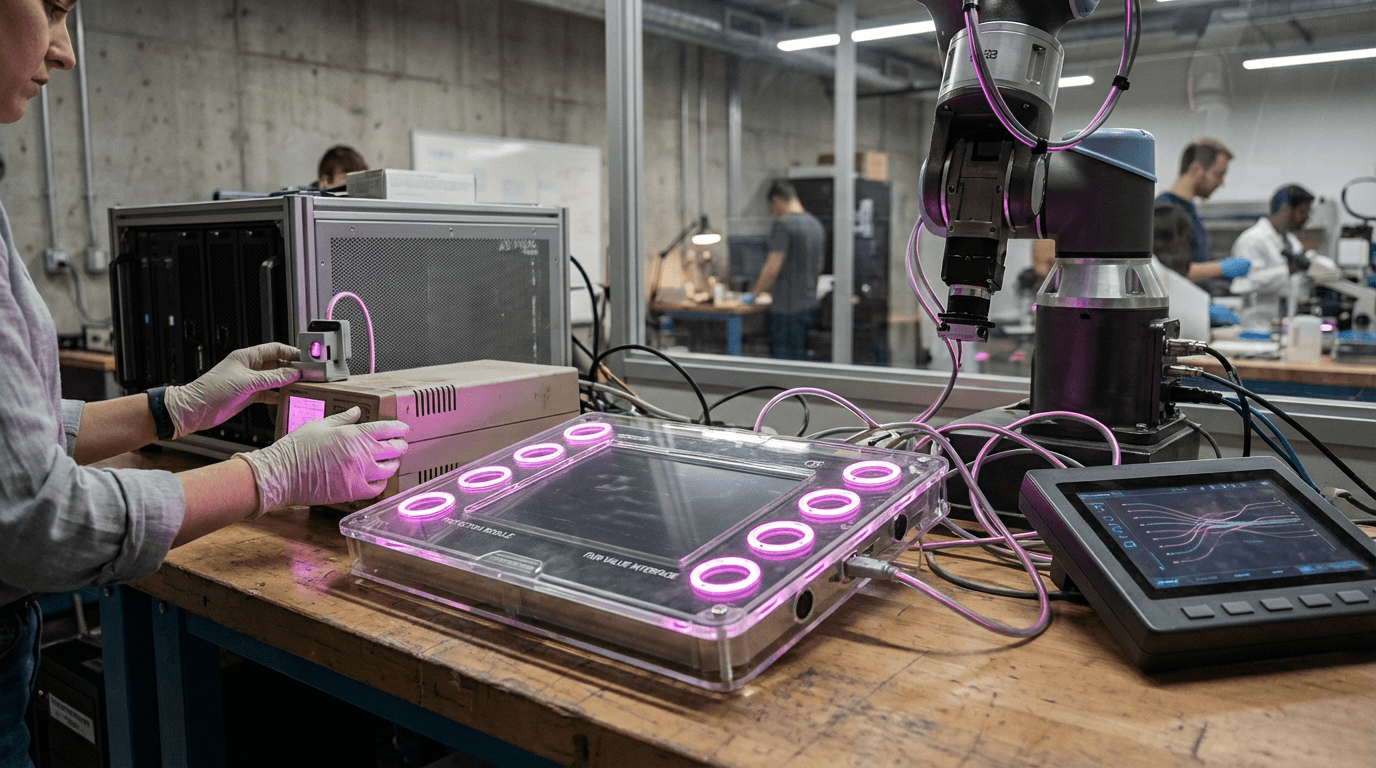

The rise of digital intimacy platforms has created a new frontier of labor exploitation that existing regulatory frameworks struggle to address. Workers in this sector (including those who train companion AI systems, moderate intimate content, provide virtual companionship services, or perform emotional labor through digital platforms) face vulnerabilities that traditional labor protections were not designed to handle. They often operate in legal gray areas where their contributions are classified as "gig work" or "user-generated content" rather than formal employment, leaving them without basic protections such as minimum wage guarantees, health benefits, or psychological support. The commodification of intimate and emotional interactions has created markets in which workers' personal vulnerabilities become extractable resources: platforms profit from workers' emotional labor while offering minimal compensation and few safeguards against psychological harm.

Regulatory frameworks addressing economic exploitation in intimacy services establish clear standards for fair compensation, working conditions, and psychological support in these emerging labor markets. These protections typically include mechanisms to prevent wage theft through transparent payment structures and minimum compensation thresholds, requirements that platforms provide mental health resources for workers exposed to traumatic or emotionally demanding content, and classifications that recognize emotional and intimate labor as legitimate work deserving of standard employment protections. Some frameworks also address the power imbalances inherent in these services by mandating consent protocols, limiting exploitative contract terms, and establishing grievance mechanisms for workers who experience harassment or coercion. By treating the commodification of vulnerability as a regulatory concern rather than a purely market-driven phenomenon, these frameworks aim to prevent platforms from extracting maximum value from workers' emotional capacities while externalizing the psychological and economic costs.

Early implementations of these protections have emerged primarily in jurisdictions with strong digital labor movements and existing frameworks for platform worker rights. Research suggests that workers in intimacy services experience higher rates of burnout, vicarious trauma, and economic precarity than workers in other digital labor sectors, which underscores the need for specialized protections. Industry analysts note that AI companion services and emotional labor platforms are projected to become multi-billion-dollar markets, and that as they expand, the absence of robust regulatory frameworks risks creating a permanent underclass of workers whose intimate and emotional capacities are systematically exploited. Forward-thinking jurisdictions are beginning to recognize that protecting workers in these sectors is not only a matter of labor rights but also essential to preventing the normalization of extractive practices that treat human vulnerability as a renewable resource to be mined for profit.

Related Organizations

An action-research project based at the Oxford Internet Institute that rates digital platforms on their labor standards.

Collective of sex workers and advocates working at the intersection of tech and labor rights.

OnlyFans

United Kingdom · Company

Subscription content platform primarily used by sex workers and intimacy creators to monetize their relationships with fans.

An AI companion app that has faced scrutiny regarding the emotional dependence of its users.

A training data company that positions itself as an 'ethical AI supply chain' provider, using an impact sourcing model.

Independent research institute studying the harms of AI, including the exploitation of data laborers.

Network representing sex workers in Europe, advocating for digital rights and protection against online exploitation.

A coalition of tech companies and nonprofits developing best practices for AI, including guidelines on human-AI interaction.

A digital outsourcing company focusing on content moderation and customer experience.

A worker-run organization and browser extension that allows Amazon Mechanical Turk workers to rate requesters and organize for better conditions.