Autonomous Vehicle

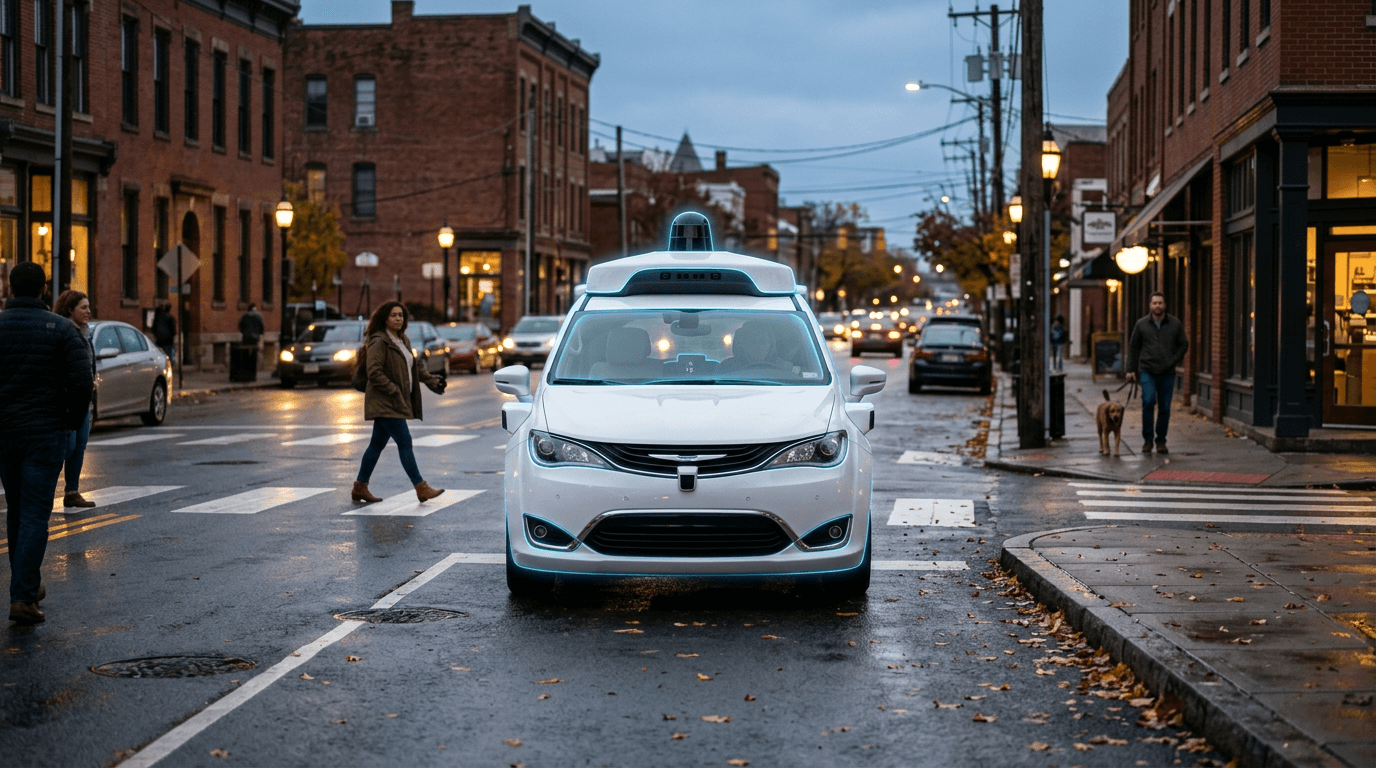

Autonomous vehicles combine sensors (radar, LiDAR, cameras, and sometimes ultrasonic sensors) with perception, prediction, and planning software to navigate without a human driver. Sensor data is fused into a single representation of the environment; machine learning models then detect objects, predict the behaviour of other road users, and generate driving decisions. Approaches differ by vendor: Tesla emphasises vision and neural networks with limited reliance on high-definition (HD) maps, while Waymo and Cruise have relied on multi-sensor suites and detailed maps for geofenced robotaxi services. SAE levels 2–4 range from partial to high automation: level 2 is partial automation under constant driver supervision, level 3 is conditional automation, and level 4 means the system performs all driving without a human fallback, but only within its operational design domain. Full automation under all conditions is level 5.
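The fuse, predict, and plan steps described above can be illustrated with a deliberately tiny sketch. It is not any vendor's method: the `RangeReading` structure, the noise variances, the constant-velocity prediction, and the `min_gap_m` and `horizon_s` thresholds are all assumptions chosen for illustration. Fusion here is a simple inverse-variance weighted average of two distance estimates, standing in for the much richer fusion that production systems perform.

```python
from dataclasses import dataclass


@dataclass
class RangeReading:
    """Distance-to-object estimate from one sensor (hypothetical structure)."""
    distance_m: float  # measured distance to the lead object, in metres
    variance: float    # assumed measurement noise variance, in m^2


def fuse(readings):
    """Inverse-variance weighted average: more precise sensors count for more."""
    weights = [1.0 / r.variance for r in readings]
    total = sum(weights)
    distance = sum(w * r.distance_m for w, r in zip(weights, readings)) / total
    return RangeReading(distance_m=distance, variance=1.0 / total)


def predict_gap(gap_m, closing_speed_mps, horizon_s):
    """Constant-velocity prediction of the gap after horizon_s seconds."""
    return gap_m - closing_speed_mps * horizon_s


def plan(gap_m, closing_speed_mps, min_gap_m=10.0, horizon_s=2.0):
    """Toy planner: brake if the predicted gap falls below min_gap_m."""
    if predict_gap(gap_m, closing_speed_mps, horizon_s) < min_gap_m:
        return "brake"
    return "cruise"


# Illustrative values only: radar is assumed noisier than LiDAR here.
radar = RangeReading(distance_m=42.0, variance=4.0)
lidar = RangeReading(distance_m=40.0, variance=1.0)

fused = fuse([radar, lidar])
decision = plan(fused.distance_m, closing_speed_mps=16.0)
print(f"fused distance: {fused.distance_m:.1f} m, decision: {decision}")
```

With these assumed numbers the fused estimate sits closer to the LiDAR reading because its variance is lower, and the planner brakes because the predicted gap two seconds ahead drops below the chosen minimum. Real systems replace each function with far more elaborate components (tracking filters, learned behaviour models, trajectory optimisation), but the data flow is the same.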

The technology promises to reshape mobility by reducing human error, enabling new service models (robotaxis, delivery pods), and freeing occupants for other tasks. Deployment is advancing in controlled settings: Waymo operates commercial robotaxi services in select cities, whereas Cruise suspended its driverless operations in 2023; Tesla’s Full Self-Driving remains a supervised system that requires an attentive human driver. Long-haul trucking and confined sites (mines, ports) are active pilot areas. Regulatory frameworks are evolving, with some jurisdictions permitting testing and limited commercial use while they define safety and liability rules.

Widespread deployment still faces hurdles. Edge cases (rare scenarios, adverse weather, and complex interactions with other road users) remain difficult to handle reliably. Public acceptance and trust vary. The cost of sensors and compute, the maintenance of HD maps, and unresolved questions of liability and insurance are ongoing concerns. As the industry consolidates and safety evidence accumulates, autonomous vehicles are likely to reach broader adoption first in constrained domains before expanding to general-purpose passenger driving.