Federated Learning for Financial Risk

Financial institutions face a fundamental tension between the need for robust risk models and the imperative to protect customer privacy. Traditional machine learning approaches require centralizing vast amounts of sensitive financial data (transaction histories, credit profiles, insurance claims) into single repositories where models can be trained. Centralization runs into three obstacles: regulations such as GDPR and data residency requirements often restrict or prohibit cross-border data movement, competitive concerns prevent institutions from sharing proprietary customer information, and centralized repositories present attractive targets for cyberattacks. Federated learning addresses these challenges by restructuring how collaborative AI models are developed, enabling multiple organizations to benefit from collective intelligence without sharing their underlying raw data.

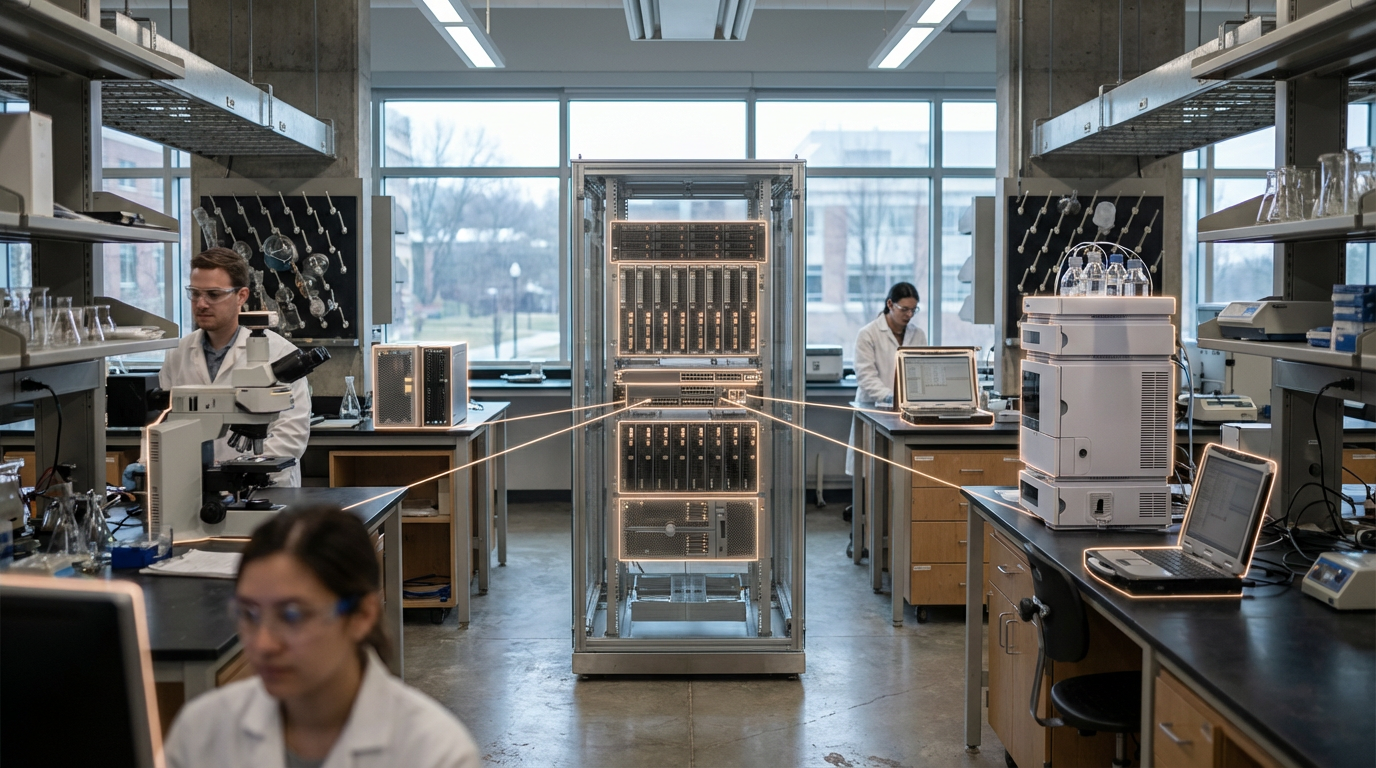

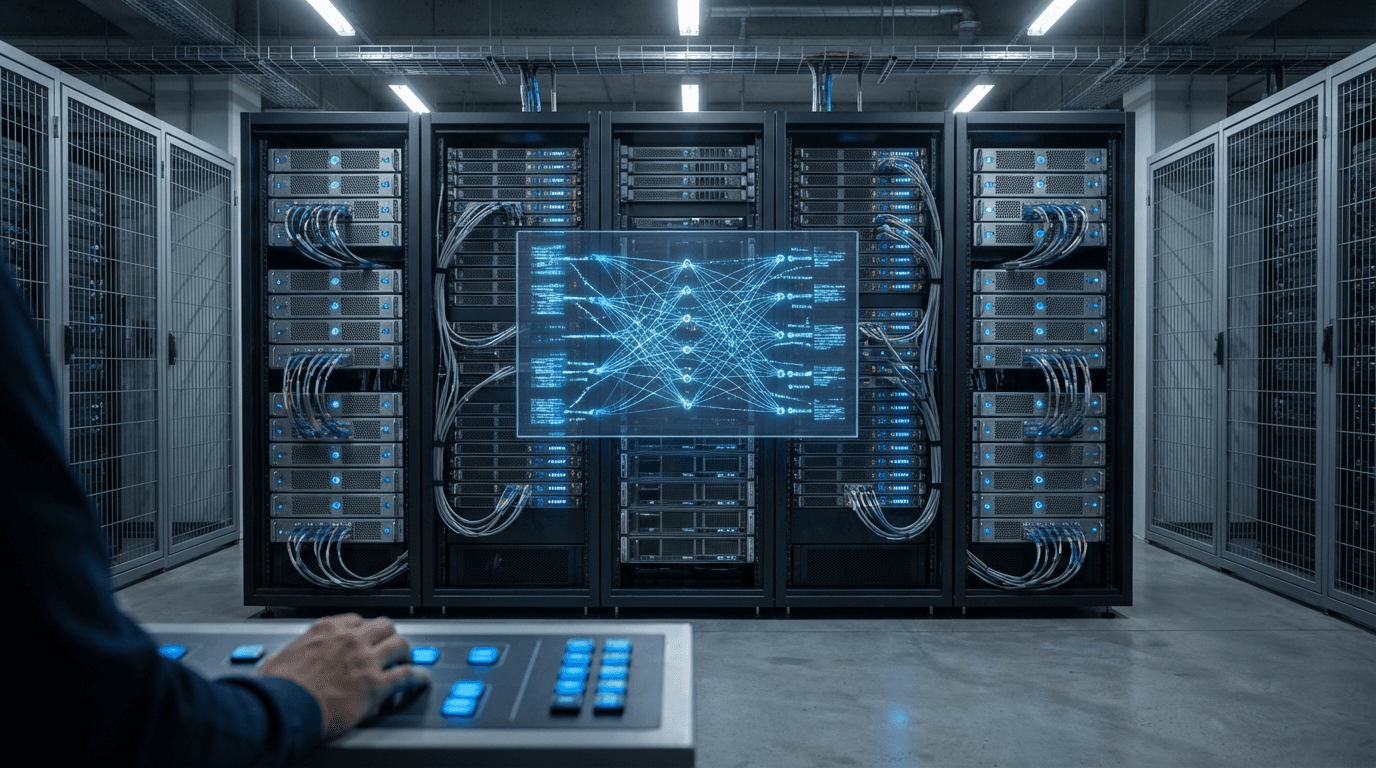

The core mechanism of federated learning involves training machine learning models locally on each institution's own infrastructure, then sharing only the model updates (parameters or gradients) rather than raw data. A central coordinating server aggregates these updates to refine a global model, which is then distributed back to participants for further local training. This cycle repeats until the global model converges or stops improving on held-out data. In financial risk applications, this means a consortium of banks could collaboratively develop stronger fraud detection models by learning from transaction patterns across all members, while each bank's customer data never leaves its secure environment. The approach addresses several industry problems: it gives smaller institutions access to the benefits of large-scale data they could not collect themselves, allows cross-border collaboration despite data localization laws, and lets competitors cooperate without disclosing proprietary information. Because model updates can themselves leak information about the data they were trained on, advanced implementations add techniques such as differential privacy and secure multi-party computation, which make it substantially harder to reverse-engineer individual customer records from the shared updates.
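The train-locally, aggregate-centrally loop described above can be sketched in a few lines. This is a minimal illustration, not a production design: it assumes a toy linear model, synthetic data standing in for each institution's private records, and size-weighted averaging of client weights (the scheme commonly called federated averaging). All function names, the learning rate, and the round count are hypothetical choices made for the example.

```python
import random

def local_update(weights, data, lr=0.1, epochs=5):
    """Run a few epochs of gradient descent on one institution's own data."""
    w = list(weights)
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for x, y in data:
            err = sum(wi * xi for wi, xi in zip(w, x)) - y
            for j, xj in enumerate(x):
                grad[j] += 2 * err * xj / len(data)  # mean squared error gradient
        w = [wi - lr * gj for wi, gj in zip(w, grad)]
    return w

def federated_average(client_weights, client_sizes):
    """Server step: average client weights, weighted by local dataset size."""
    total = sum(client_sizes)
    dim = len(client_weights[0])
    return [
        sum(w[j] * n for w, n in zip(client_weights, client_sizes)) / total
        for j in range(dim)
    ]

def make_client_data(n, true_w, rng):
    """Synthetic stand-in for one institution's private records (assumption)."""
    data = []
    for _ in range(n):
        x = [rng.uniform(-1, 1) for _ in true_w]
        y = sum(wi * xi for wi, xi in zip(true_w, x)) + rng.gauss(0, 0.01)
        data.append((x, y))
    return data

def run_federated_training(clients, dim, rounds=50):
    """Each round: broadcast global weights, train locally, aggregate."""
    global_w = [0.0] * dim
    for _ in range(rounds):
        local_ws = [local_update(global_w, data) for data in clients]
        global_w = federated_average(local_ws, [len(d) for d in clients])
    return global_w

rng = random.Random(0)
TRUE_W = [2.0, -1.0]  # hidden relationship all clients share (assumption)
clients = [make_client_data(n, TRUE_W, rng) for n in (30, 60, 90)]
global_w = run_federated_training(clients, dim=2)
print(global_w)
```

Only the weight vectors cross the boundary between `local_update` and `federated_average`; the `(x, y)` records stay inside each client's list. Real deployments differ in many ways (non-identically distributed data, client dropout, secure aggregation), so this is best read as a picture of the control flow rather than a template.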

Early deployments in the financial sector show promising results across multiple use cases. Credit scoring models trained through federated approaches have shown improved accuracy in detecting default risk by incorporating diverse lending patterns from multiple institutions, while maintaining compliance with privacy regulations. Insurance companies are exploring federated learning for claims fraud detection, where rare fraud patterns that might be invisible to any individual insurer become detectable when learned collectively. Anti-money laundering systems represent another significant application, as suspicious transaction patterns often span multiple institutions and jurisdictions. Research initiatives suggest that federated models can approach, and in some reported cases exceed, the performance of centralized models, and that when combined with techniques such as differential privacy they can offer formal, quantifiable privacy guarantees. As financial services become increasingly digital and data-driven, federated learning offers institutions a way to balance innovation with responsibility, enabling more sophisticated risk models while respecting customer privacy and regulatory boundaries. The technology aligns with broader industry movements toward privacy-enhancing technologies and collaborative ecosystems, positioning it as a likely foundational capability for next-generation financial infrastructure.
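The privacy guarantees mentioned above usually come from differential privacy applied to the shared updates. A common pattern is to clip each client's update to a maximum L2 norm and then add Gaussian noise scaled to that bound before the update leaves the institution. The sketch below shows only that clip-and-noise step; the function names and the `clip_norm` and `noise_multiplier` parameters are illustrative assumptions, and the privacy budget actually achieved depends on accounting across training rounds, which is not shown here.

```python
import math
import random

def l2_norm(vec):
    """Euclidean length of an update vector."""
    return math.sqrt(sum(x * x for x in vec))

def clip_update(update, clip_norm):
    """Scale the update down so its L2 norm is at most clip_norm."""
    norm = l2_norm(update)
    scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    return [x * scale for x in update]

def privatize_update(update, clip_norm, noise_multiplier, rng):
    """Clip, then add Gaussian noise with std = noise_multiplier * clip_norm.

    Clipping bounds how much any single update can influence the aggregate;
    the noise then masks contributions of that bounded size.
    """
    clipped = clip_update(update, clip_norm)
    sigma = noise_multiplier * clip_norm
    return [x + rng.gauss(0.0, sigma) for x in clipped]

rng = random.Random(42)
raw_update = [3.0, 4.0]  # hypothetical local model update, L2 norm 5
noisy_update = privatize_update(raw_update, clip_norm=1.0,
                                noise_multiplier=0.5, rng=rng)
print(noisy_update)
```

Larger values of `noise_multiplier` give stronger privacy at the cost of model accuracy, which is the trade-off behind the performance comparisons with centralized training.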

Related Organizations

A FinTech company dedicated to transforming anti-money laundering (AML) and countering the financing of terrorism (CFT) using federated learning.

Initiator of the FATE (Federated AI Technology Enabler) open-source project, an industrial-grade federated learning framework.

Offers a platform for creating collaborative data ecosystems using federated learning and privacy-preserving technologies.

A community-driven organization building privacy-preserving AI technology, including PySyft for encrypted, privacy-preserving deep learning.

Develops the Flower framework, an open-source, unified approach to federated learning that works with any workload, ML framework, and training environment.

Provides a distributed data science platform that allows algorithms to travel to the data rather than moving the data itself.

Develops silicon spin qubits using advanced 300mm wafer manufacturing processes.

Develops foundation models for robotics (Project GR00T) and vision-language models like VILA.

Provides a privacy-preserving AI platform that enables federated learning for data privacy and regulatory compliance.