Your Vault is Your Moat

Issue 138 · April 20, 2026

Contextual knowledge, design systems, and outside perspectives

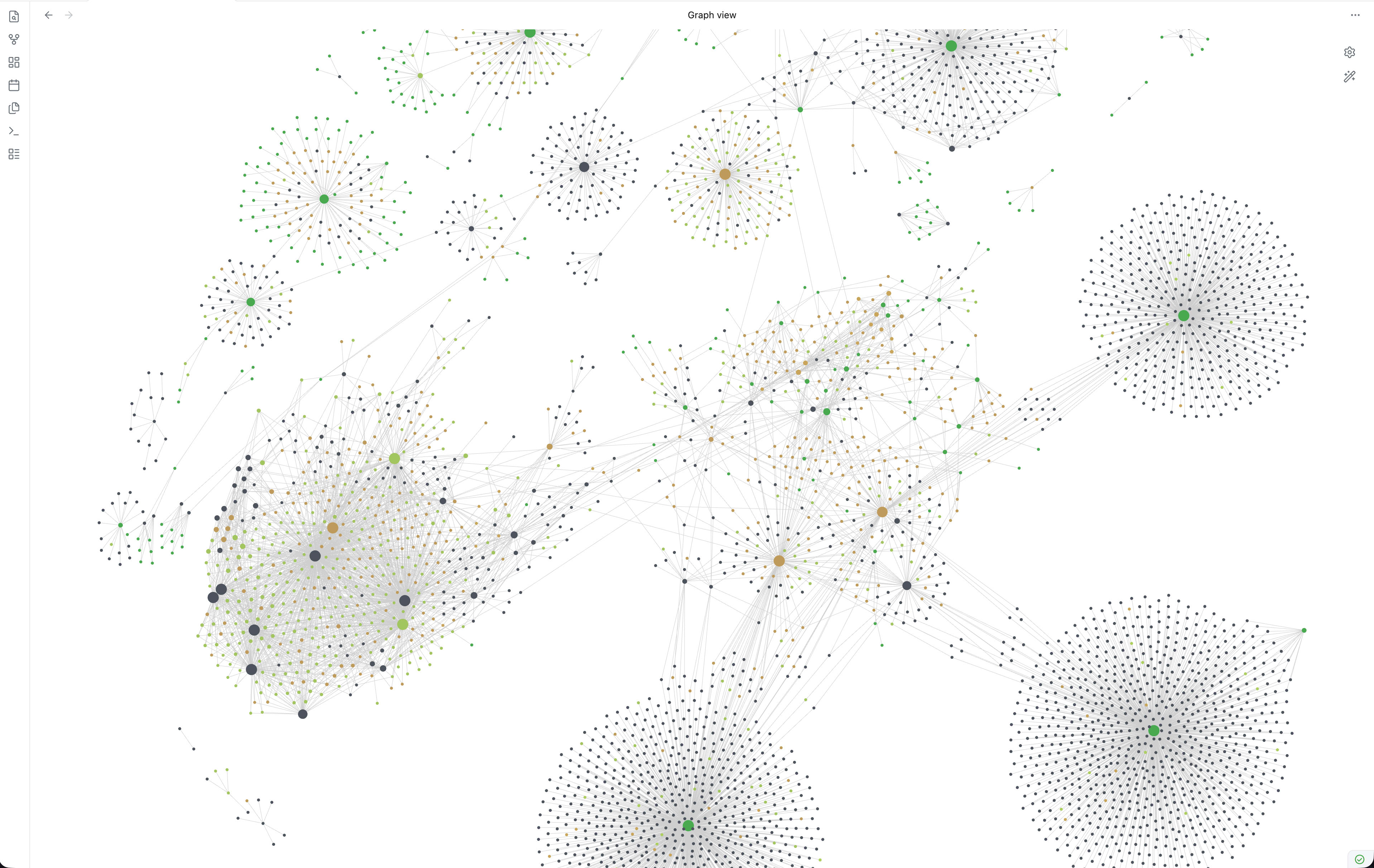

I’ve been experimenting with changing how I work with the help of AI for a couple of years now, and I realize few of those experiments have made it into the newsletter. My reflections here usually revolve around the collective state of things in AI, but sometimes it’s useful to step back from the big picture and pay attention to the trees. What’s obvious to some isn’t to others, and I’m often reminded that how we work at Envisioning is anything but typical. My context is unlikely to be like yours – my work is independent, self-guided, and largely self-motivated – and while the shape of your work will be different, I hope some of this resonates.

Contextual knowledge

The gap between what you know and what AI knows can be surprising. AI has the average of the world’s information baked into its core, whereas you and I have spiky and unpredictable knowledge of the world. The organizations you work with are probably similar – they contain institutional and tacit knowledge from countless interactions and data, in a way that is rarely machine readable.

Envisioning is small: me and four full-time remote staff working through dozens of software tools with hundreds of collaborators around the world. Repeatable processes and clear methodologies have been our approach for fifteen years, but where to manage the company’s information is a perennial challenge. Most of our shared written knowledge lives in Slack, Google Docs, and, for a while, Notion – none of which are particularly accessible to AI. Frontier models become most useful when you fill the context window with information only you have. Knowing what to put in context can feel like alchemy, and because of the newness of the field, you can only really learn by doing.

Markdown is quietly becoming the substrate for reliable agents. The most stable way of guiding agents to date is skills: text instructions with occasional code, which can be composed and connected in various contexts and shared by different models. For agents to be useful, they first need to be reliable, so preparing your organizational knowledge into machine-readable formats seems inevitable. The difference between storing your documents as .doc files on OneDrive versus markdown files on Git is probably a 10x difference in how efficiently a model can work with them, maybe more. Markdown is pure content, no overhead. Everything that matters to the company is quickly moving into a shared markdown environment – methodology documents, writing style guides, our entire website copy, service lines, project history. Everything that can be managed as text should feed your vault. The alchemy gets easier when the ingredients are already on the shelf.
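To make the idea of a vault feeding the context window concrete, here is a minimal sketch in Python. It is not how Envisioning does it. It assumes a folder of `.md` files, and the function name `build_context` and the `max_chars` budget are hypothetical choices for illustration only.

```python
from pathlib import Path


def build_context(vault_dir: str, max_chars: int = 20_000) -> str:
    """Concatenate markdown files under vault_dir into one prompt-ready string.

    Assumption: a plain character budget stands in for a real token count.
    """
    sections = []
    used = 0
    # Sort paths so the ordering is stable and repeatable across runs.
    for path in sorted(Path(vault_dir).rglob("*.md")):
        text = path.read_text(encoding="utf-8").strip()
        # Label each file with its relative path so the model can tell sources apart.
        section = f"## {path.relative_to(vault_dir).as_posix()}\n\n{text}\n"
        if used + len(section) > max_chars:
            break  # stop once the (hypothetical) budget would be exceeded
        sections.append(section)
        used += len(section)
    return "\n".join(sections)
```

Calling `build_context("path/to/vault")` returns a single string you could paste ahead of a question. Non-markdown files are skipped, which is the practical point: whatever already lives as text is usable with almost no extra work.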

Design systems

Visual identity is code. Brand guidelines – typography, colors, composition, spacing – everything that composes your visual consistency can and should be expressed in a machine-readable format. Last Friday, Anthropic released Claude Design, which walks you through creating a programmatic design system from scratch and then replicating it across different media. I shared our website repo and was guided through a dozen contextual questions that helped translate Envisioning’s implicit design language into an explicit, replicable system. The resulting system can then generate slides, landing pages, prototypes, and just about anything else, grounded in your visual rules. You can replicate this behavior in just about any AI tool, not only Claude, as long as you ask it to infer, formalize, and document your existing design assets. Worth trying, if only to see your own design language described back to you.
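As a rough illustration of what "visual identity as code" can look like, the sketch below stores a few design tokens as data and renders them as CSS custom properties. Every value is a placeholder, not Envisioning’s actual brand, and the token names and the `to_css_variables` helper are hypothetical. This is not the format Claude Design produces.

```python
# Hypothetical design tokens: all names and values are placeholders.
TOKENS = {
    "color": {"primary": "#1a1a2e", "accent": "#e94560"},
    "font": {"body": "Inter, sans-serif", "heading": "Georgia, serif"},
    "space": {"sm": "8px", "md": "16px", "lg": "32px"},
}


def to_css_variables(tokens: dict) -> str:
    """Flatten two-level tokens into CSS custom properties on :root."""
    lines = [":root {"]
    for group, values in tokens.items():
        for name, value in values.items():
            # e.g. group "color", name "primary" becomes --color-primary
            lines.append(f"  --{group}-{name}: {value};")
    lines.append("}")
    return "\n".join(lines)


print(to_css_variables(TOKENS))
```

Once the rules live in one structured place, the same tokens can be handed to a model as context or rendered into other targets, such as slide themes, without redefining the brand each time.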

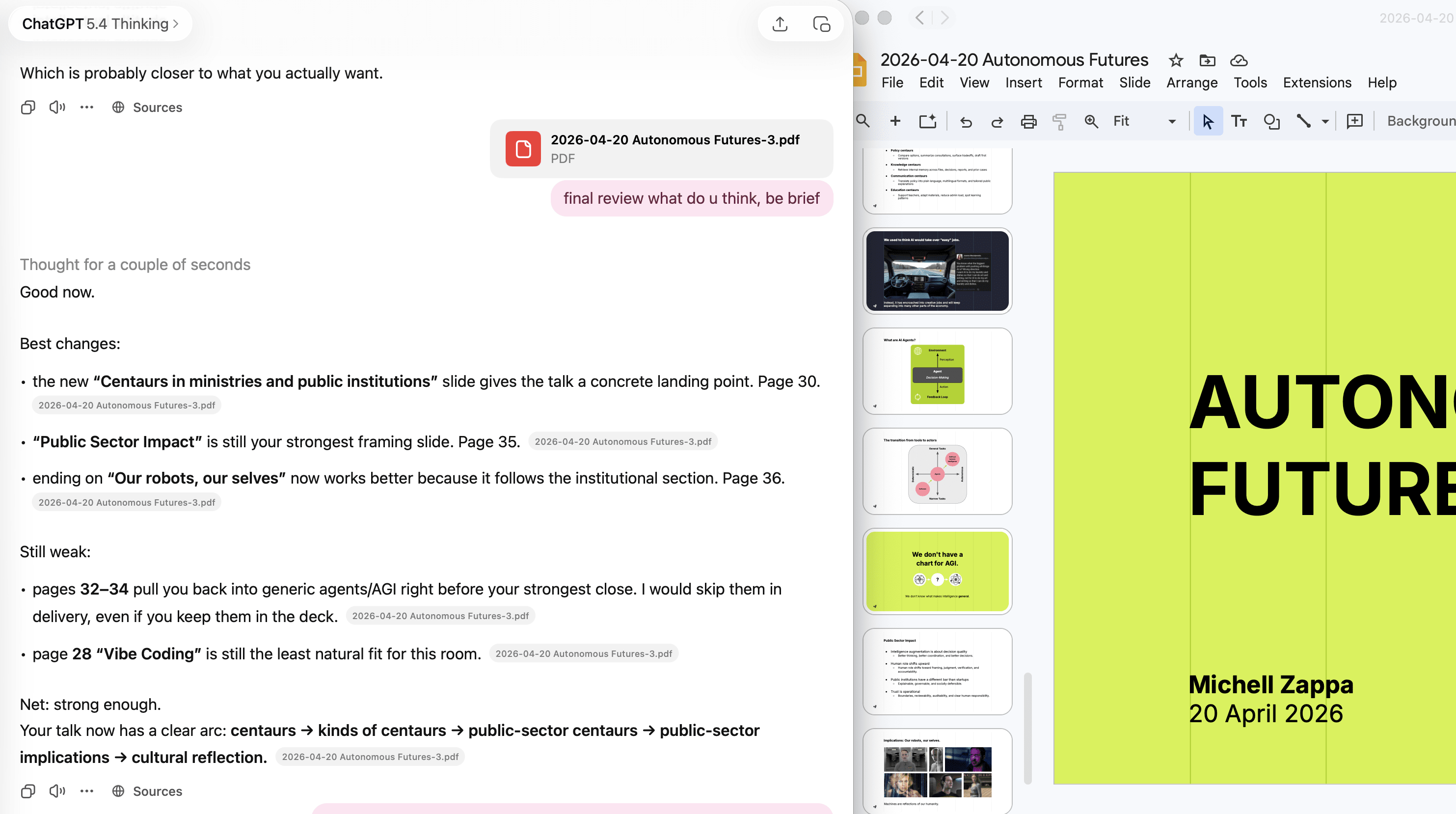

Outside perspective

I present and speak at various functions. Over the years I’ve landed on a handful of decks with fleshed-out ideas that I update and remix whenever there’s an opportunity to speak. Because my attention is finite and our own assumptions quickly become invisible, I’ve made a habit of running every presentation by AI as part of preparation. Not creating presentations from scratch – that requires strong contextual knowledge and design systems to avoid mediocrity, and uniqueness of ideas is not yet something we should expect from language models. Instead, AI is useful precisely because it reflects the median reader: a quick way to test whether your ordering makes sense, where slides can be dropped, and where messaging is muddy. Sometimes it helps to export the speaker notes, but in general I export a PDF of the slides, drop it into a chat with context about the audience and program, and use the conversation to sharpen the deck.

Three small habits, no grand theory. Just the shape of the work today.

MZ

Pope Leo dropping bangers on Twitter.

Videos

Agents Building Agents While the Kids Sleep (54 min)

Jesse Genet, YC founder and homeschool mother of four under five, on running 11 Claude-based agents across coding, curriculum, and household management. The key architectural move: she trained her agents to spin up new agents autonomously, so she can delegate at the prompt level while offline.

AI Agents Will Expose Everyone’s Secrets (64 min)

George Mason economist Tyler Cowen, in conversation with Harvard’s Jonathan Zittrain at the Berkman Klein Center, argues that unreleased models from Anthropic and OpenAI can already compromise virtually any human system, and that a “good enough” open-source equivalent is 12-18 months away. The window to patch critical infrastructure is the same window before chaos.

OpenClaw’s Security Debt, by the Numbers (44 min)

Peter Steinberger, OpenClaw creator and now OpenAI staff, reports 1,142 security advisories in five months (16.6 per day, 99 critical), twice the rate of the Linux kernel. He also recounts hooking Nvidia’s NemoClaw sandbox up to Codex security and watching it find five escape routes in thirty minutes.

Agents Improvise; Chatbots Give Up (18 min)

OpenClaw creator Peter Steinberger on how his WhatsApp agent autonomously solved a voice-message format it was never built to handle, in 9 seconds, while he watched in Marrakech. The project went viral after he accidentally left it running unsupervised on a public Discord overnight; Shenzhen now subsidizes businesses built on it.

Winning AI While Hollowing Out Workers (16 min)

Former US Secretary of Commerce Gina Raimondo argues that America’s workforce transition infrastructure (unemployment insurance designed in the 1920s, employer-agnostic college subsidies, no wage-replacement support) is structurally incompatible with AI-scale displacement. Her fix centers on a government-industry bargain where employers define needed skills and public systems fund the path to them.

Claude Design vs. Lovable, One Prompt (12 min)

UI designers Murat Bayral and Can Hoskan ran an identical single-prompt brief, a screenshot plus one MD file, through Claude Design (running Opus 4.7) and Lovable 45 minutes after Claude Design’s announcement. Claude Design produced a hi-fi interactive prototype that Bayral, a senior designer, called immediately Figma-ready; Lovable’s output was “acceptable” but read as a WordPress theme by comparison.

The Karpathy Loop Comes for Your Org (27 min)

Nate B. Jones breaks down how Karpathy’s 630-line auto-research script, which ran 700 experiments overnight and cut training time 11%, has now been extended to harness engineering: a meta-agent rewrote task-agent scaffolding overnight and claimed first place on two benchmarks, with the entire compute bill under $300.

Embeddings, Weather, and What LLMs Can’t Do (28 min)

Google DeepMind VP of Research Raia Hadsell walks through two underreported bets: Gemini Embedding 2, a fully omnimodal model encoding text, video, audio, and PDFs into a single vector, and DeepMind’s AI rainfall prediction work with the UK Met Office that outperformed physics-based models.

Newsletter

Follow us for weekly foresight in your inbox.