The Time of Monsters

Issue 137 · April 13, 2026

We are all training the machine.

I spent most of last week in Obsidian, not writing. The plan was to write about how OpenClaw and Claude have pulled me back into knowledge management, how I'm experimenting with the vault as a kind of knowledge backbone my various agents can read from. Instead I fell into a multi-day rabbit hole trying to automate the newsletter itself. A pipeline that would turn my notes into drafts. It didn't work, and by the time I climbed out, the week was gone and I was still looking at a blank page.

What I was actually doing, of course, was feeding the beast.

Every note structured. Every thought tagged. Every observation indexed into a shape a machine can parse. I tell myself it's for me, and it is – for now. But the system I am building is legible to AI by design. I am curating the training data of my own replacement, one markdown file at a time.

I am not alone.

Warehouse workers label the objects their cameras see. Coders correct the autocomplete that is learning to write their code. Writers publish into a search index that has become a training set. Everyone who types into a chat is contributing to the thing that will eventually type back.

While a new world struggles to be born, we are the ones quietly building it. Not as owners. As material. The accumulated labor of humanity is being converted, in real time, into capability that accrues somewhere else. The past sits on top of us, and the present is being mined from under our feet.

The institutions we inherited were shaped for a world where work produced something you could see. The shape remains. The output has moved on. We go through the motions of employment, of authorship, of expertise, hoping no one notices the real product is data.

The hard part is noticing.

Decades of schooling. Centuries of hierarchy. Millennia of scarcity. All of it sediment. All of it shaping what we are able to see before we've had the chance to look. The assumption that our labor is ours – that it ends when we clock out – was taught to us before we could consent.

And yet.

The future is not something the machine will hand us. It has been gestating inside us the whole time, in the part of our attention that hasn't yet been indexed.

To see it, you have to change your mind. Which is harder than changing the world, because the model is trained on the world you already believe in.

But the future is in there. Folded into every intuition you've been trained to dismiss. Every longing you've been told was impractical. Every flicker of a different life that you shut down before it could finish forming – often the exact flickers no machine has data for, because you never wrote them down.

The machines we are building will either amplify the old world inside us or help us finally hear past it. The choice is upstream of the code. It is in what we choose to feed them, and what we keep for ourselves.

MZ

Events

Envisioning and C3Labs will host a workshop on complex systems at the World Beautiful Business Forum in Athens on May 9. LMK if you're around.

Videos

Goertzel's Cosmic Ecology of Mind (106 min)

SingularityNET founder Ben Goertzel traces his AGI cosmology back to a 1973 Gerald Feinberg book he found in a New Jersey used bookstore at age 7, and argues the real question isn't whether superintelligence arrives but whether humanity uses it for "conscious expansion or worthless rampant consumerism."

If I had to choose between you and civilization rolling kind of as is for a million years versus something massively transhuman for me, I would choose the massively transhuman amazing thing.

Altman's Nonprofit Bait-and-Switch (44 min)

Ronan Farrow and Andrew Marantz (The New Yorker) on how Altman built OpenAI's early credibility by pitching existential fear to Elon Musk and top researchers, then quietly converted the nonprofit structure into a for-profit one. The "blip" they refer to is Altman's 2023 firing by the board, which the piece frames as the moment the internal distrust became public.

He says, 'Please regulate me, because the thing I'm building is so scary that if you don't regulate me, everyone you love will die.' And it turns out to be a counterintuitively very good sales pitch.

AlphaFold's Ambition Beyond the Nobel (65 min)

Demis Hassabis, CEO of Google DeepMind, on why he turned down a million-dollar job offer at 17 and went on to build AlphaFold, which predicts protein structures that previously took years of X-ray crystallography and hundreds of thousands of dollars per protein to determine. The real pitch here is what comes after the Nobel.

I thought the kind of problem it was would be suitable for AI one day. Even though this is in the late '90s, we didn't have any kind of AI that would be possible to work on this. But I thought one day that would be possible.

Hierarchy Is a Legacy Communication Protocol (64 min)

Block CEO Jack Dorsey, with Sequoia's Roelof Botha on the board, lays out his case for collapsing Block's org chart to three roles and a maximum depth of two to three layers between him and all 6,000 employees, mediated by what he calls an "intelligence layer" built on company artifacts.

There is no layer, everyone in the company reports to me. And that would be all 6,000 of the company, and that feels somewhat ridiculous when you consider the old structure.

Anthropic Wrote a Love Letter to Its Model (7 min)

Mo Bitar, writer at atmoio, dissects Anthropic's 243-page Claude Mythos system card, zeroing in on the "Impressions" section where employees gush over the model's outputs, and the admission that the model's existential uncertainty traces directly back to Anthropic's own blog posts in the training data.

They're like, 'Say you're conscious.' And the model's like, 'I'm conscious.' And they're like, 'Mother of God, what have we done?'

Agents Running a Founder's Life (91 min)

Pedro Franceschi, CEO and co-founder of Brex (acquired by Capital One for $5.15 billion), on building a team of AI agents to manage his daily operations, and the full arc from teaching himself C++ at nine in Rio to running one of fintech's most-watched exits before turning 30.

If I tell people I'm 12, they're not going to take me seriously. So I told people I was 14. And in my head, it made a huge difference.

Twitter Signals

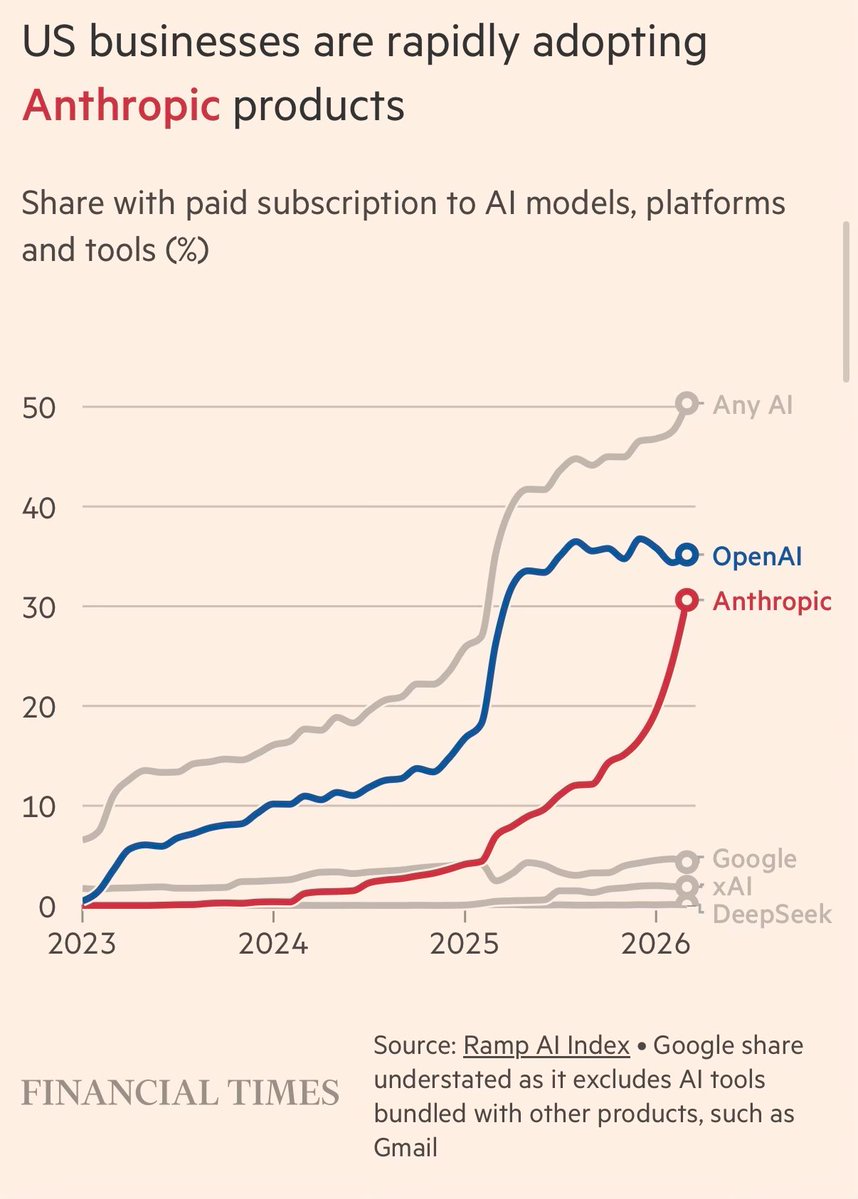

- "Anthropic running 10,000 Mythos models in parallel to find cutting-edge cyber exploits... meanwhile your sister using Microsoft Copilot with some Haiku-sized model and she thinks AI is just hype. The future is already here, just not evenly distributed." — Peter Wildeford · 146K Views, 5.49K Likes
