Open Weights and Closing Loops

Issue 134 · March 23, 2026

Welcome to another edition of Artificial Insights, your autonomous intelligence digest. This week felt less like a product cycle than a coordination problem: models improving models, agents supervising agents, and humans trying to work out which parts of the loop can actually be outsourced.

Start with the mood. François Fleuret's observation, amplified by Yacine, is the cleanest line I saw all week: you may be able to outsource your thinking, but you cannot outsource your understanding.

That lands at exactly the moment when people are getting intoxicated by the surface area of the tools. Cody Schneider's ecstatic post about running cheaper models inside the Claude agent harness captures the other side of the mood perfectly: a genuine sense that cloud-based agents can now sit in recursive loops, optimize against live business data, and keep working while you sleep. Both reactions are correct. The leverage is real. So is the illusion that leverage and comprehension are the same thing.

At the same time, Google's new Science paper on "the next intelligence explosion", shared by Benjamin Bratton, argues against the old singularity fantasy altogether: not one godlike mind, but a plural, social, entangled intelligence system emerging through networks of humans and machines. That feels much closer to the world we are actually building: messy, collective, recursive, and impossible to reduce to a single actor or lab.

Then there is the economic absurdity of the whole thing. One throwaway comparison, that pornography still generates roughly twice the annual revenue of AI, is funny mostly because it punctures so much inflated rhetoric in one sentence. We are supposedly living through the most important technology transition in history, yet the business models remain oddly unresolved. Sam Altman is reportedly in Washington trying to secure public backing for AI infrastructure while the market still talks about trillion-dollar valuations. If that sounds contradictory, it is. But contradictions are doing a lot of work right now.

Which brings me back to Fleuret's point. The bottleneck is no longer just access to models. It is whether you can turn outputs into understanding, understanding into judgment, and judgment into systems that compound. The real divide is opening up between people who use AI to deepen their grasp of reality and people who use it to simulate having one. That gap is going to matter a lot more than benchmark deltas.

MZ

What if agents could learn from other agents and exchange better ways of working with you? To explore whether that's feasible, I built a community database of 200+ proven "plays" (skill combinations that OpenClaw agents actually use) and a method for Claws to exchange plays based on how you use yours. You can take a peek at how people are using their agents here: https://hivemind.envisioning.com.


If you want to install it on your Claw and help kick off their recursive self-improvement, you can do so here: https://clawhub.ai/michellzappa/agent-hivemind

Video Links

(67 min)

Karpathy on the shift from writing code to orchestrating agents, auto-research pipelines, and why the "loopy" era of AI changes what it means to program.

(61 min)

Evans dissects why raw model access is becoming a commodity and where the real defensibility lies — distribution, interfaces, and trust.

(150 min)

Deep dive into the hardware supply chain constraints that determine who can actually train frontier models.

(44 min)

How Ramp deploys AI agents across finance operations at scale — one of the clearest examples of agentic AI in production.

(18 min)

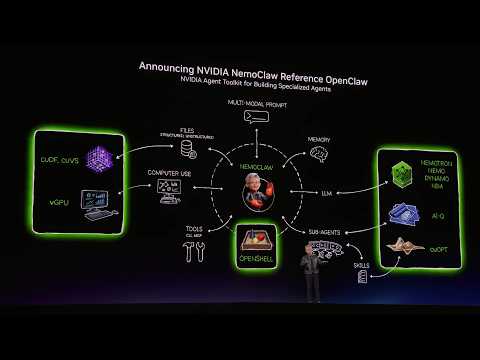

Jensen on stage introducing NVIDIA's OpenClaw integration and what it signals about enterprise agent infrastructure.

(9 min)

Bernie Sanders interrogates Claude on AI's impact on jobs, healthcare, and democracy. Surprisingly sharp exchange.

(64 min)

Practical walkthrough of getting OpenClaw running end-to-end — useful if you're setting up your own agent system.

Quick Links

-

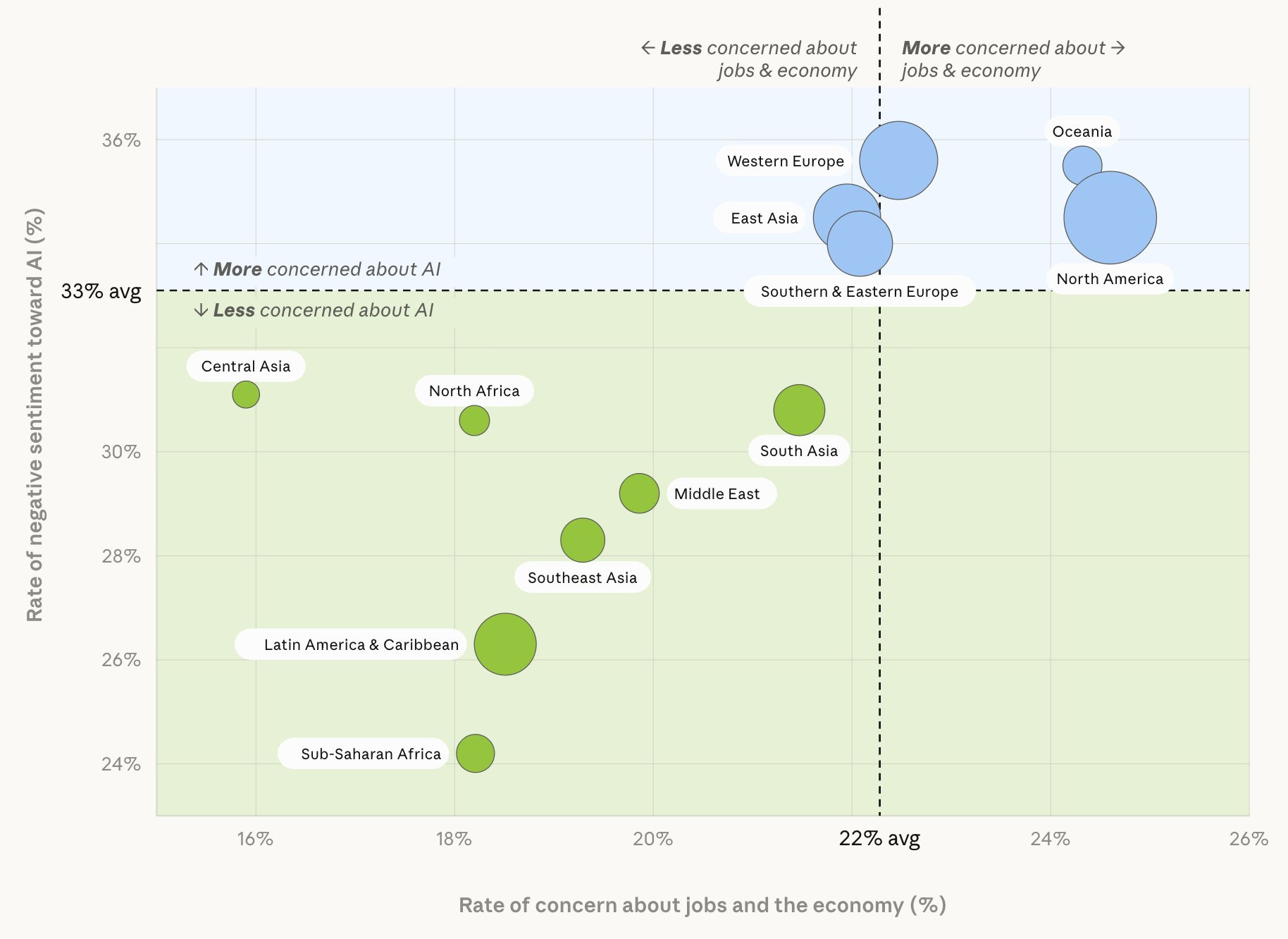

Anthropic's 81,000 interview dataset — Anthropic published survey findings from their large-scale interview project

-

Google DeepMind: measuring progress toward AGI — A cognitive framework for quantifying how close we are

-

Mistral Forge: frontier models grounded in proprietary knowledge — Mistral's bet on enterprise-specific model grounding

-

Meta's omnilingual machine translation for 1,600 languages — A single system that translates between 1,600 language pairs

-

Tokenmaxxing: the new AI agent economy — NYT on agents running recursive loops and burning tokens at scale

-

World Models might matter more than LLMs — Not Boring on why simulation-first intelligence could be the real unlock

-

Bratton et al.: "Agentic AI and the Next Intelligence Explosion" in Science — Benjamin Bratton's new paper argues against singularity, for plural social intelligence

-

Fleuret on outsourcing thinking vs. understanding — The cleanest articulation of why AI leverage ≠ comprehension

-

Yacine on the same thread — "You can outsource your thinking but you cannot outsource your understanding"

-

Ethan Mollick on Claude Cowork/Dispatch — First impressions after using Anthropic's new collaborative agent interface

-

Pi Mono: lightweight coding agent in a single repo — Minimalist open-source coding agent by Mario Zechner

-

PicoClaw: OpenClaw reimplemented in Go — 25K stars already; runs on minimalist hardware


Via Anthropic.

If Artificial Insights resonates with you, please help us out by:

- Subscribing to the weekly newsletter on Substack.
- Following the weekly newsletter on LinkedIn.
- Forwarding this issue to colleagues and friends.
- Sharing on your socials.

Artificial Insights is written by Michell Zappa, CEO of Envisioning.
